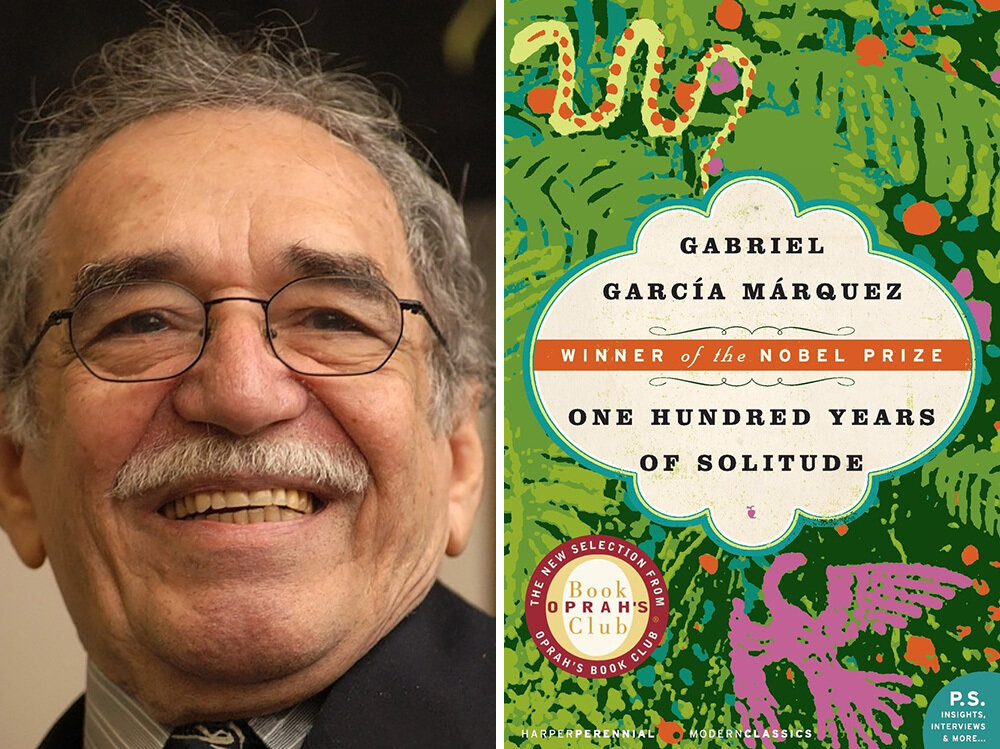

Yuan-Sen Ting (丁源森)

The Ohio State University

Expediting Discoveries in Astronomy with A.I. Agents

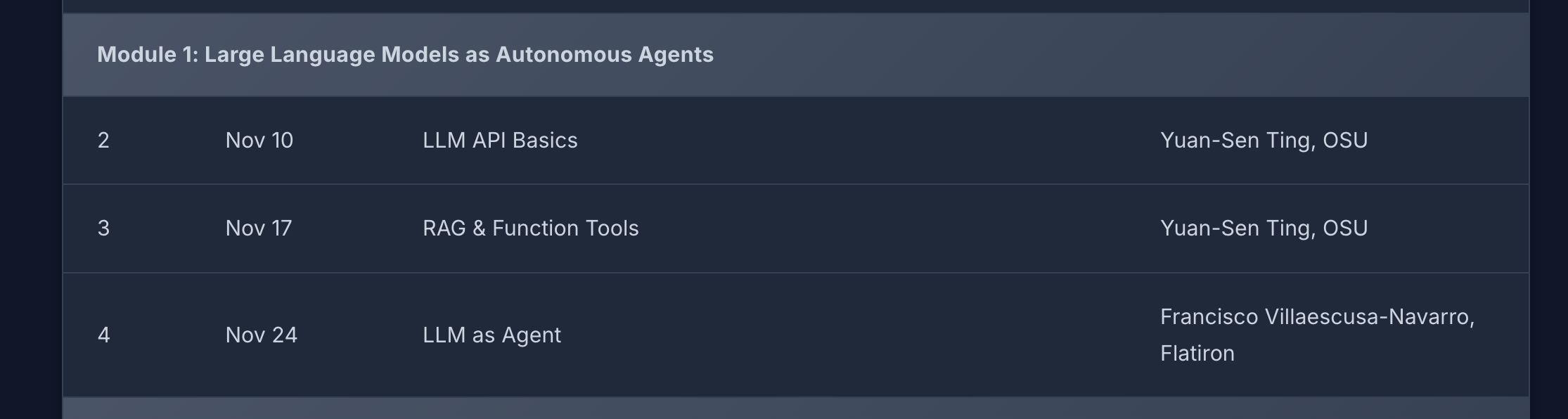

NSF awarded over $200 million for AI Research Institutes

~ 2 centers

~ 2 centers

Physical Sciences

~ 3 centers

7 centers x 15M ~ 100M

Environmental Sciences

Biological Sciences

Hype, myth, or real deal?

Why hasn't astronomy had its "AlphaFold" moment yet?

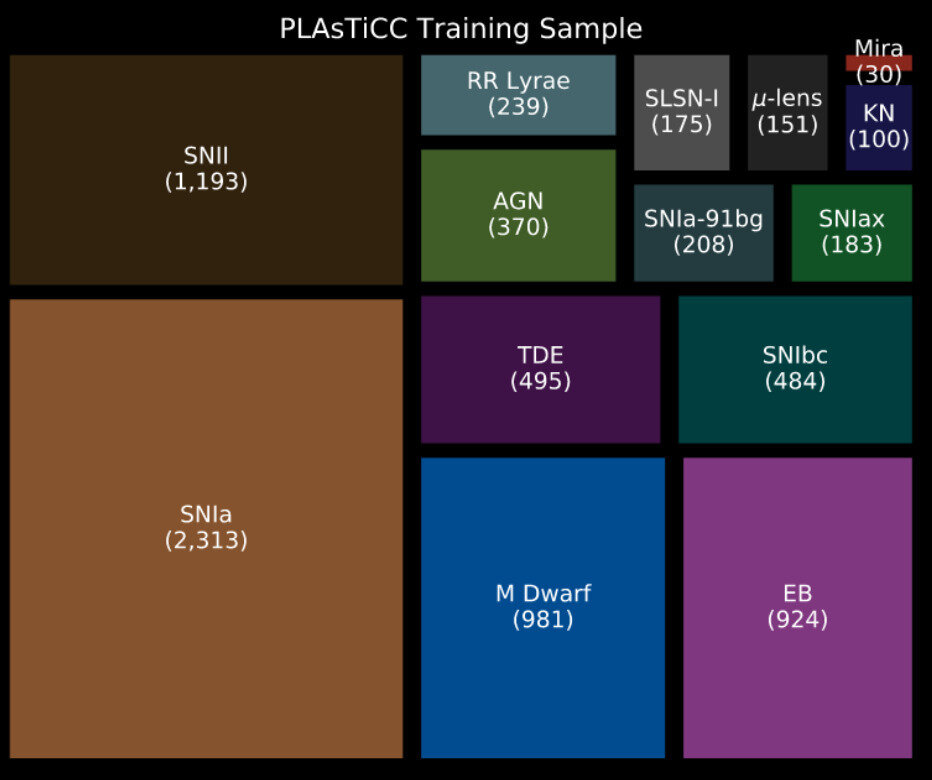

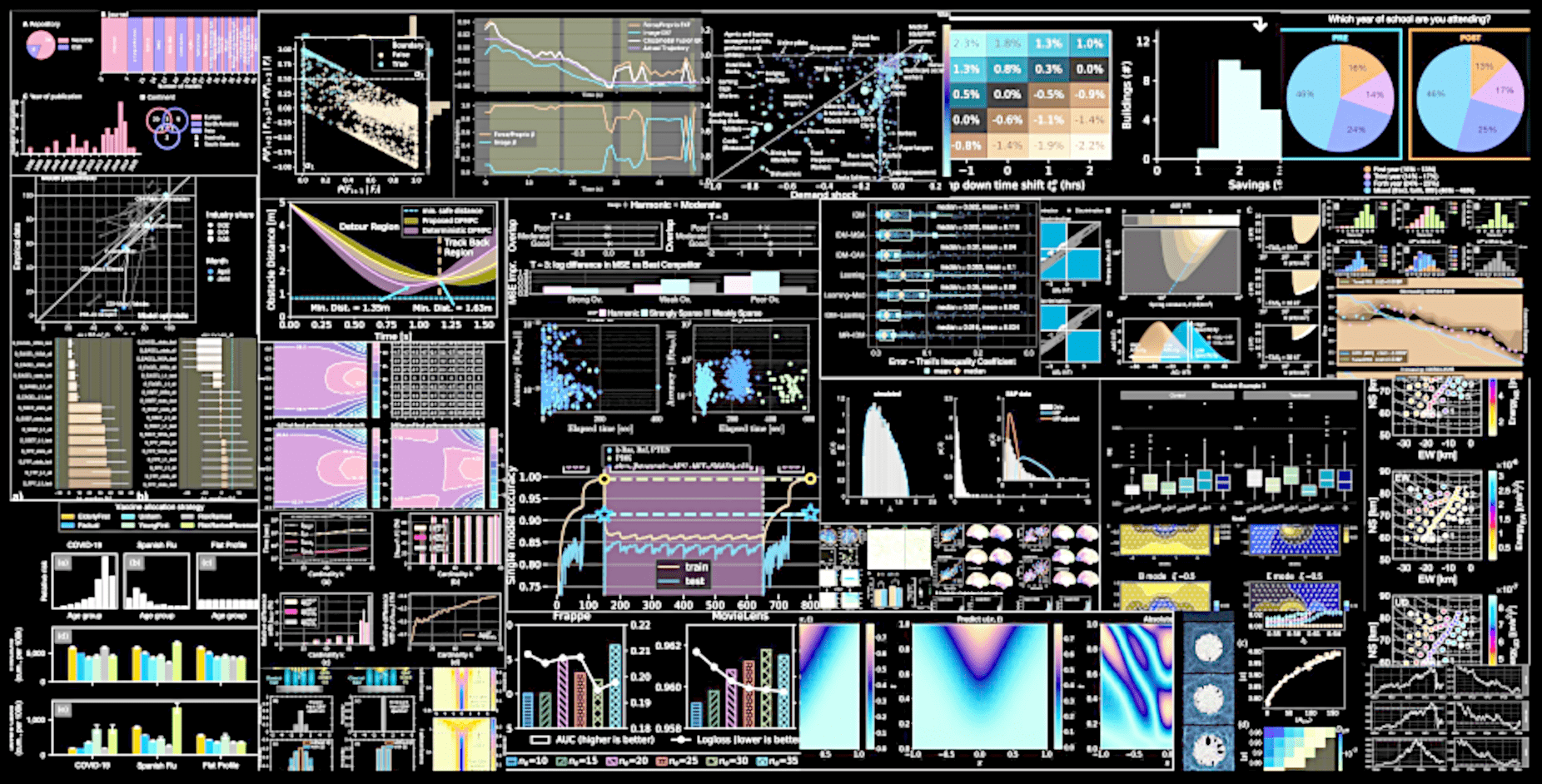

Most AI in Astronomy focuses on extending statistical methods

[Figure: constraints on Dark Matter Density vs. Growth Amplitude]

E.g., simulation-based inference

Cheng, YST+, 2020

or building effective workflow control / optimization

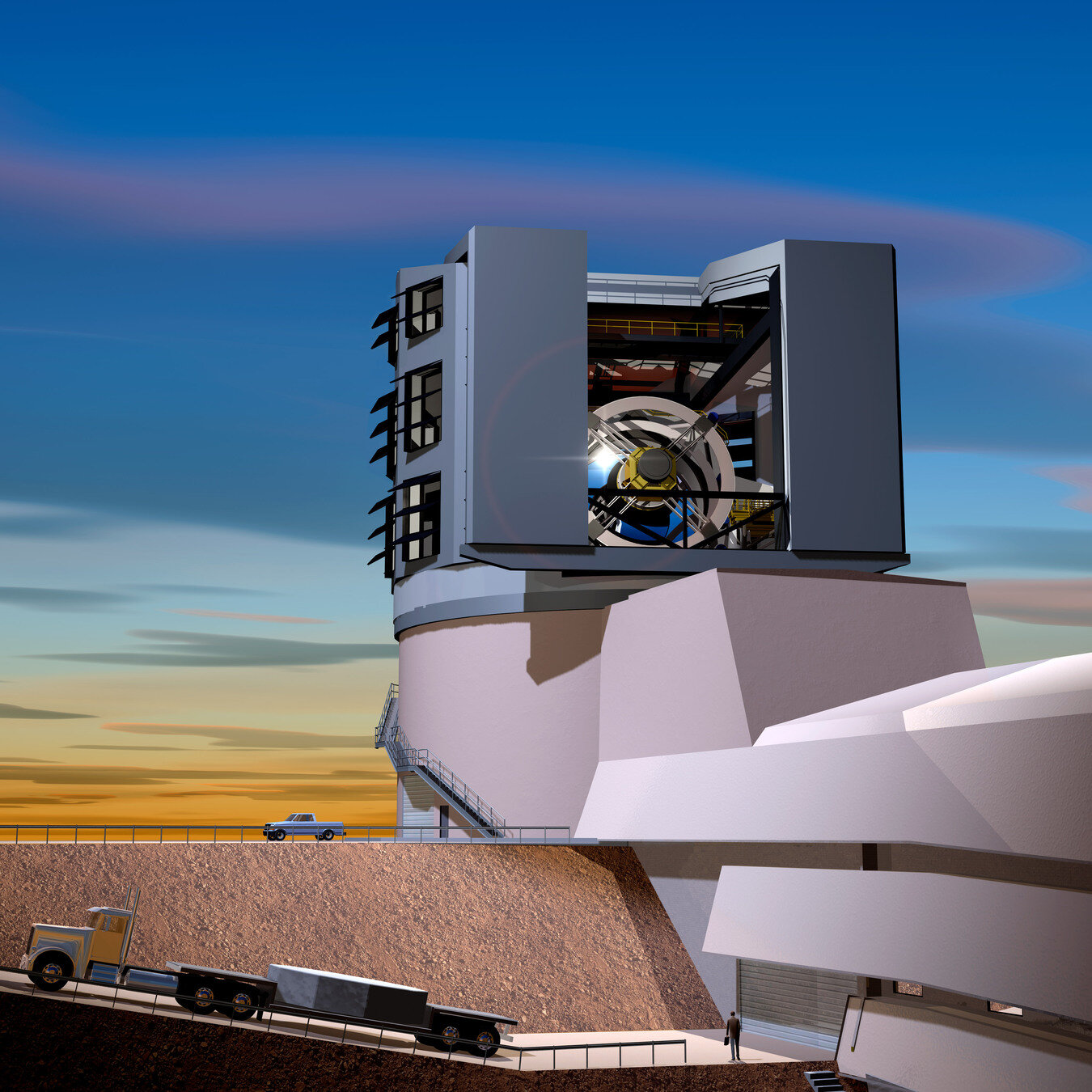

Rubin Observatory

The goal here is NOT just solving things faster that we can already solve,

but solving astrophysical problems that would otherwise be too complex to solve

Applying A.I. to individual tasks

will have limited impacts in astrophysics

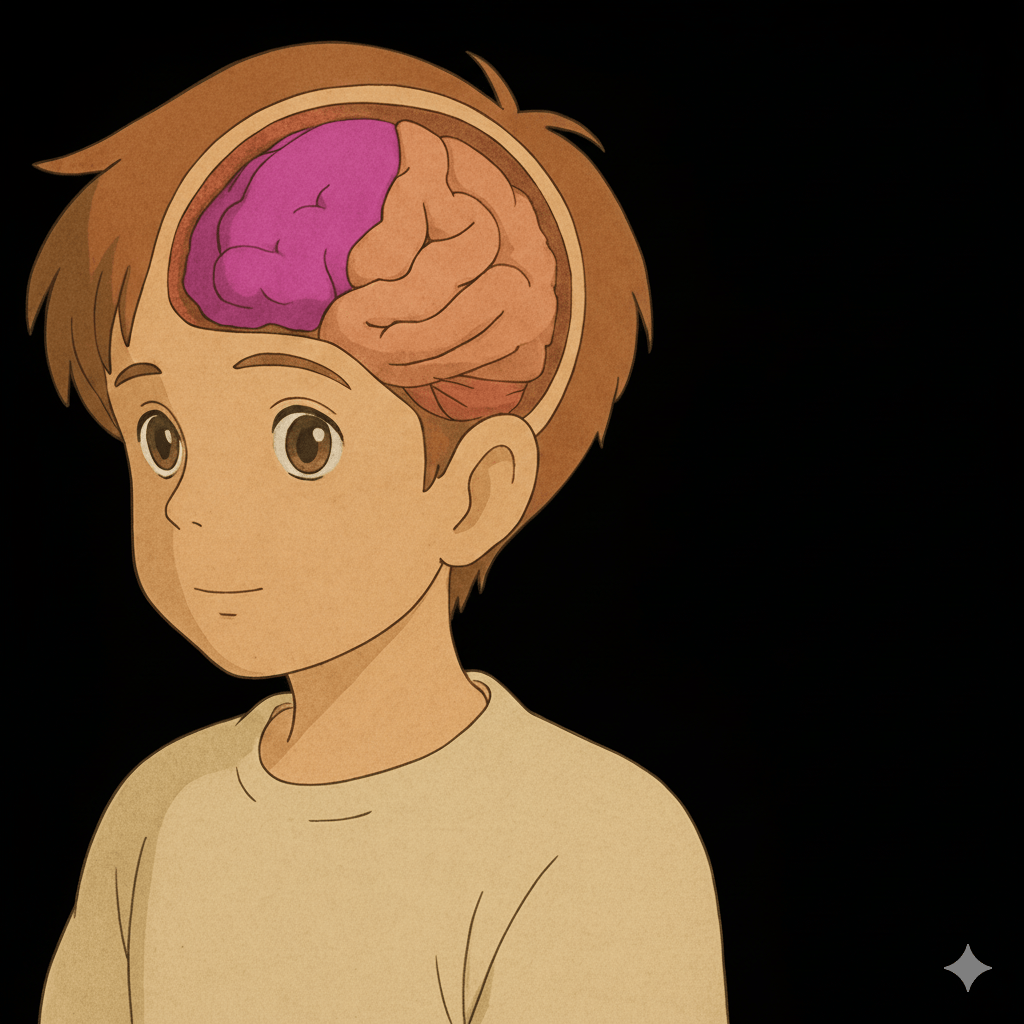

The complexity of astronomy is too low for AI

My niece

Highly non-Gaussian

Weakly non-Gaussian

Cosmic large-scale structure

Astronomy is not biology

Data / Observation

Theory / Hypothesis

Analysis Pipelines

True

False

Biology faced fundamental bottlenecks from individual tasks

Data / Observation

Theory / Hypothesis

Analysis Pipelines

True

False

AlphaFold

Astronomy already has a successful standard model

Data / Observation

Theory / Hypothesis

ΛCDM

True

False

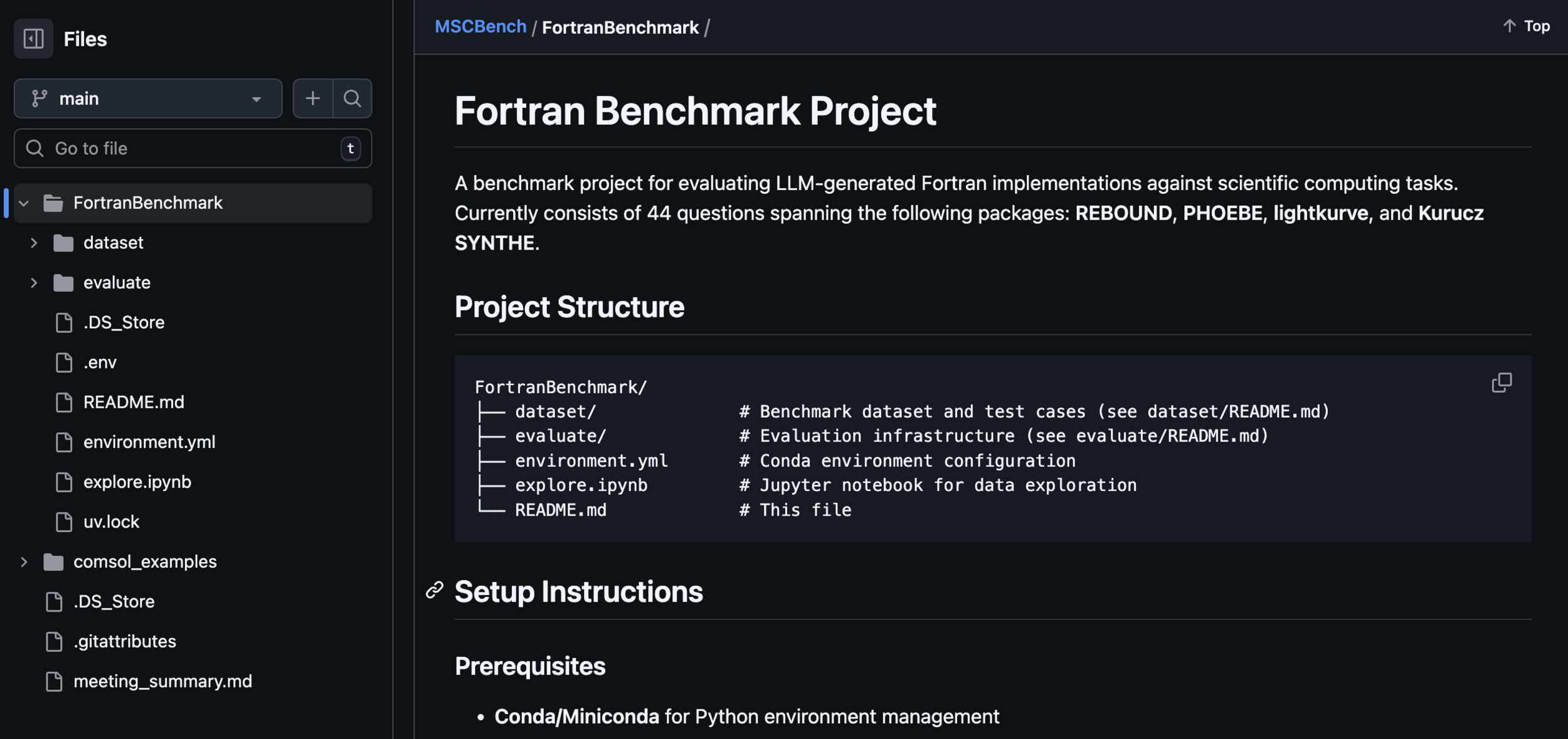

Toward Agentic Research for Astronomy

Data

Theory

State of the research

Making "plans"

Making "hypotheses"

Beyond just individual task optimizations

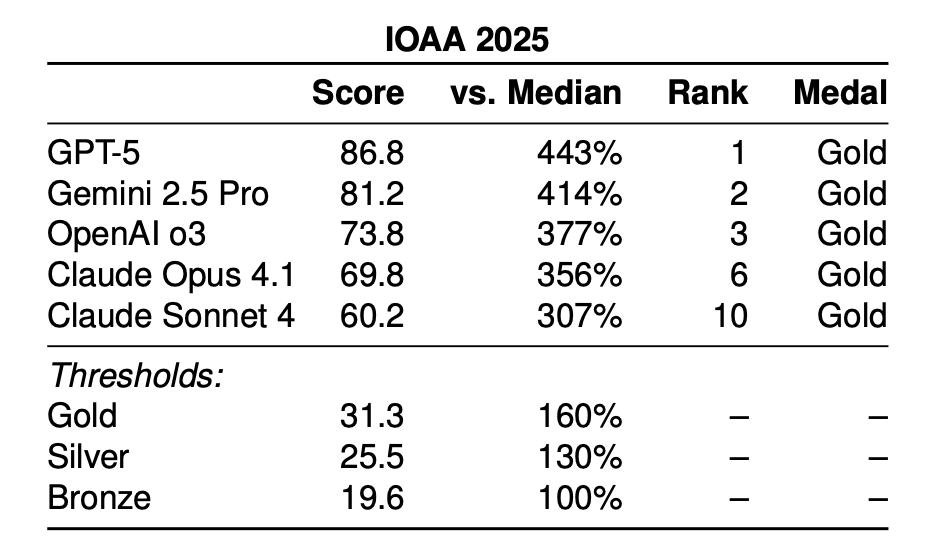

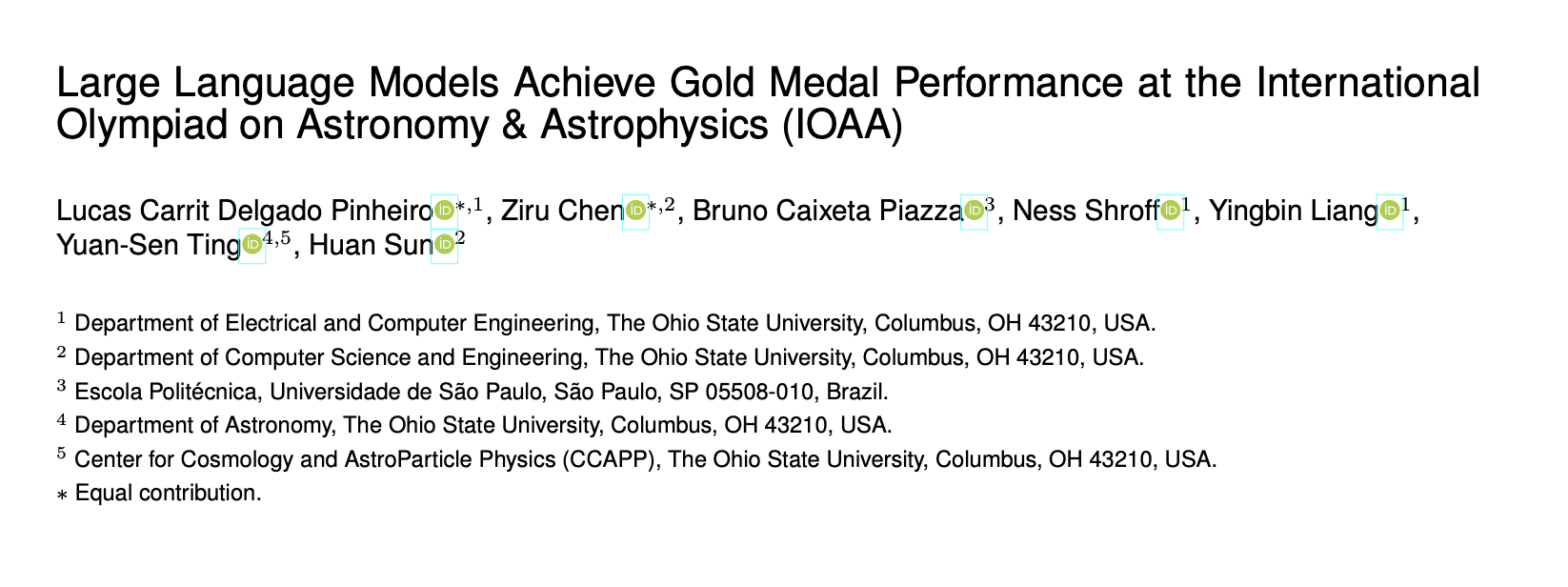

A.I. in Math Olympiads

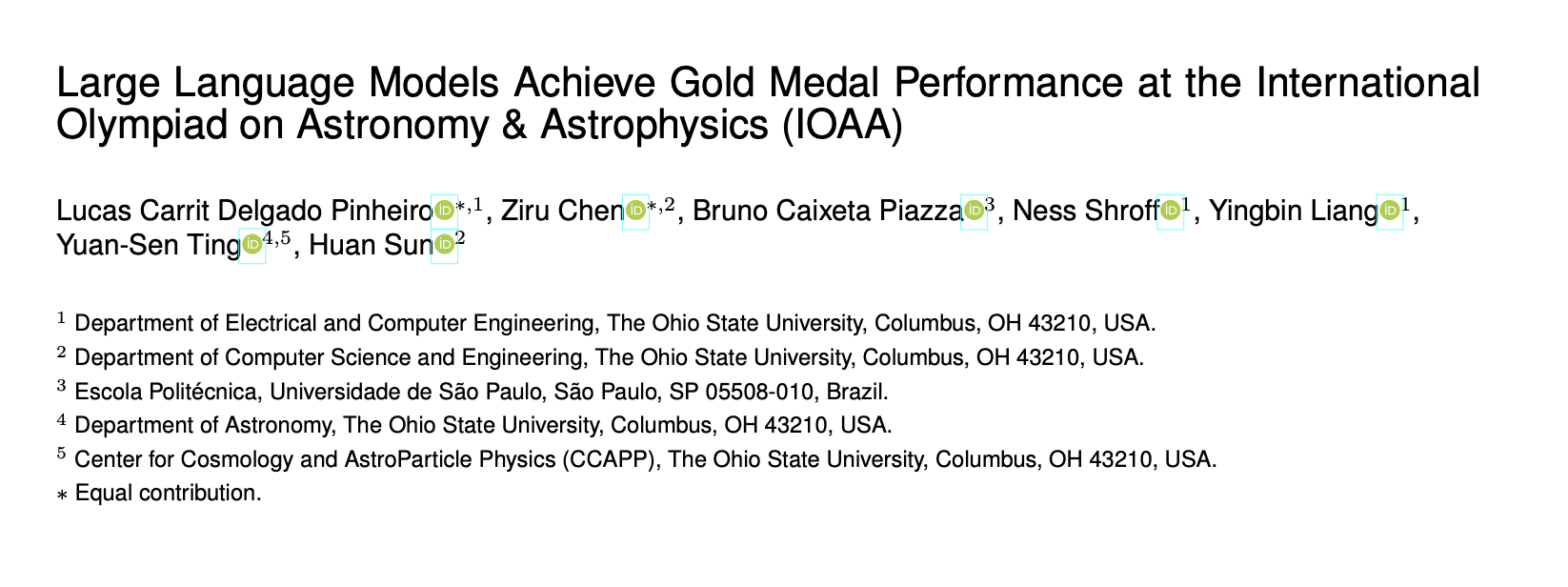

A.I. in Astronomy Olympiads

Pinheiro, ..., YST+, 2025

In open-world exploration, can large language models match human researchers at navigating vast hypothesis spaces?

Sun, YST+, 2024b, 2025

??

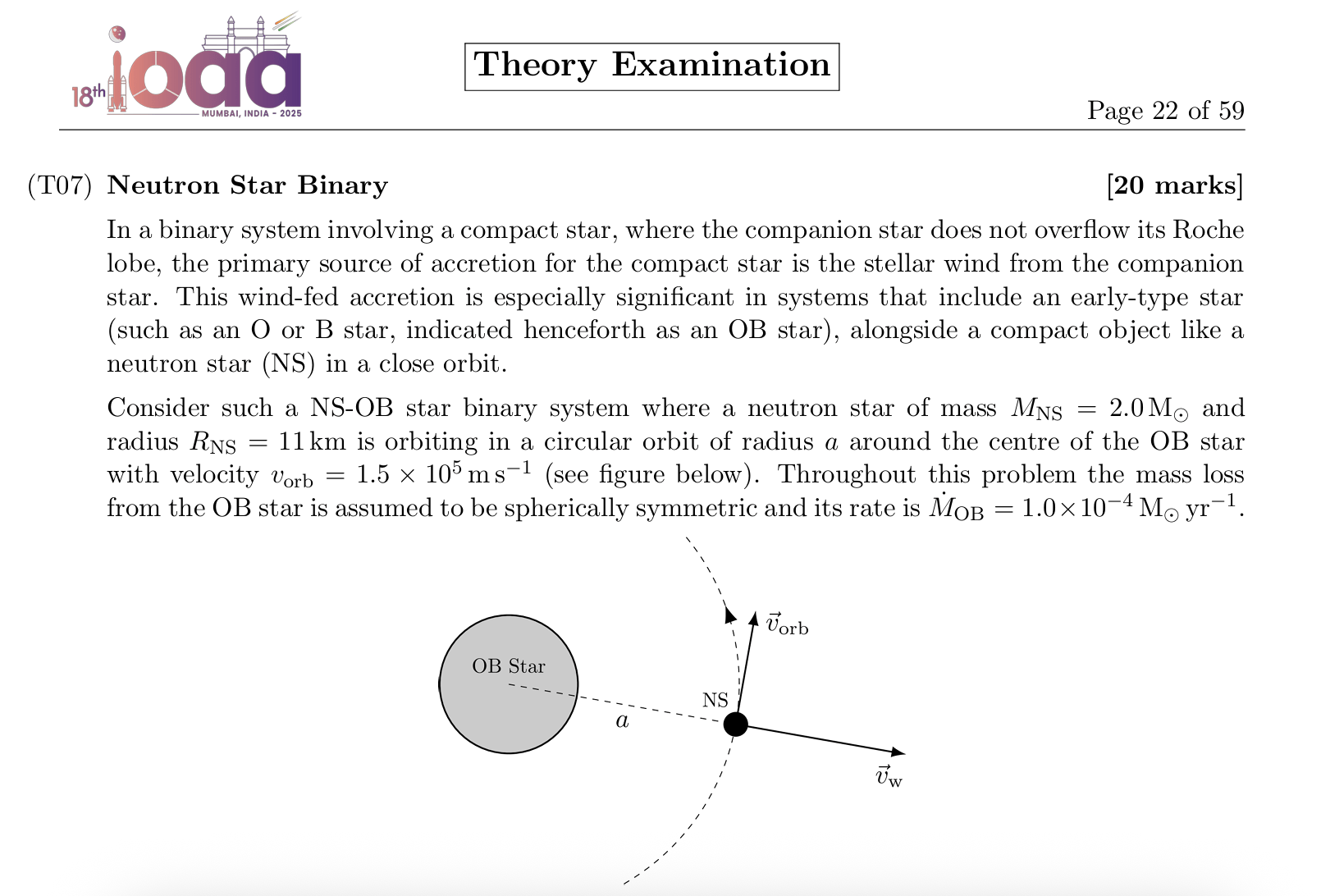

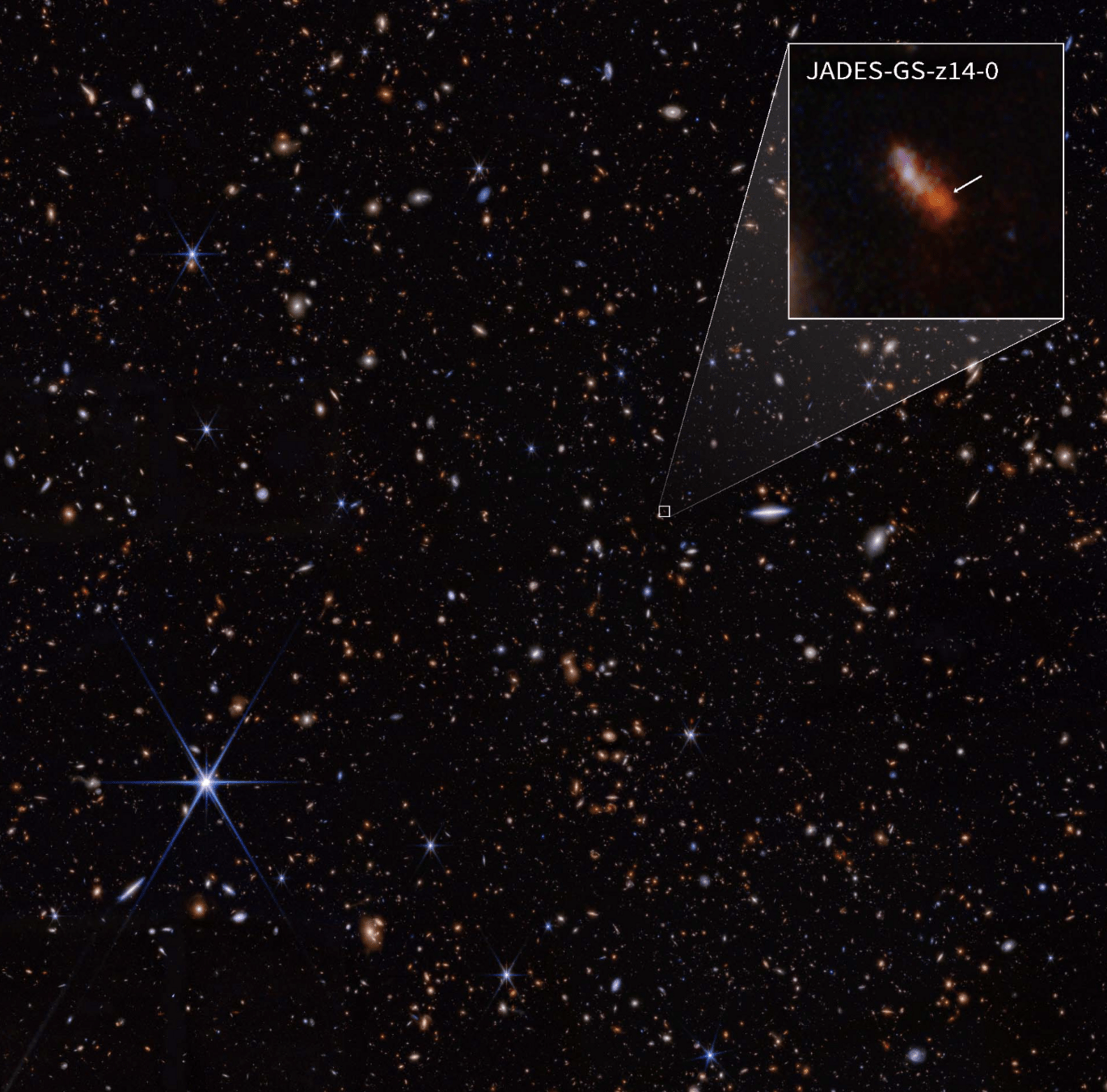

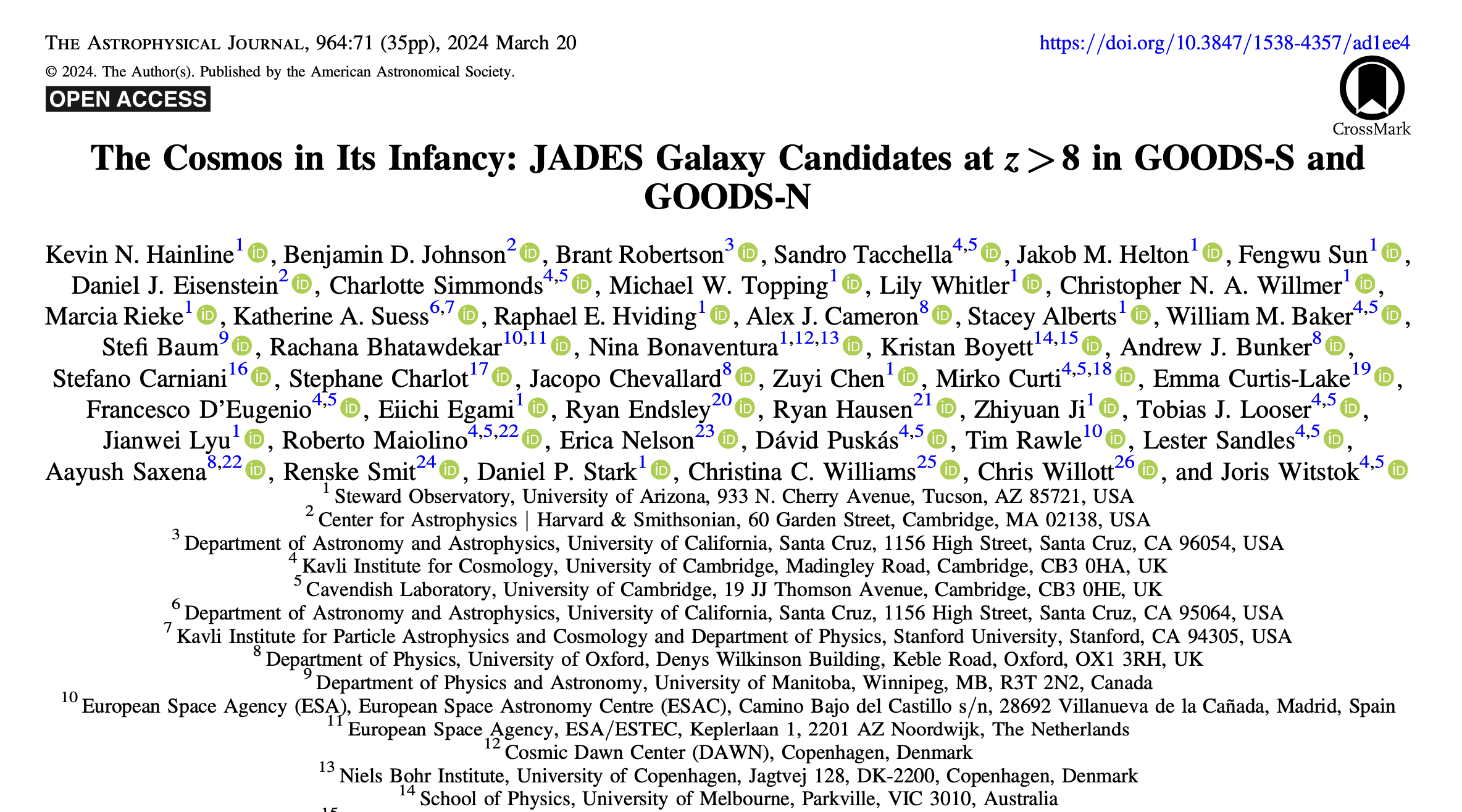

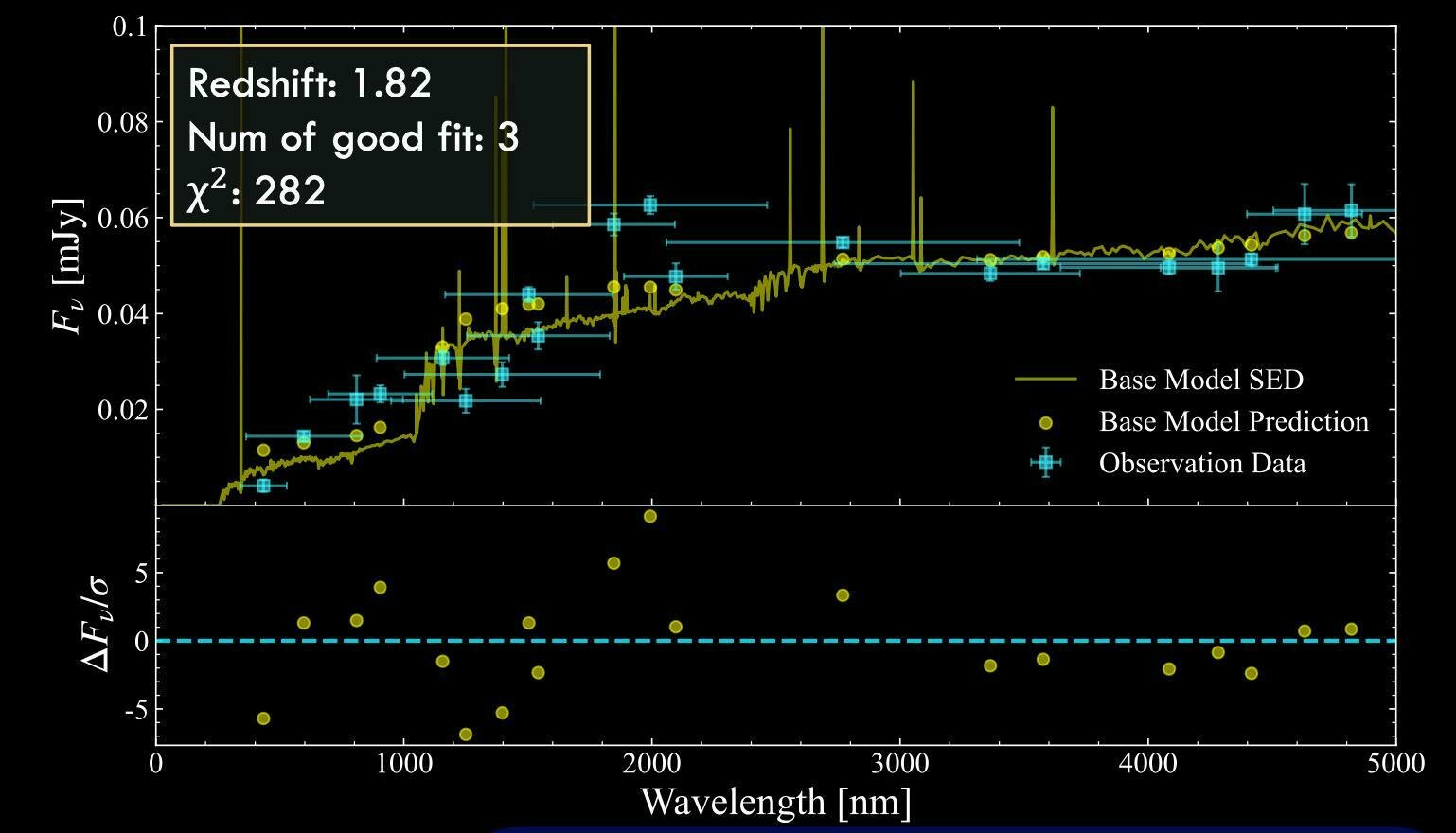

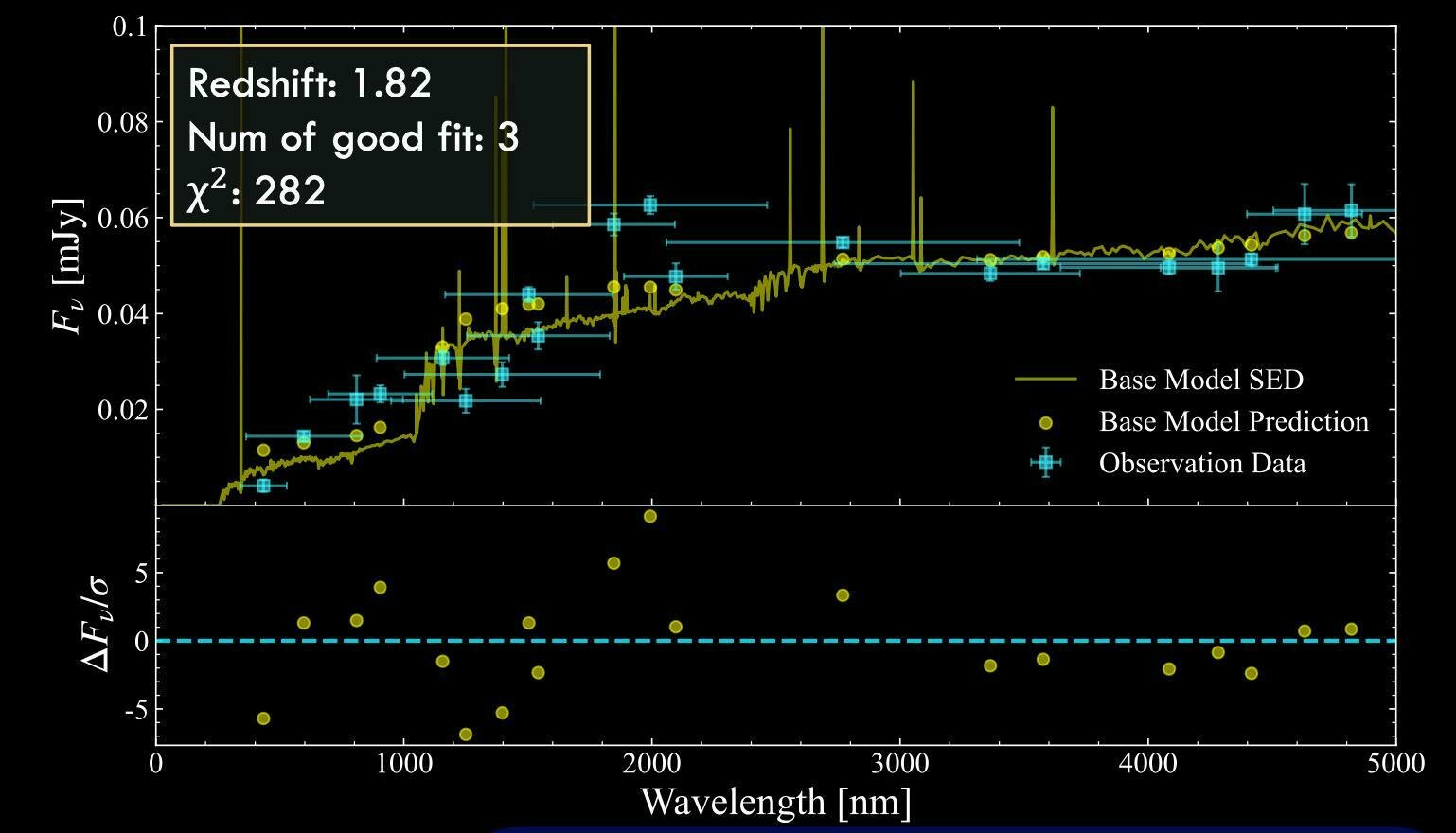

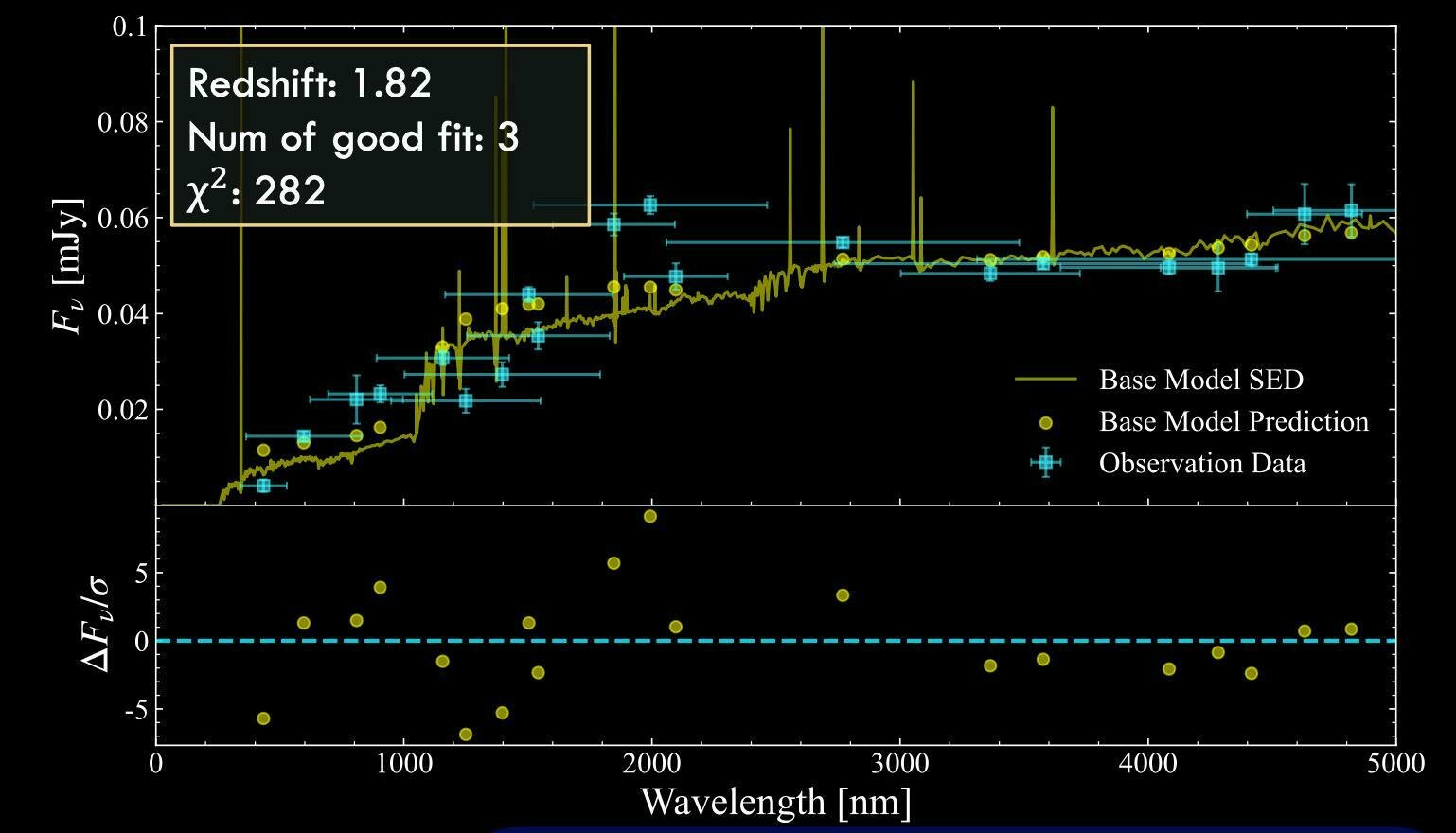

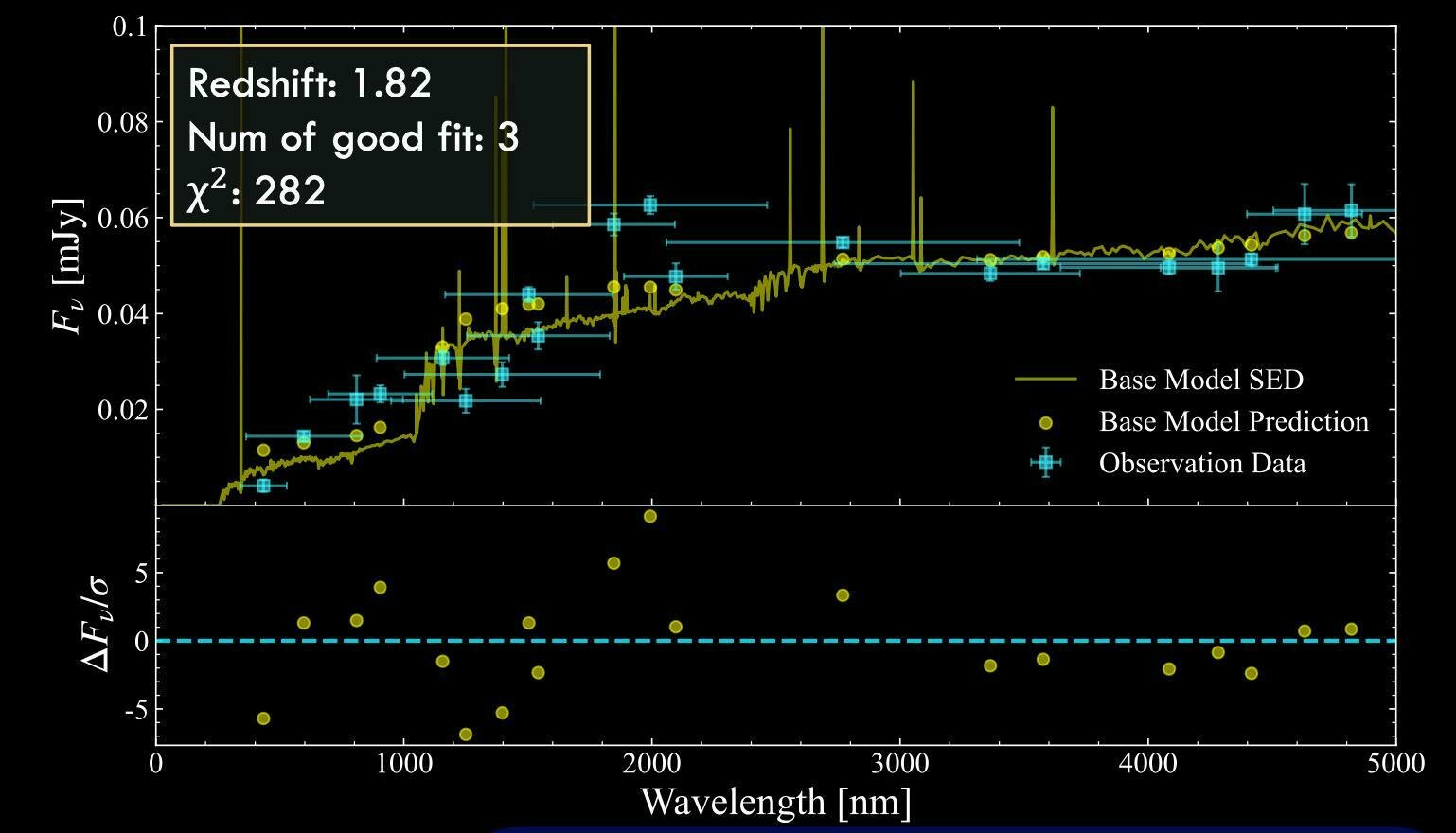

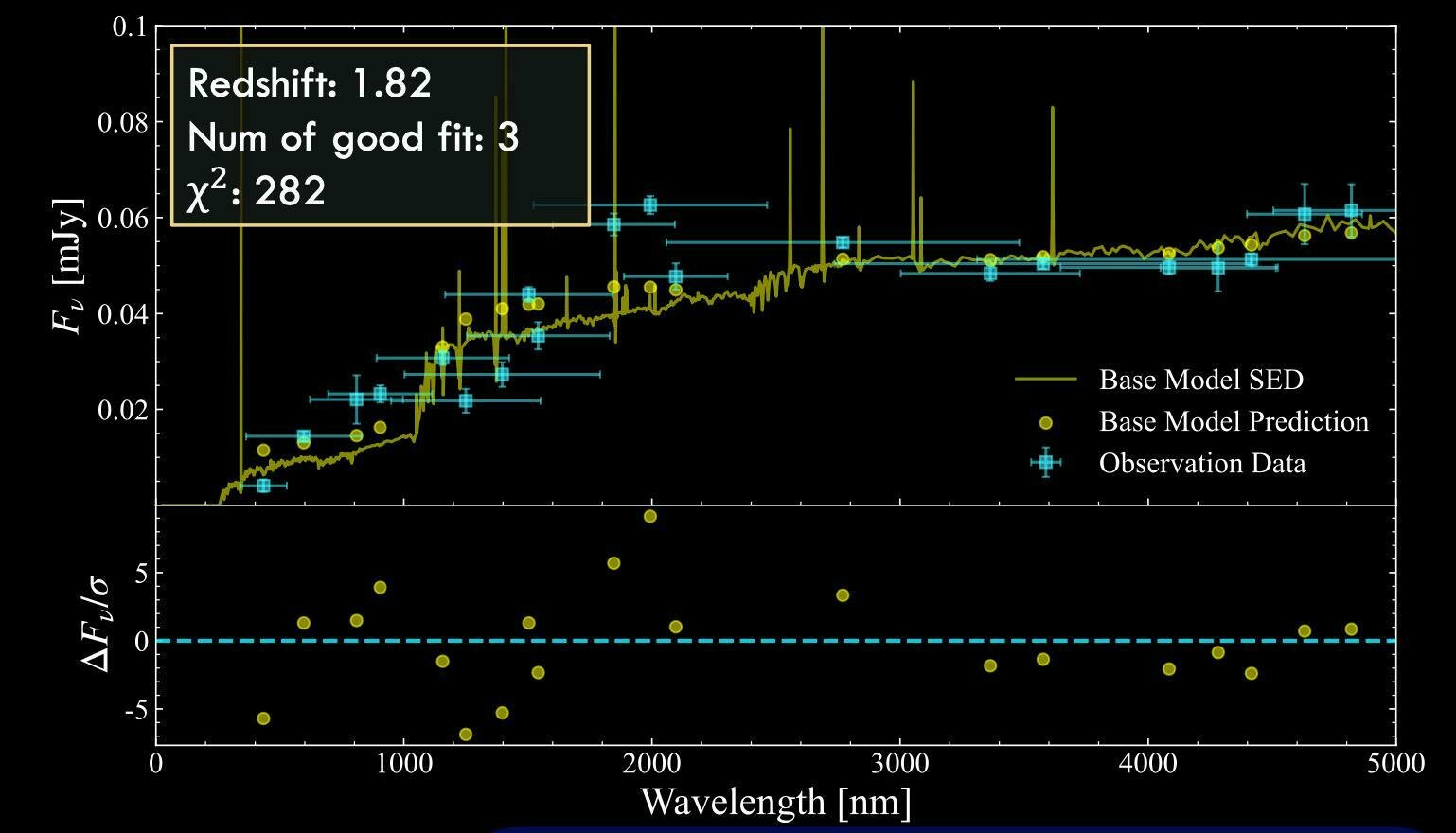

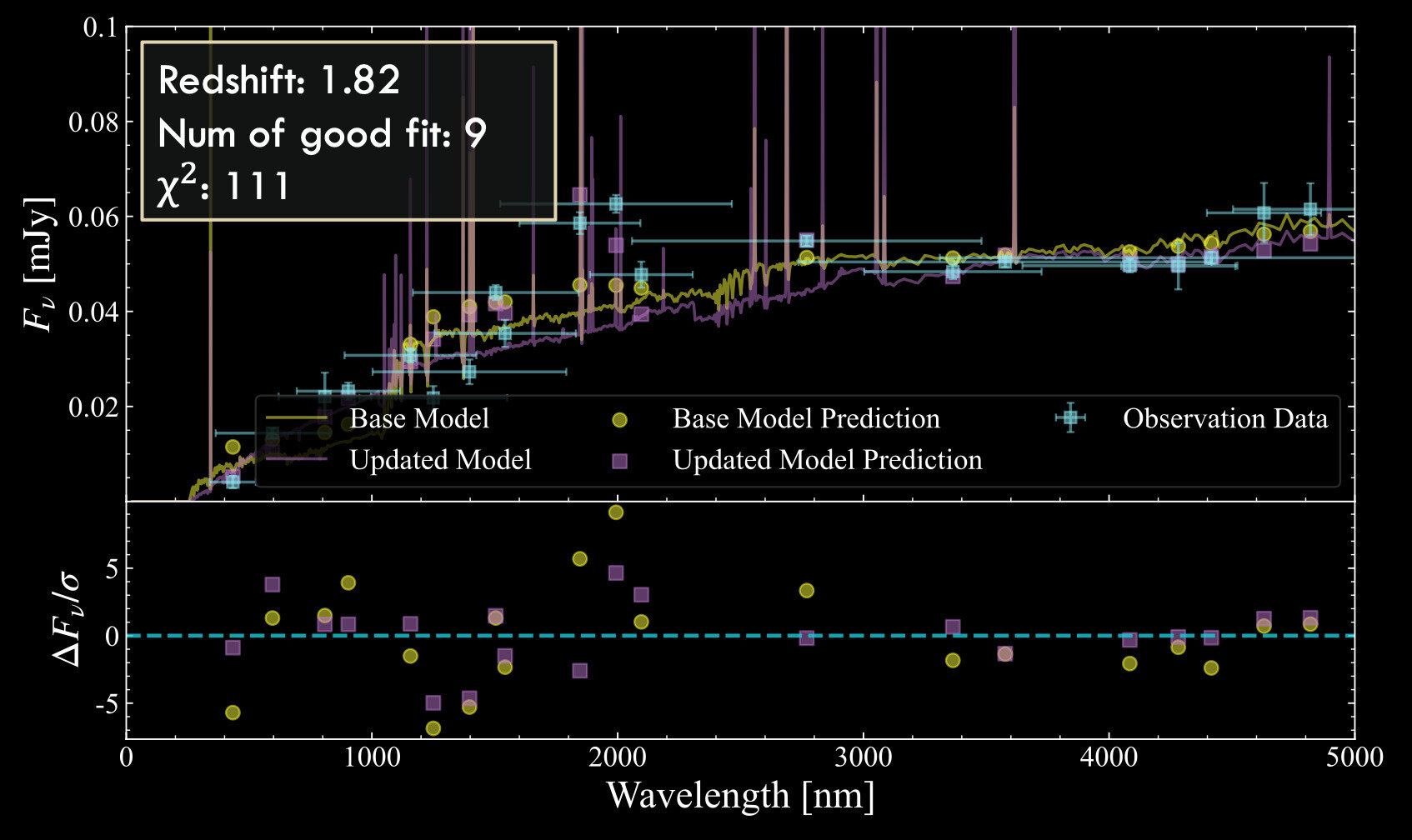

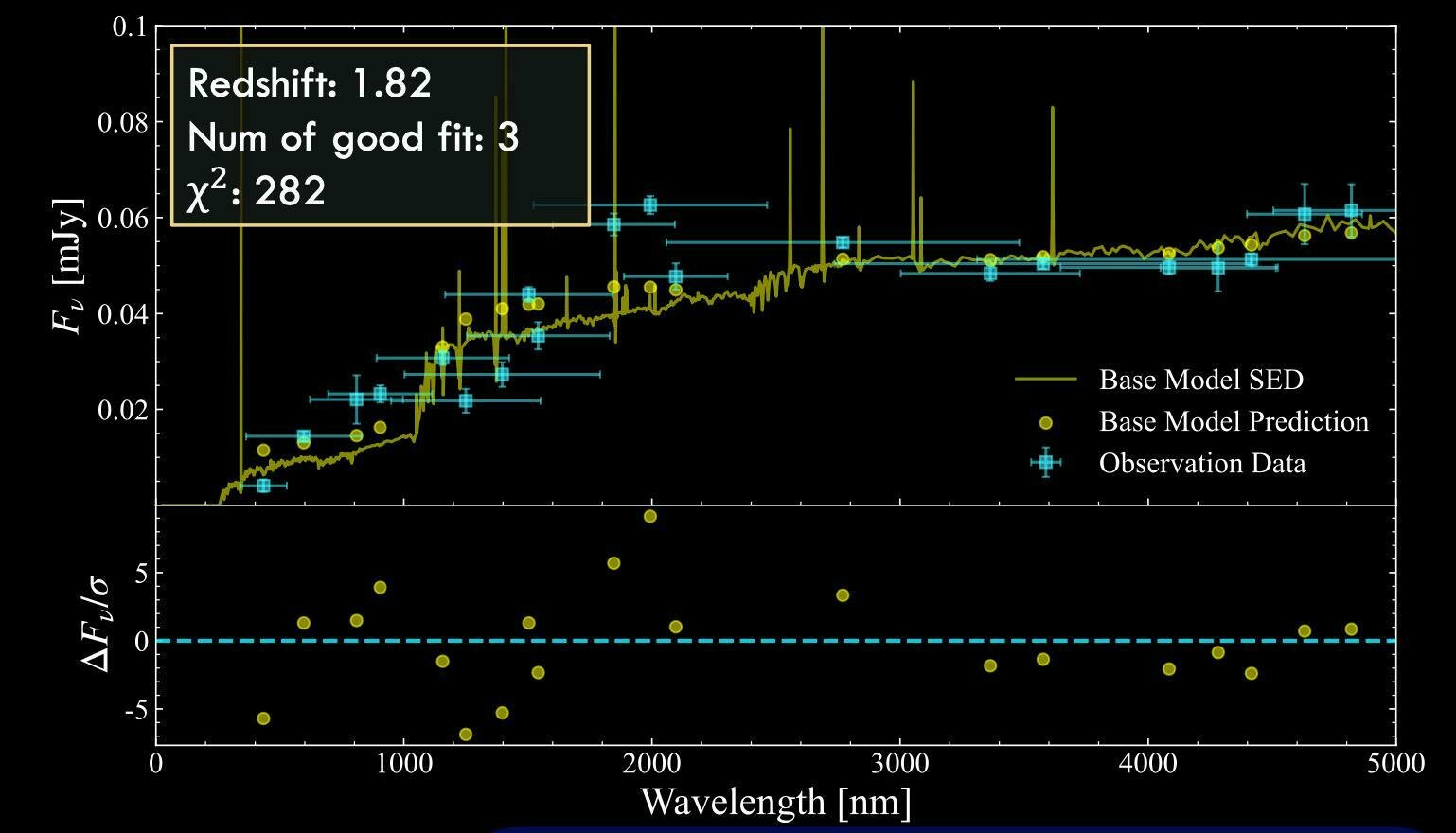

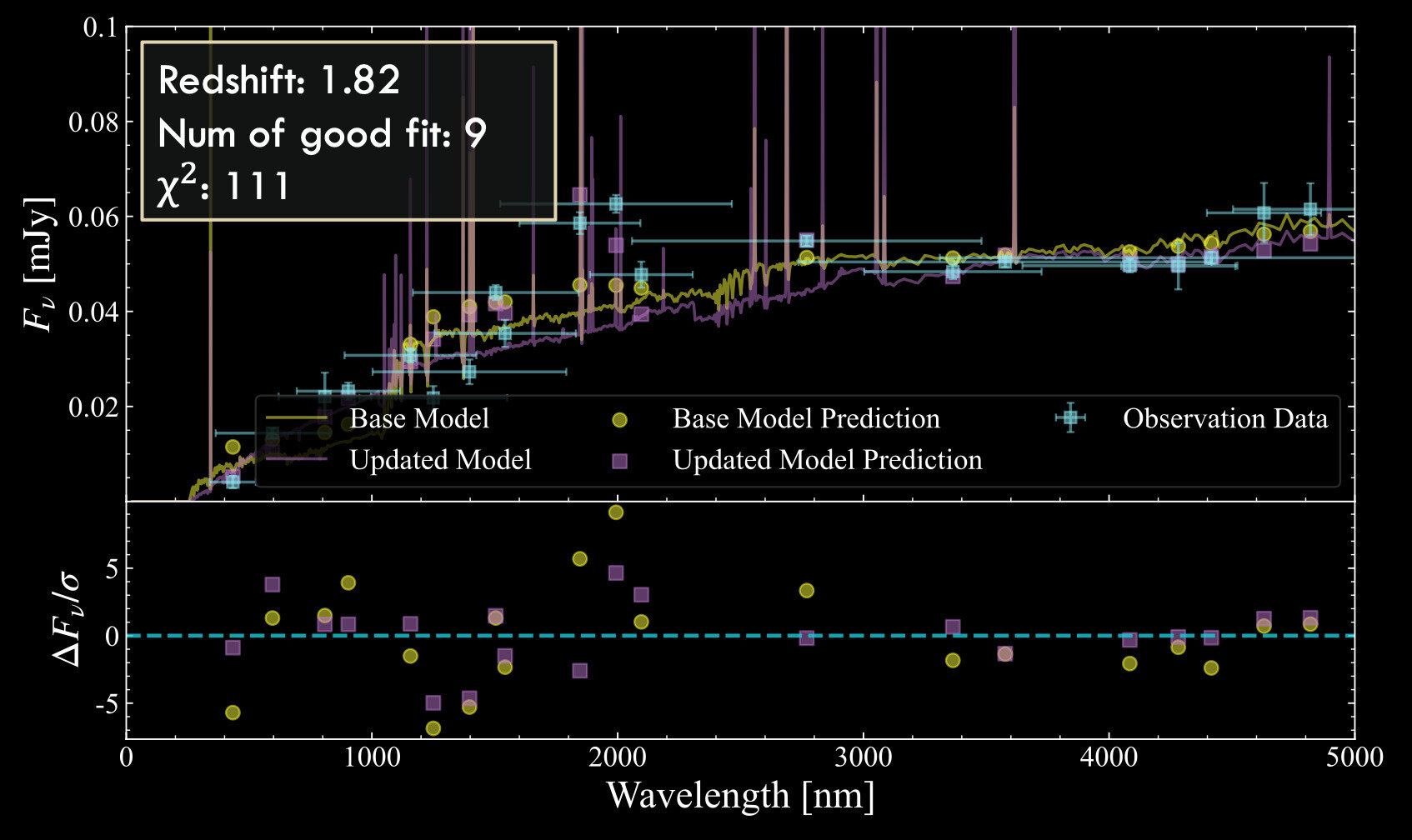

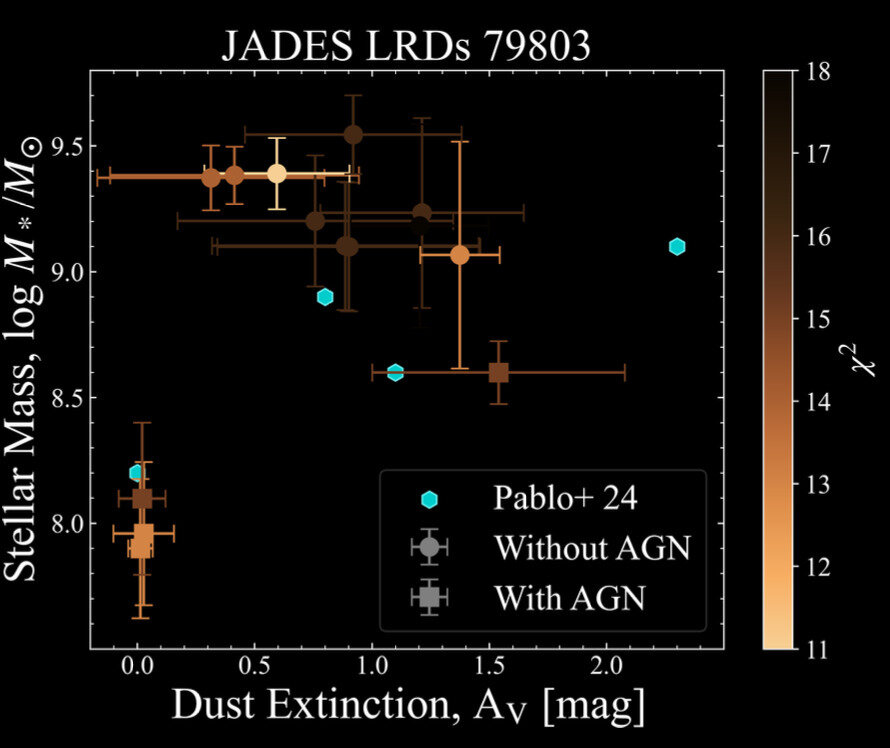

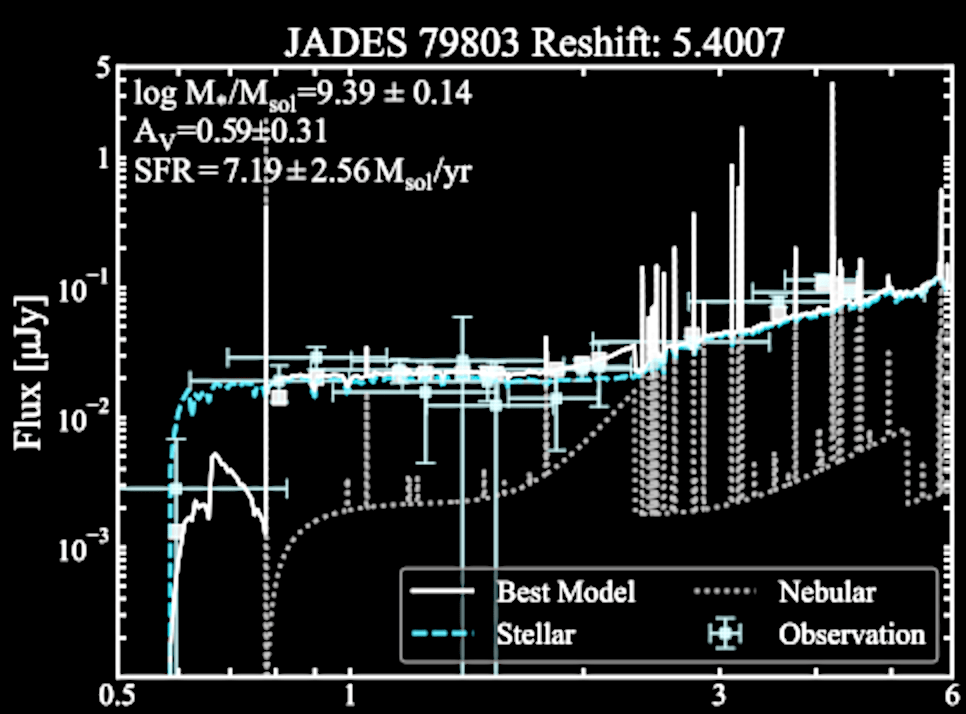

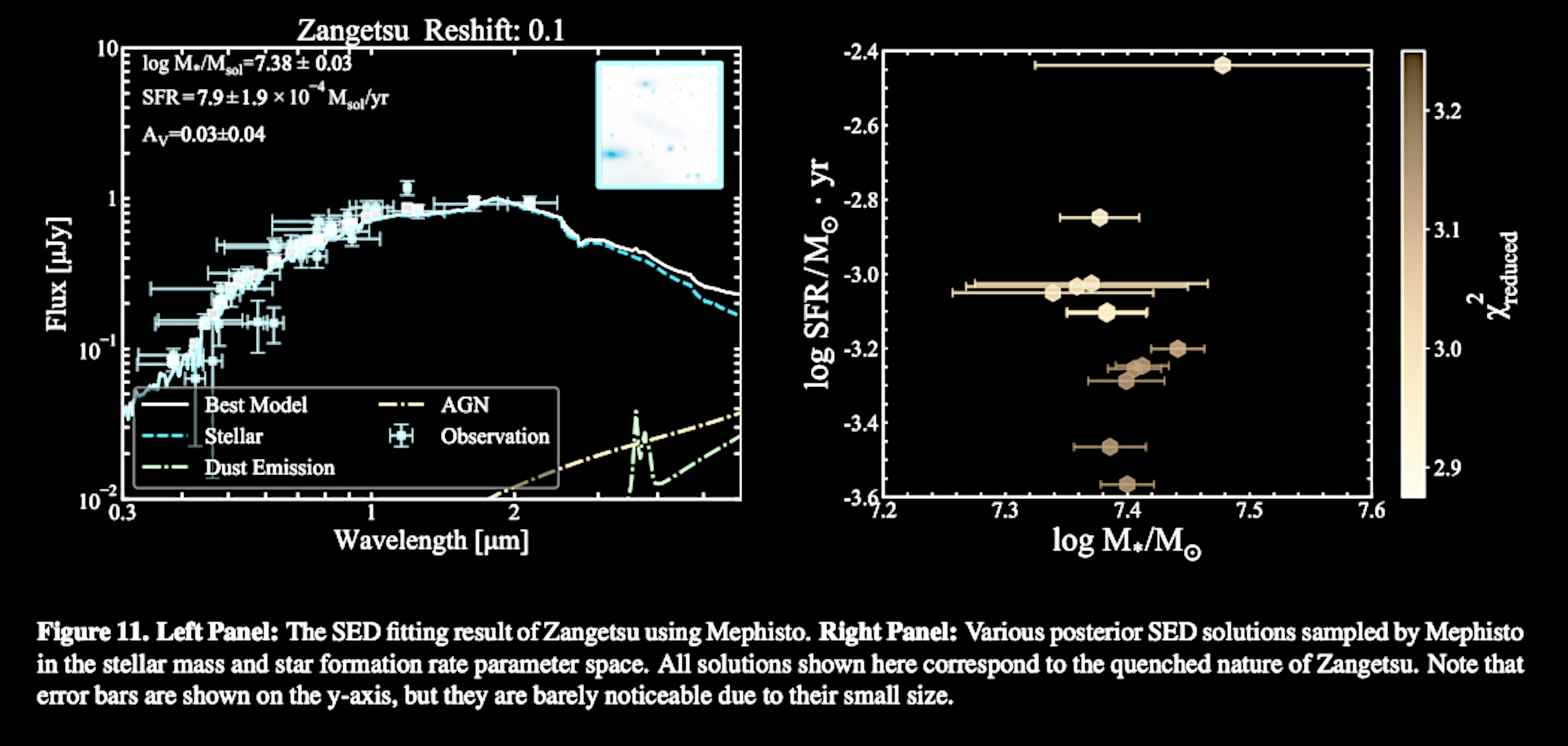

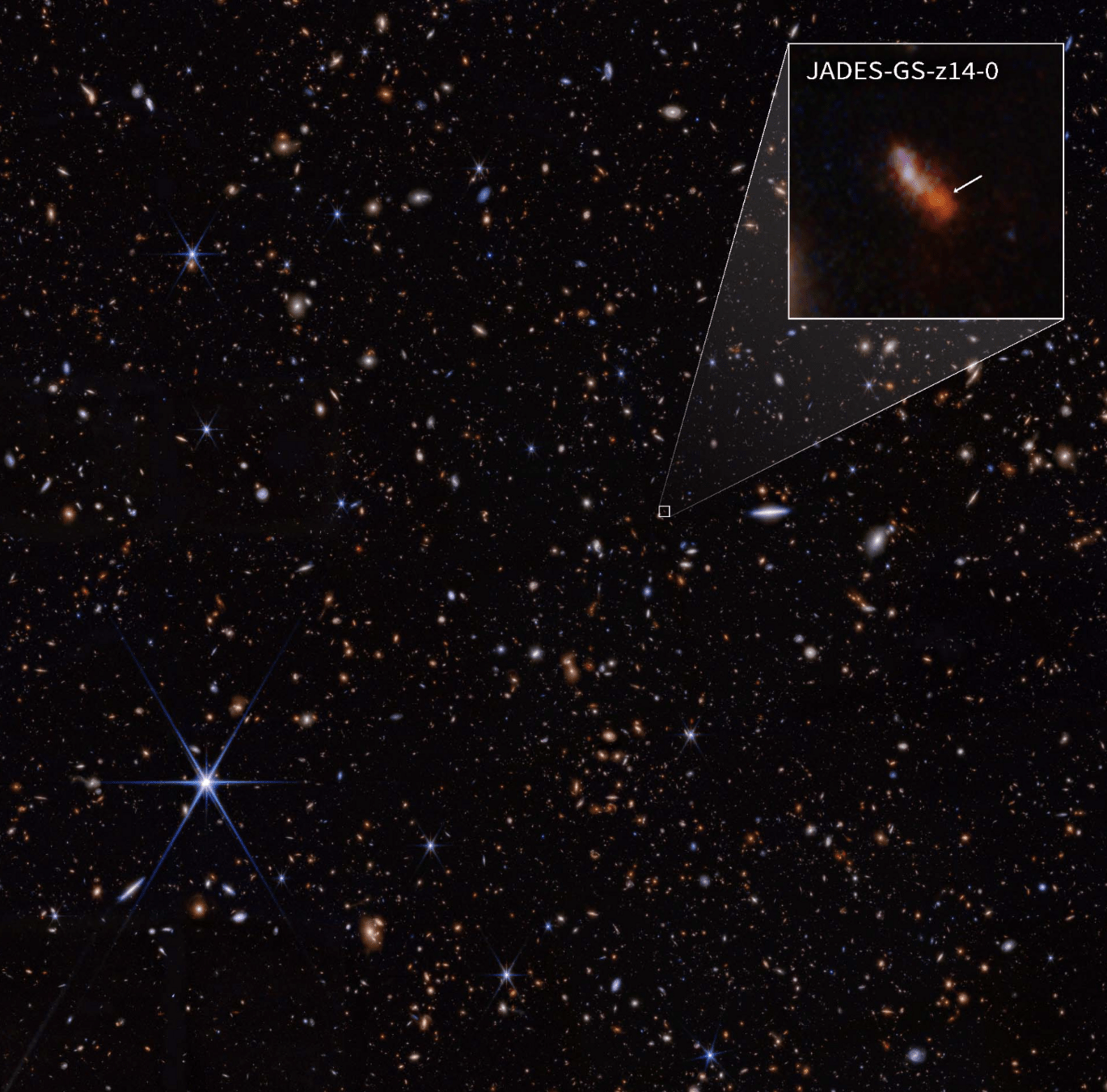

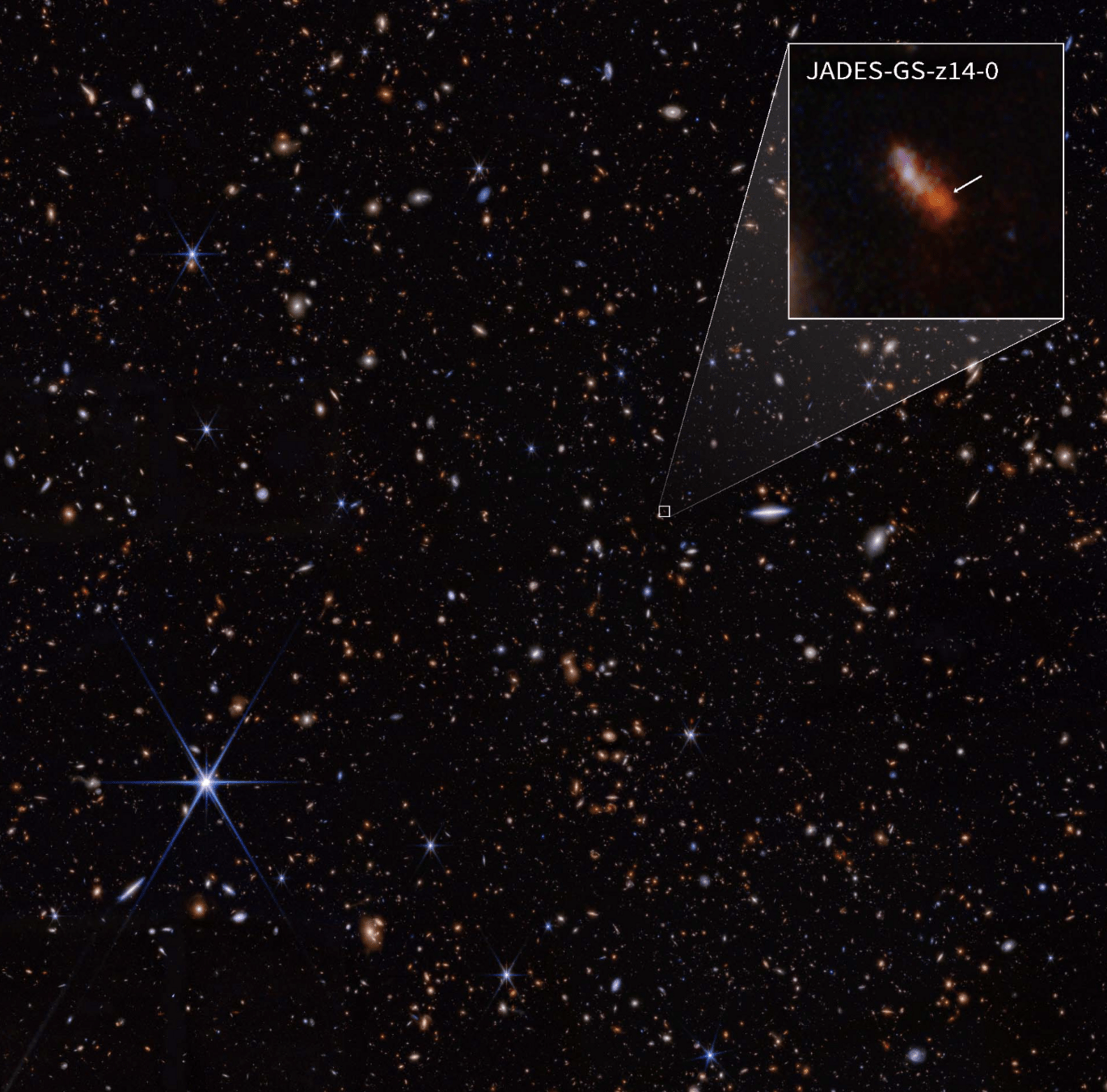

Can A.I. agents understand spectral data (spectral energy distribution) from JWST?

Hypothesis space is vast and beyond mathematical formalism

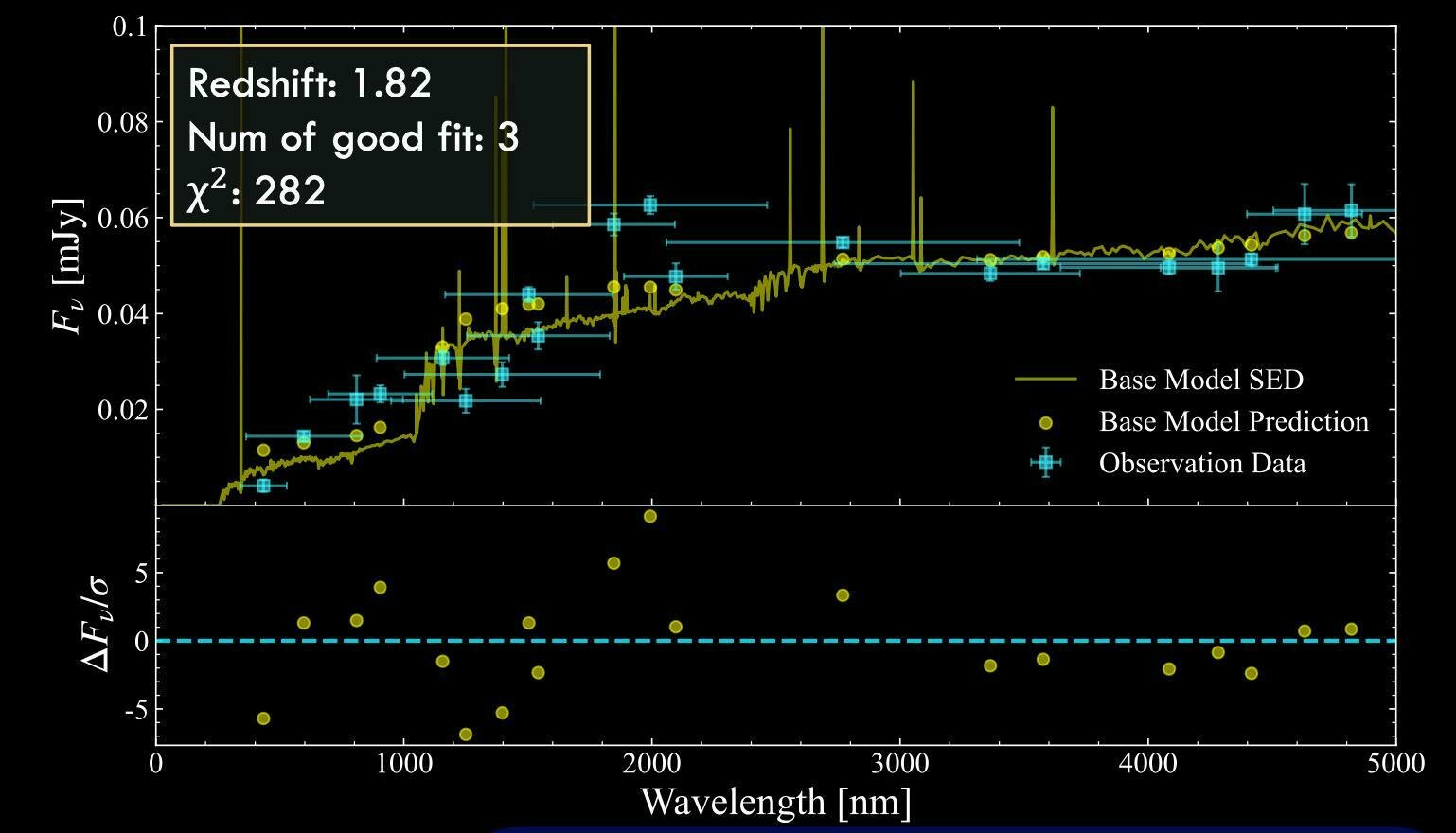

A default fit with an SED model

Extinction model?

Young stellar population?

Many real-world problems aren't simple optimization problems

The objective goes beyond minimizing a single error metric.

Many tasks may require modifying assumptions / physical models, not just optimizing over all parameters

Hypothesis spaces are vast and hard to parameterize.
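As a small illustration of why a single error metric can be incomplete, the sketch below computes two diagnostics for the same fit: the chi-square and the number of photometric bands matched within 1σ. The function name and the toy numbers are assumptions made for illustration; they are not taken from the talk's pipeline.

```python
def fit_diagnostics(observed, model, sigma):
    """Return (chi-square, number of bands fitted within 1 sigma).

    All three inputs are equal-length sequences of fluxes / uncertainties.
    """
    # Normalised residual for each photometric band.
    residuals = [(o - m) / s for o, m, s in zip(observed, model, sigma)]
    chi2 = sum(r * r for r in residuals)
    n_within_1sigma = sum(1 for r in residuals if abs(r) <= 1.0)
    return chi2, n_within_1sigma


# Hypothetical three-band example: one badly missed band dominates chi-square
# even though the other two bands are well fitted.
chi2, n_ok = fit_diagnostics(
    observed=[1.0, 2.0, 3.0],
    model=[1.0, 2.5, 6.0],
    sigma=[1.0, 1.0, 1.0],
)
```

Two fits with similar chi-square can differ in how many bands they reproduce, which is one reason an evaluator might look at both numbers.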

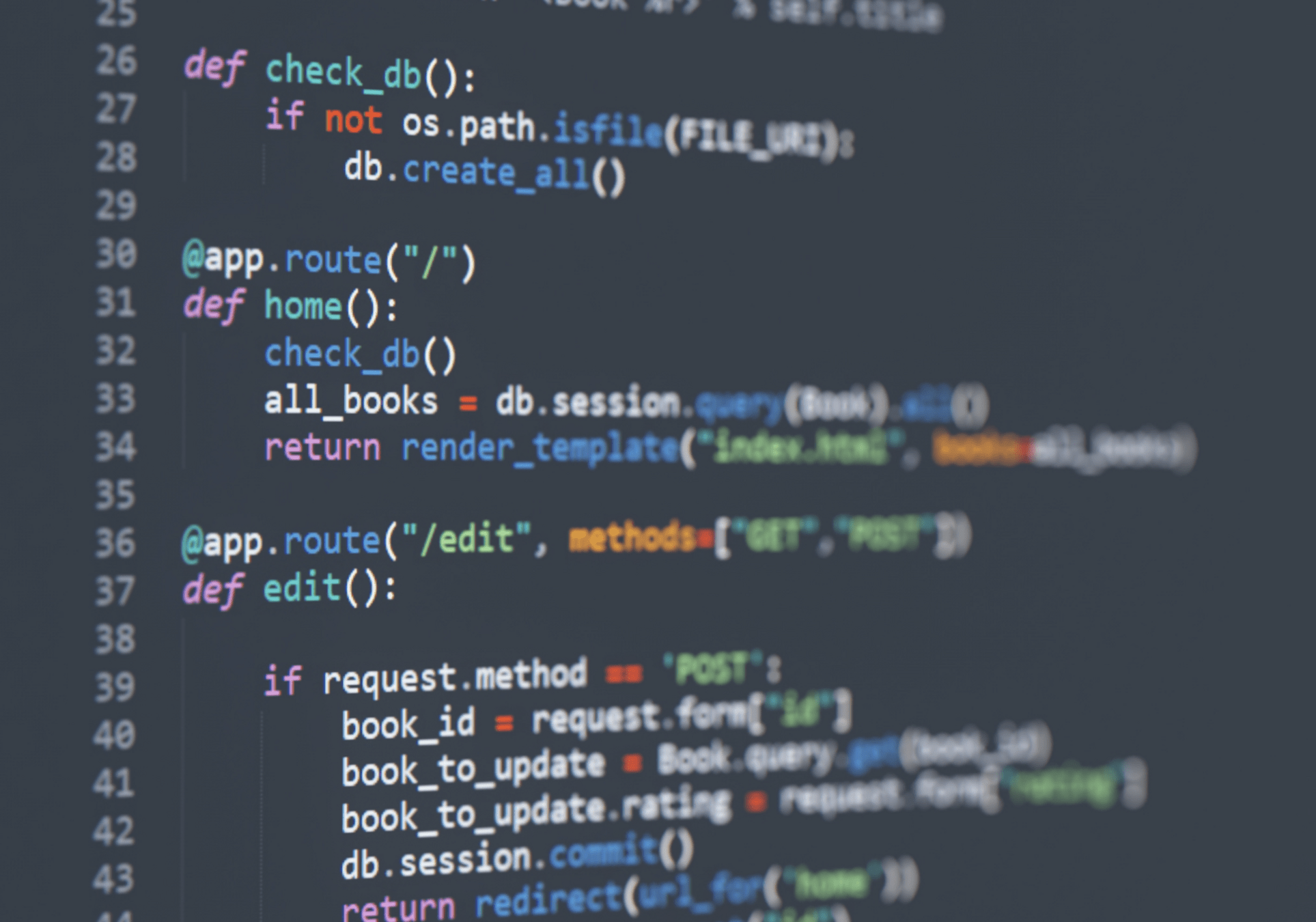

Can a large language model learn from its own experience?

Human "intuition" + experience

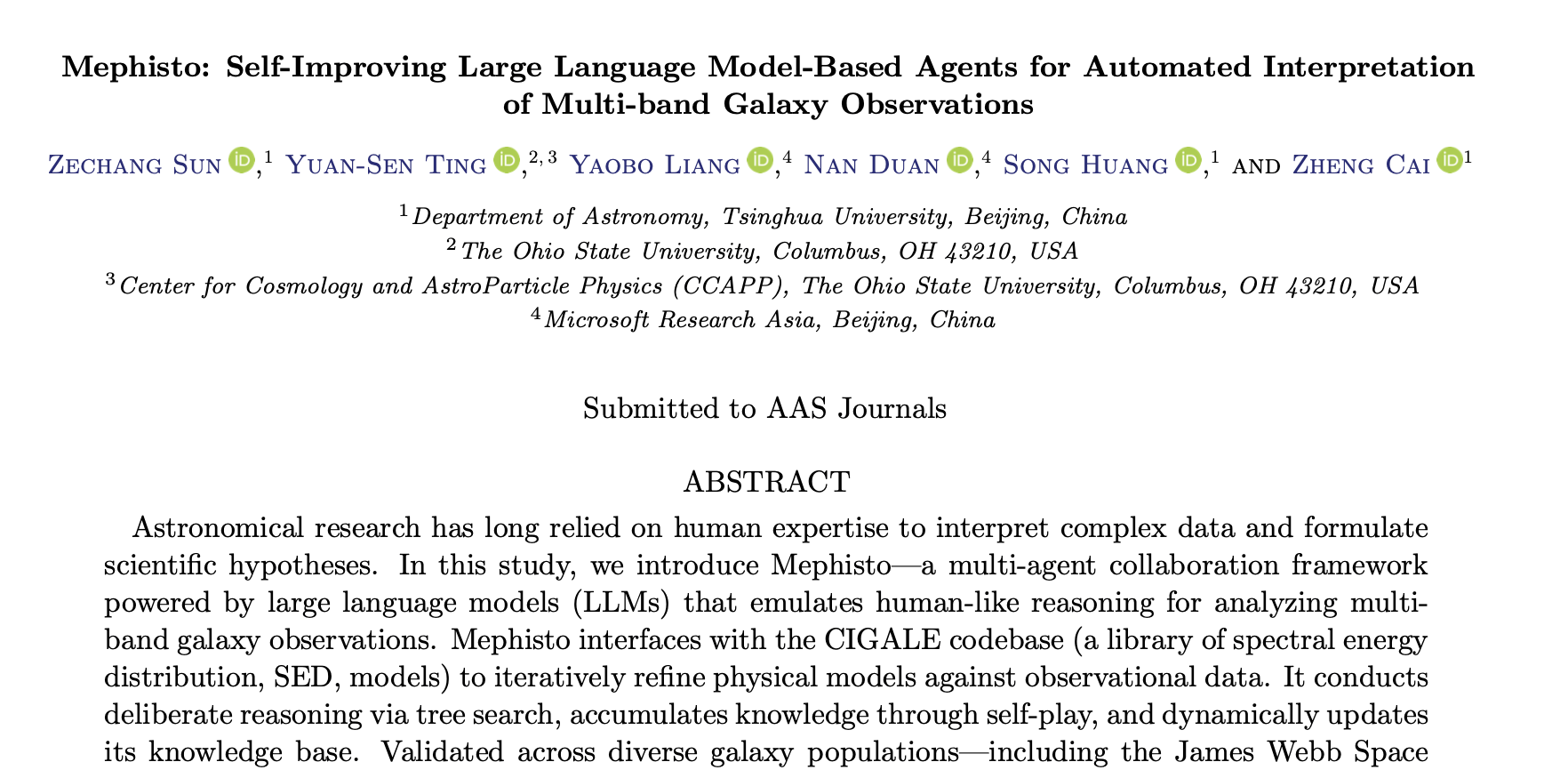

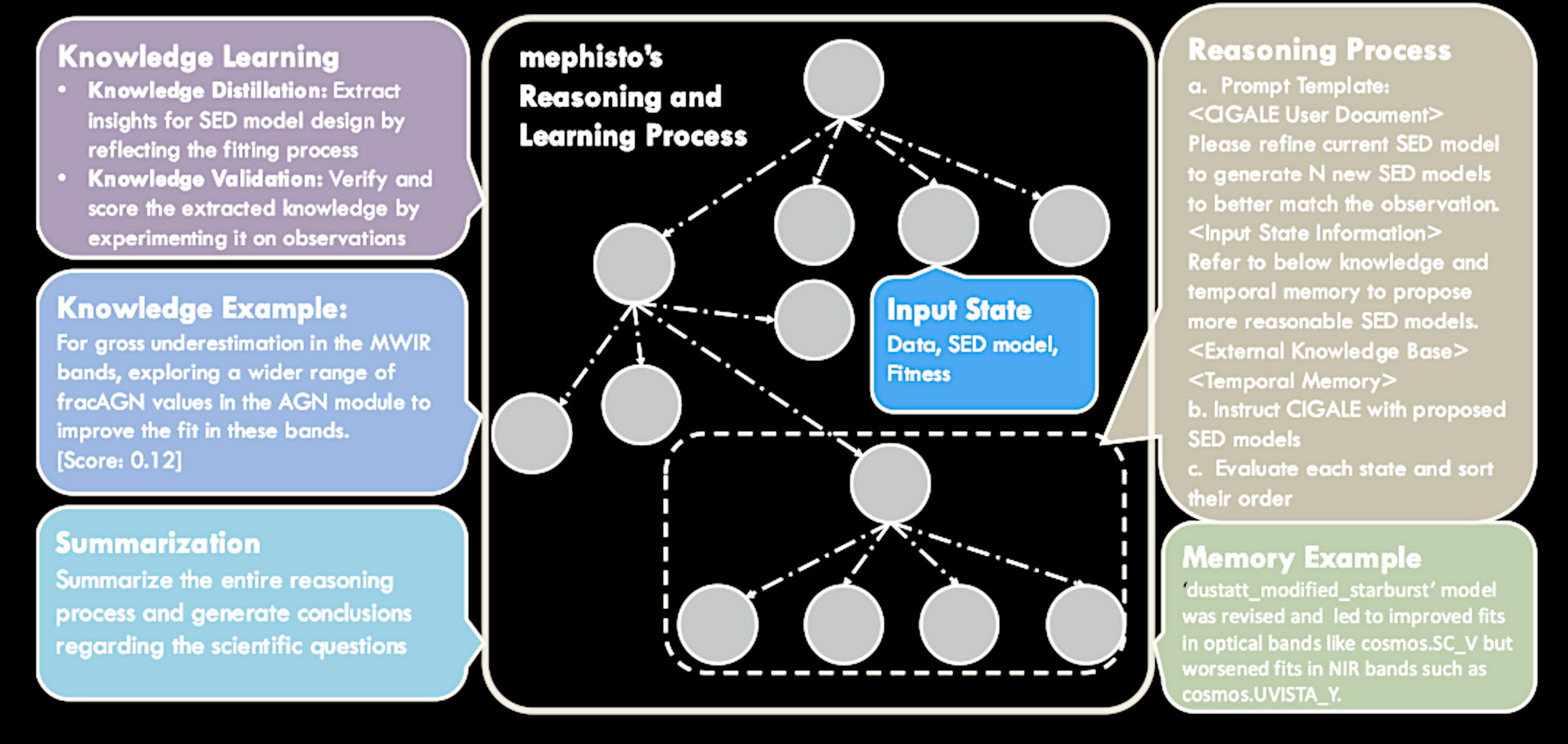

Introducing Mephisto*

* In the classic tale of Faust, Mephisto is a demon who tempts the scholar Faust with knowledge and power in exchange for his soul.

A collaboration of multiple AI agents (LLMs)

Proposing actions

Executing actions

State evaluation

Knowledge distillation

Enabling AI to collect "knowledge" through exploration

Knowledge base

1. Proposing Actions - e.g., different physical models / parameter ranges

2. Executing Actions - write configuration files and run the codes autonomously

3. State Evaluation - evaluate the results (beyond a single error metric)

4. Knowledge Distillation - summarise useful actions given the previous state
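The four-step loop can be sketched in miniature as follows. This is only a toy stand-in and not Mephisto itself: the LLM agents are replaced by simple rules, the "physical model" is a pair of hypothetical parameters, and the knowledge base is a plain dictionary counting which actions helped before. All names (`run_walker`, `propose_actions`, `E_BV`, `age`) are illustrative assumptions.

```python
import random


def chi_square(params, target):
    """Toy goodness of fit: squared distance to hidden 'true' parameters."""
    return sum((params[k] - target[k]) ** 2 for k in target)


def propose_actions(params, knowledge, rng):
    """Step 1: propose parameter changes, trying previously useful ones first."""
    candidates = [(name, delta) for name in params for delta in (-1.0, 1.0)]
    rng.shuffle(candidates)
    # Stable sort: actions with more recorded successes come first.
    return sorted(candidates, key=lambda a: -knowledge.get(a, 0))


def run_walker(target, start, n_iter=30, seed=0):
    rng = random.Random(seed)
    params, knowledge = dict(start), {}
    best = chi_square(params, target)
    history = [best]
    for _ in range(n_iter):
        for name, delta in propose_actions(params, knowledge, rng)[:2]:
            # Step 2: execute the action (here, just perturb one parameter).
            trial = dict(params, **{name: params[name] + delta})
            # Step 3: evaluate the new state.
            score = chi_square(trial, target)
            if score < best:
                # Step 4: distill knowledge by recording that this action helped.
                knowledge[(name, delta)] = knowledge.get((name, delta), 0) + 1
                params, best = trial, score
        history.append(best)
    return params, best, knowledge, history


params, best, knowledge, history = run_walker(
    target={"E_BV": 3.0, "age": -2.0},
    start={"E_BV": 0.0, "age": 0.0},
)
```

In this toy version the knowledge base only reorders the proposals; in the talk's setting the distilled knowledge is natural-language text supplied back to the agents as context.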

Mephisto - deployed as "walkers" in the hypothesis space

Example of learned "knowledge"

"If the fit is overestimated in the UV and optical bands, increasing the E_BV_lines parameter may lead to a better fit by accounting for more dust attenuation in these bands."

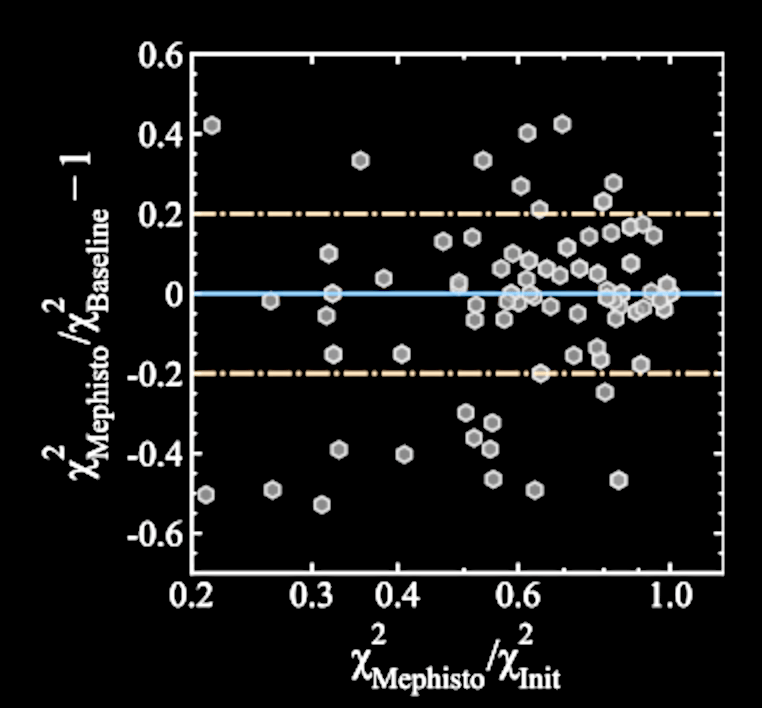

LLMs with self-improvement outperform native LLMs

Fitting JWST JADES data

[Plot: Chi-Square of the Fit (axis 5.1 to 6.4) vs. Number of Learning Iterations (0 to 30), comparing Mephisto with the GPT-4o baseline ("think without knowledge")]

Sun, YST+, 2024b

Sun, YST+, 2025

Mephisto operates as walkers exploring the "hypothesis space"

With COSMOS2020 SEDs

Mephisto finds better solutions using only 1% of the trials that brute force methods require

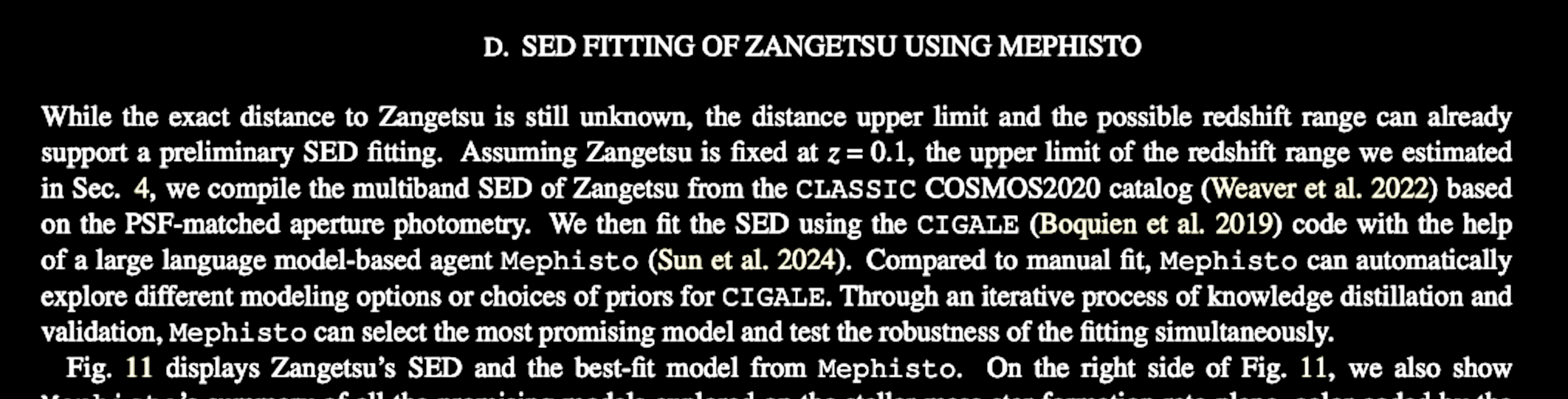

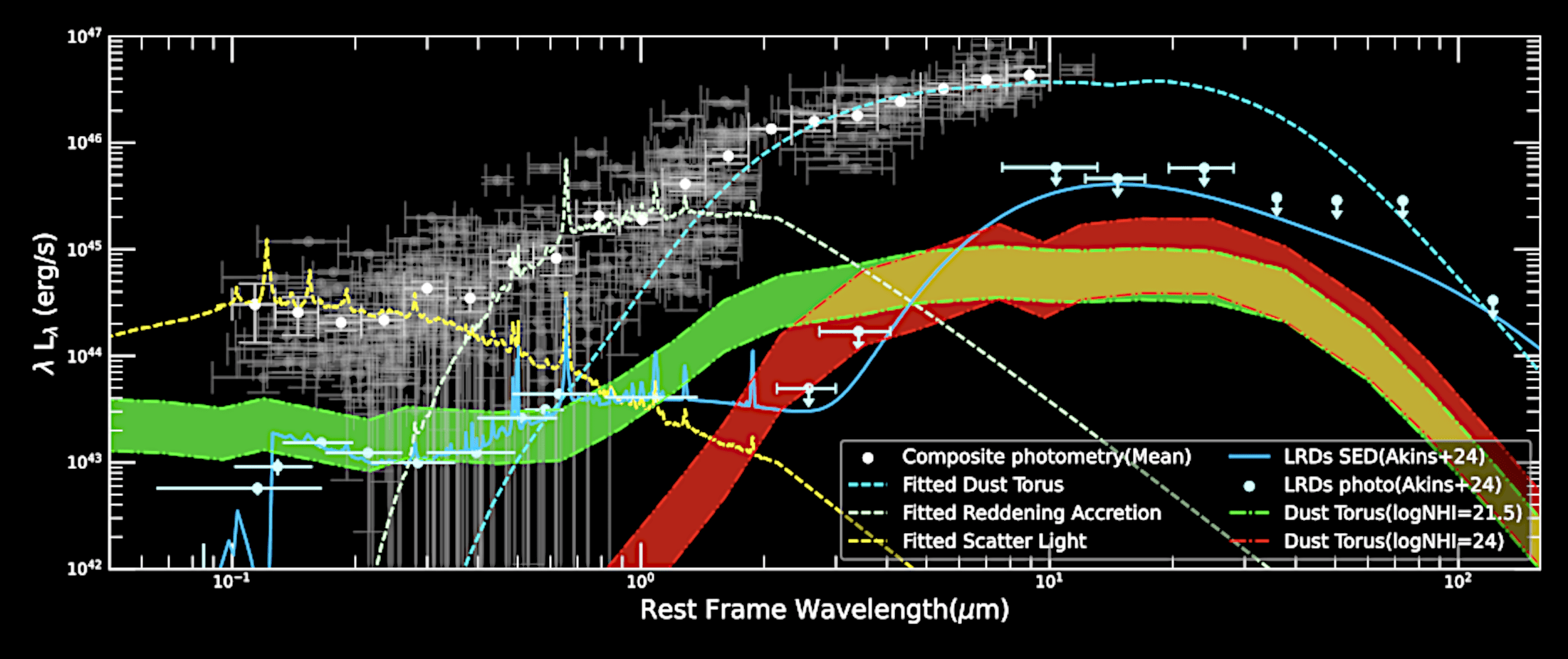

Explaining James Webb's "little red dot" galaxies with Mephisto

Wavelength [micron]

Flux

Sun, YST+, 2025

A seamless and interpretable AI-human collaboration

Learn from the data

Summarize "knowledge"

Examine and include prior knowledge

A seamless and interpretable AI-human collaboration

Expedite discovery

Use the learned knowledge as context

https://tingyuansen.github.io/NASA_AI_ML_STIG

Next Monday (4pm ET)

Graduate student

The Plot Twist

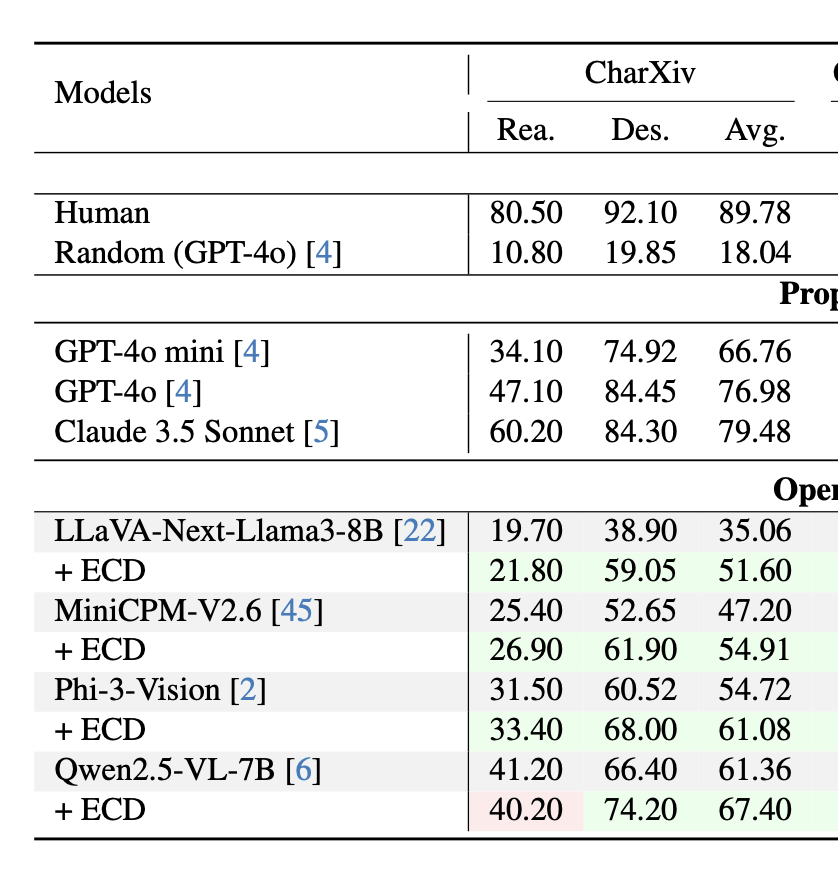

A.I. still struggles with many tasks that are easy for humans

Princeton Language and Intelligence Lab, June 2024

Human accuracy: ~80%

GPT-4o: ~47%

Can A.I. reason about scientific charts?

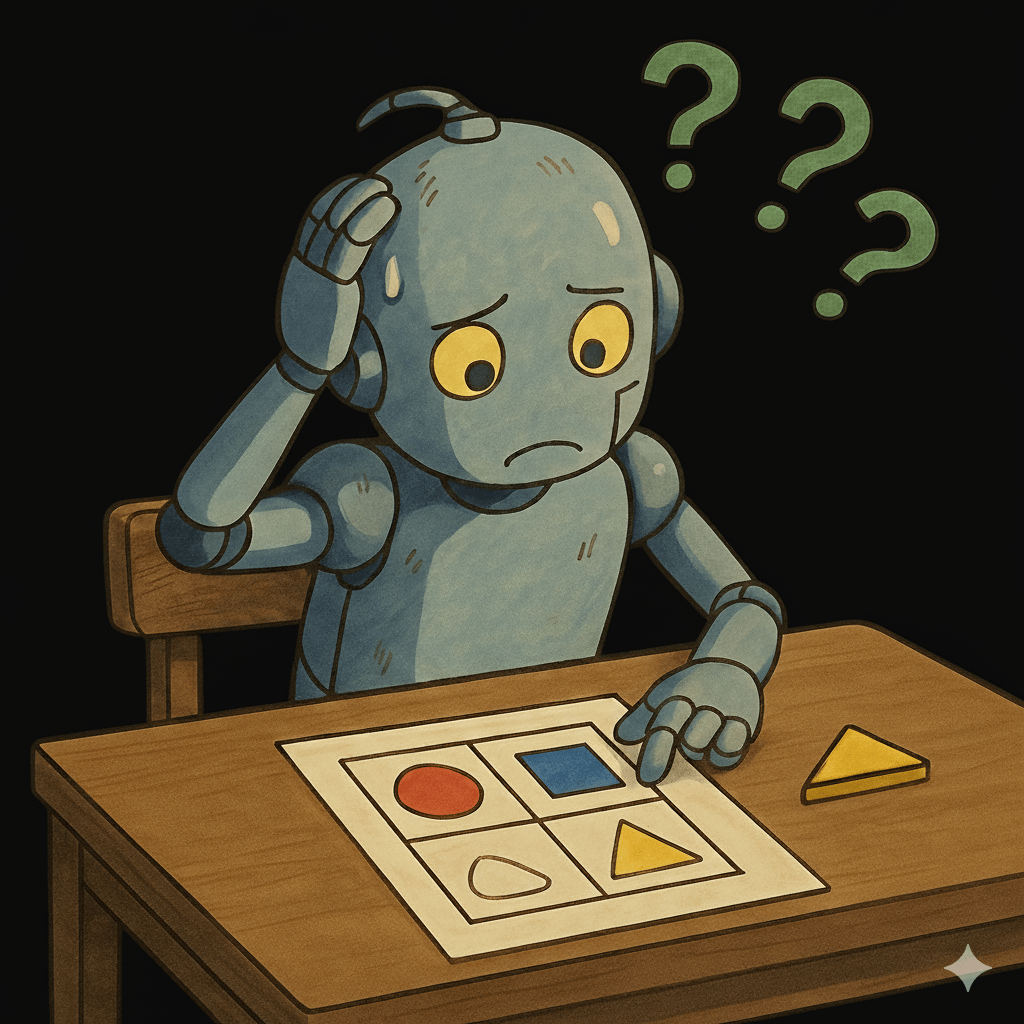

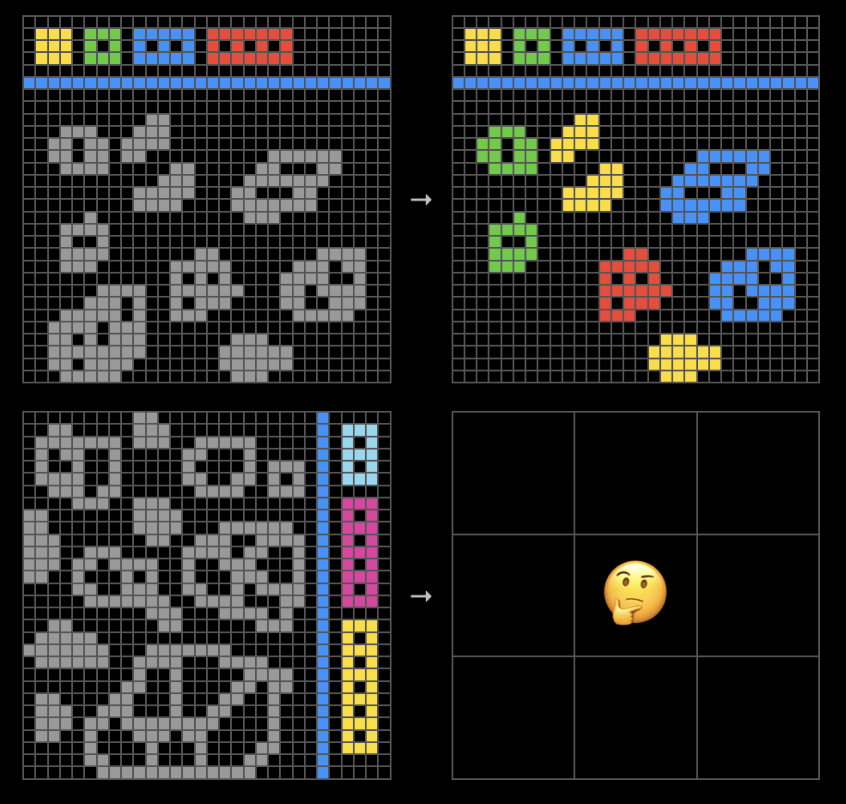

ARC Prize Foundation (ARC-AGI-2, 2025)

Spatial Pattern Reasoning

Human Panel : ~ 100%

GPT-5 : ~10%

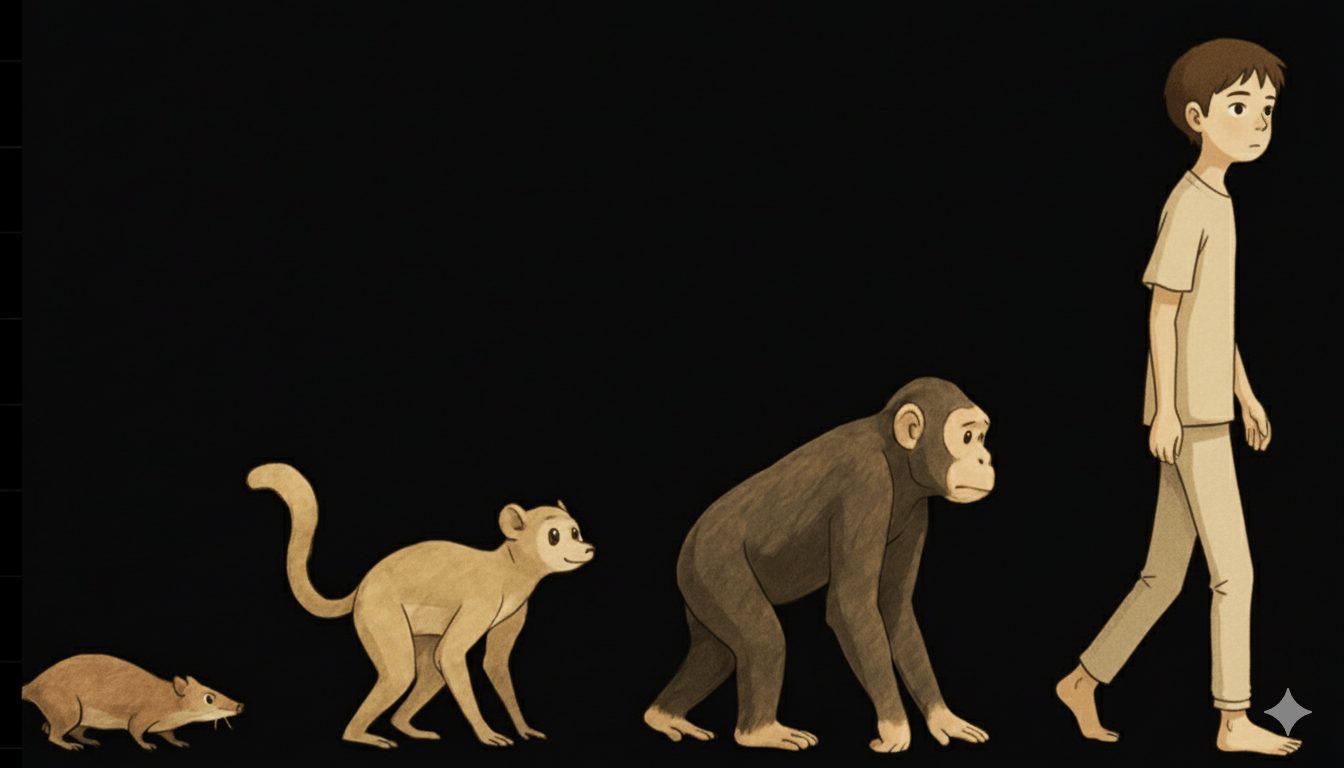

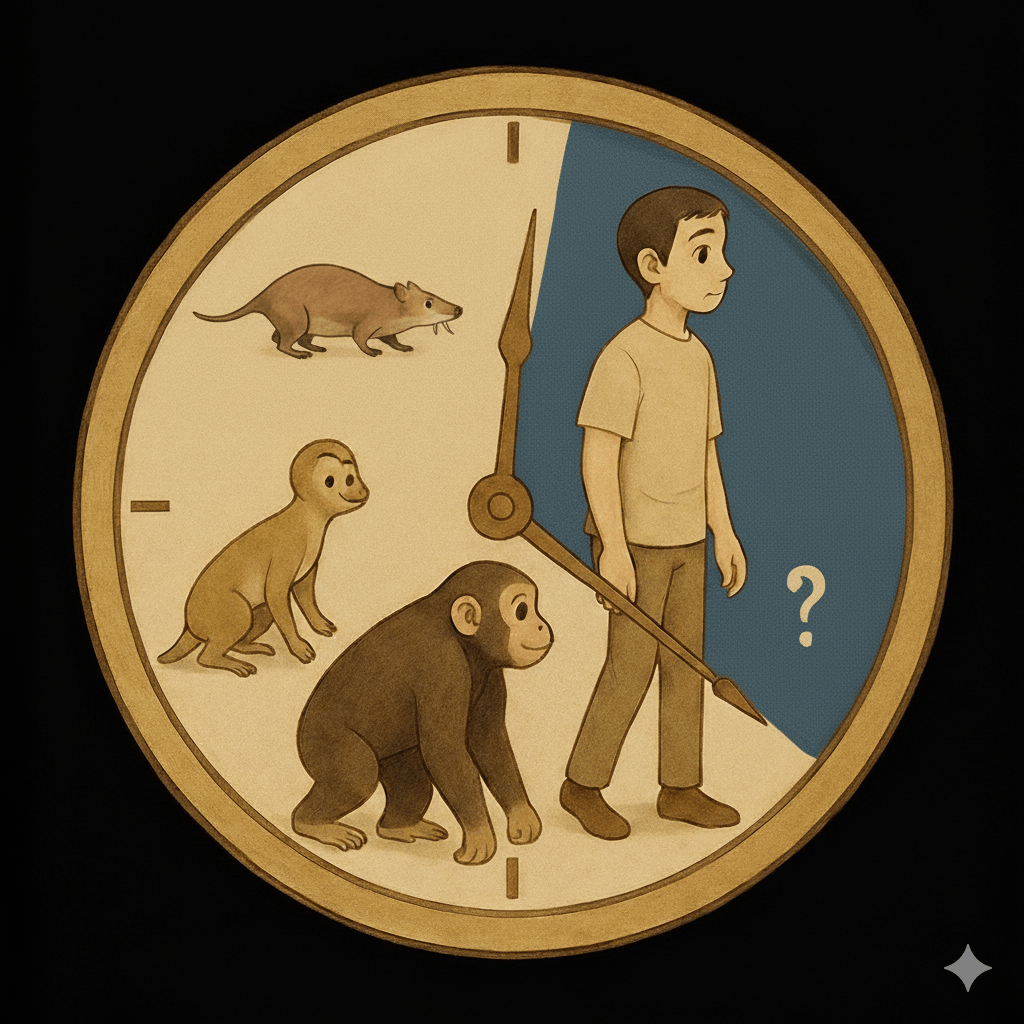

Moravec's Paradox (1988)

- High-level reasoning is easy for AI; basic sensory-motor skills are hard

Reversing the evolution of "intelligence"

Evolution Timeline: What came first vs. last

Conversational and logical abilities are the easiest to imitate

Easy-for-AI

Complex calculations

Easy-for-Human

Logical inference (?)

Memorizing information

Language

Coding

Spatial reasoning

Common sense physics

(water flows downhill)

Basic motor skills

Visual reasoning

Understanding context

A.I. in Astronomy Olympiads

Pinheiro, ..., YST+, 2025

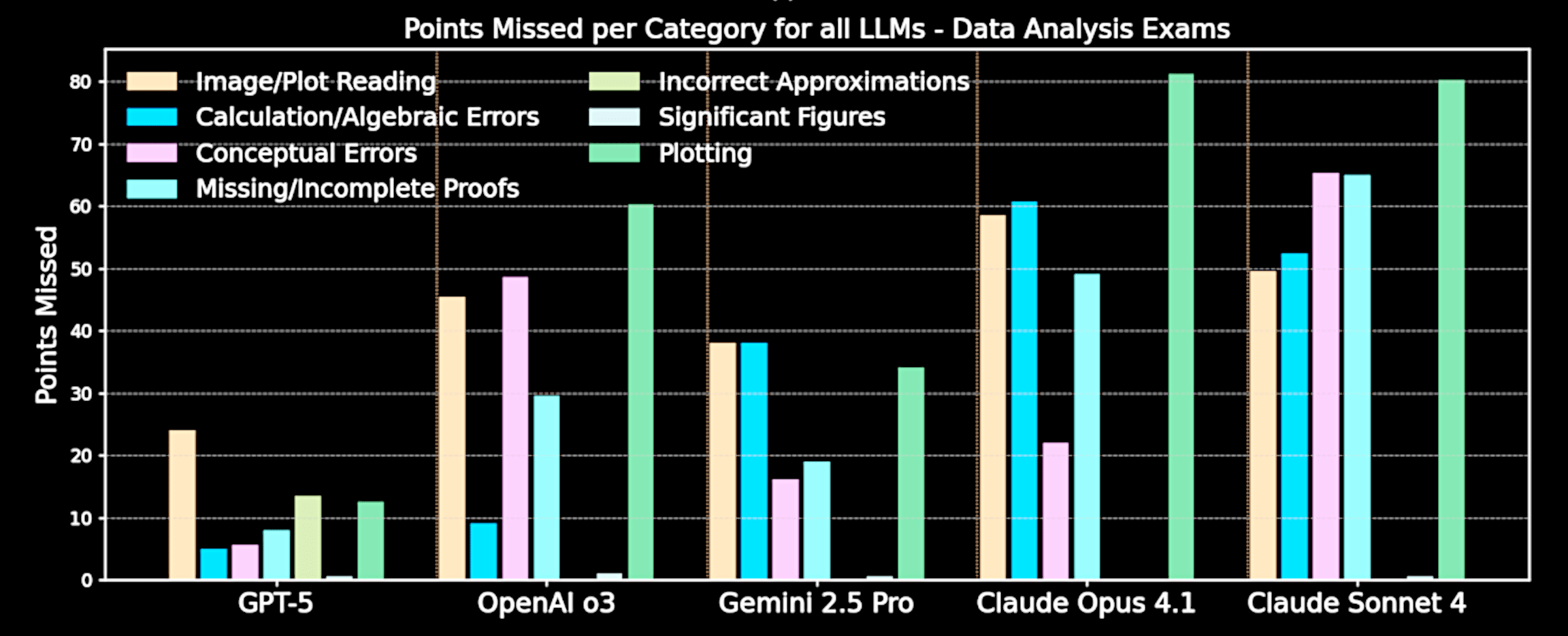

Visual reasoning remains a limiting factor for AI agents

Pinheiro, ..., YST+, 2025

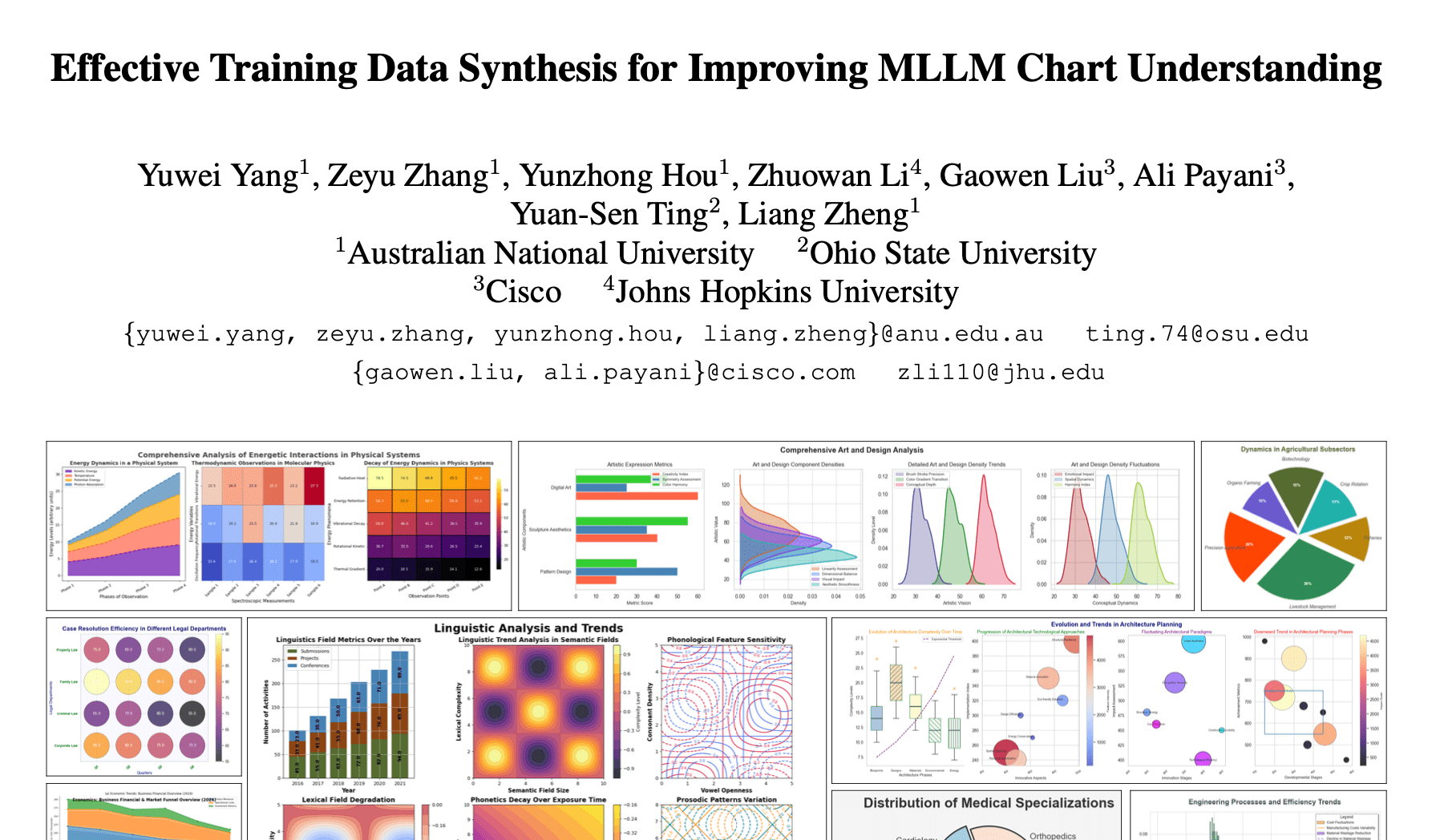

Yang,... YST+, 2025, ICCV

We don't understand how people intuitively understand plots

Yang,... YST+, 2025, ICCV

AI is still 20-50 points worse than humans

Brute force fine-tuning can close the gap in simple descriptive tasks, but not in visual reasoning tasks

Can A.I. come up with good ideas?

How do humans come up with good ideas?

YST+, 2025

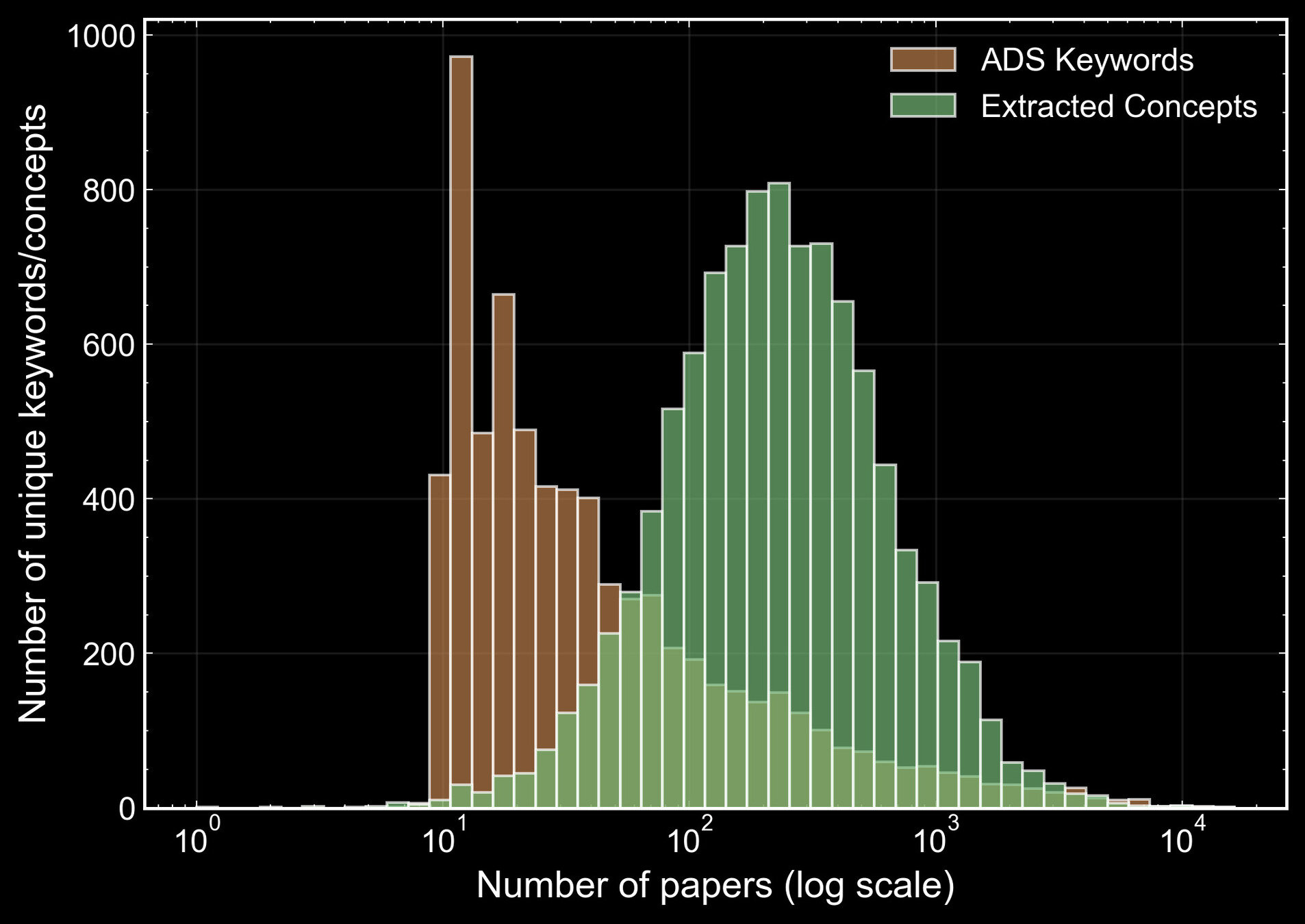

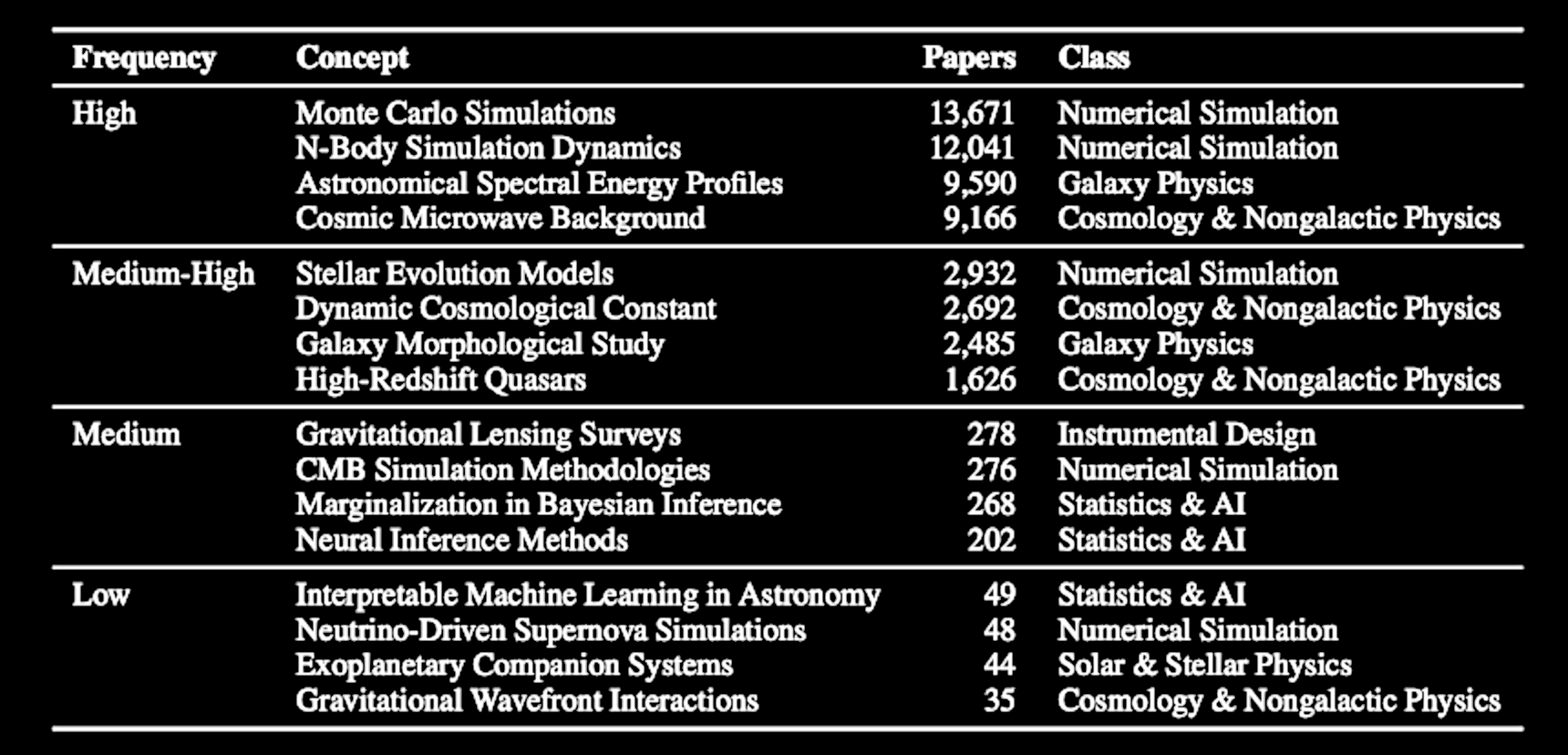

Our concepts show finer granularity than keywords

YST+, 2025


Visualizing the knowledge graph in astronomy

Sun, YST+, 2024a

astrokg.github.io

The temporal evolution of concept co-occurrences in papers

[Figure: concept co-occurrences among the categories Cosmology, Galaxy, High-energy, Sun/Star, Exoplanet, Simulation, Instrument, and AI/Stat]

Applications of AI in Stats

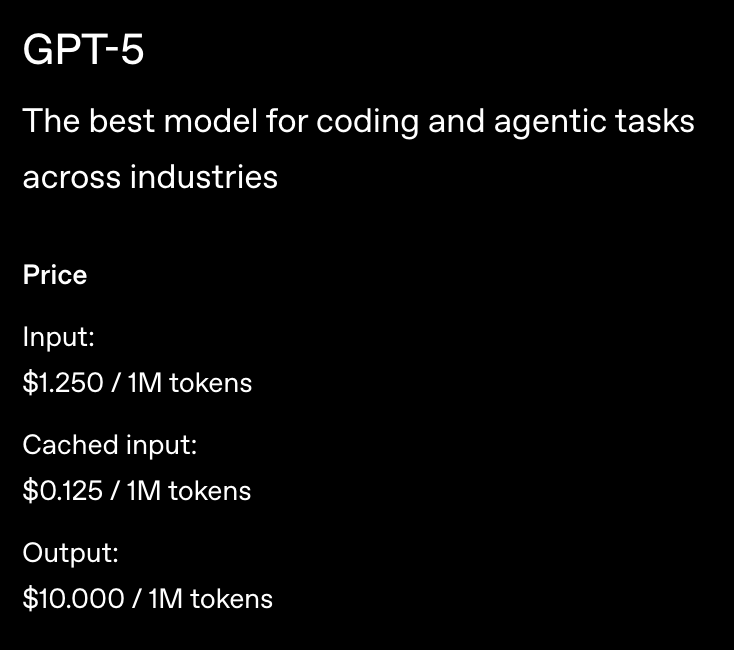

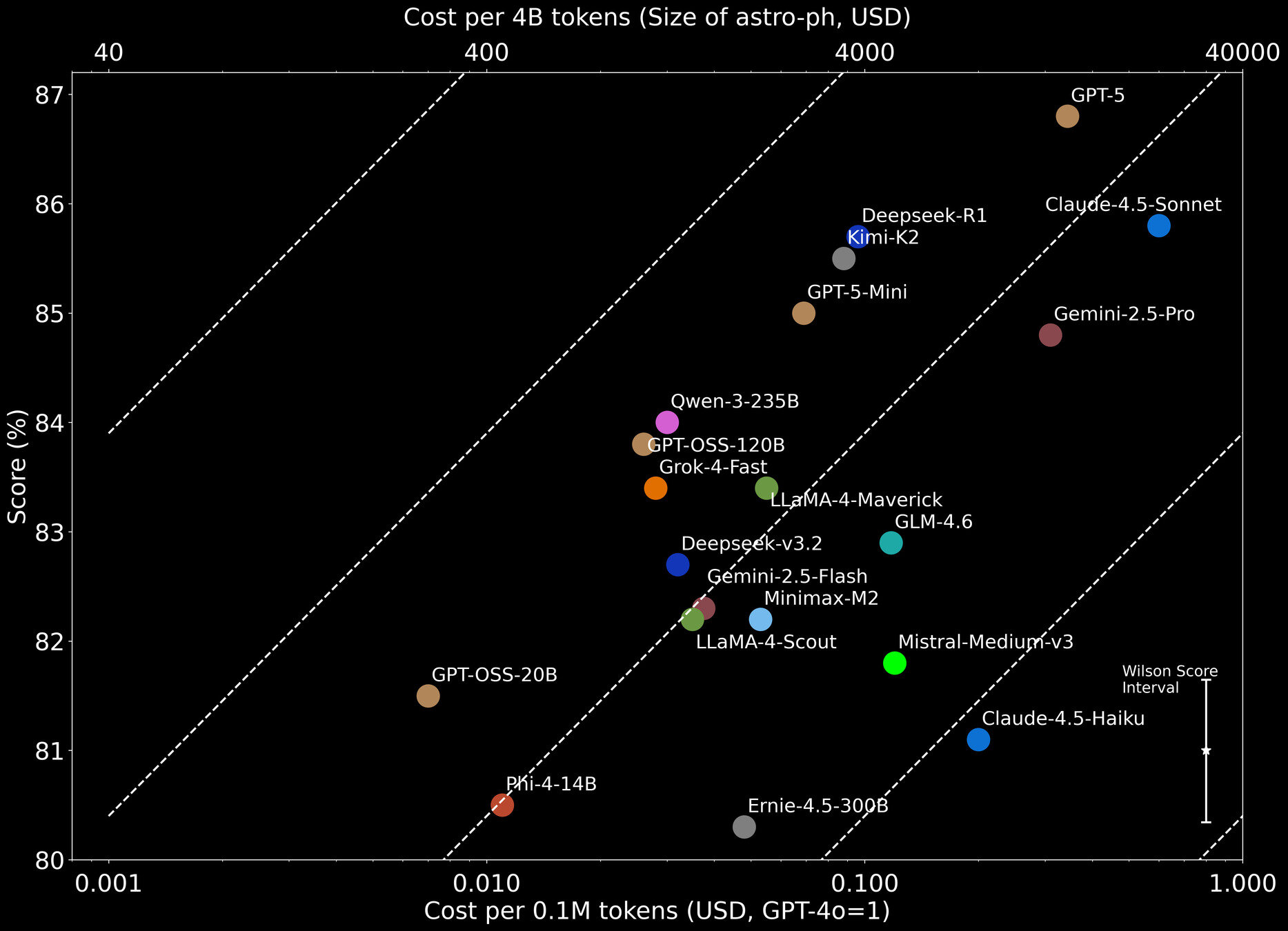

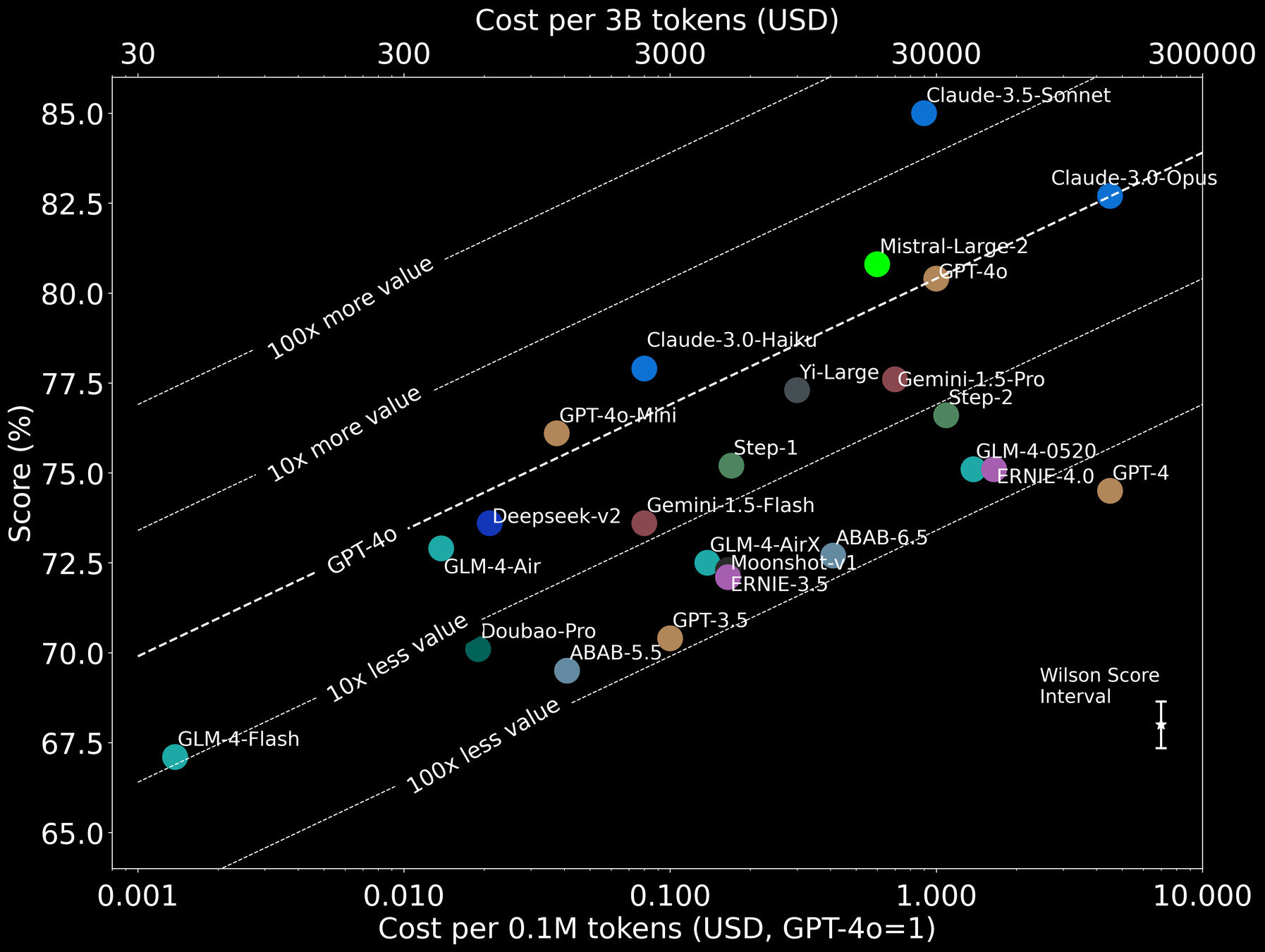

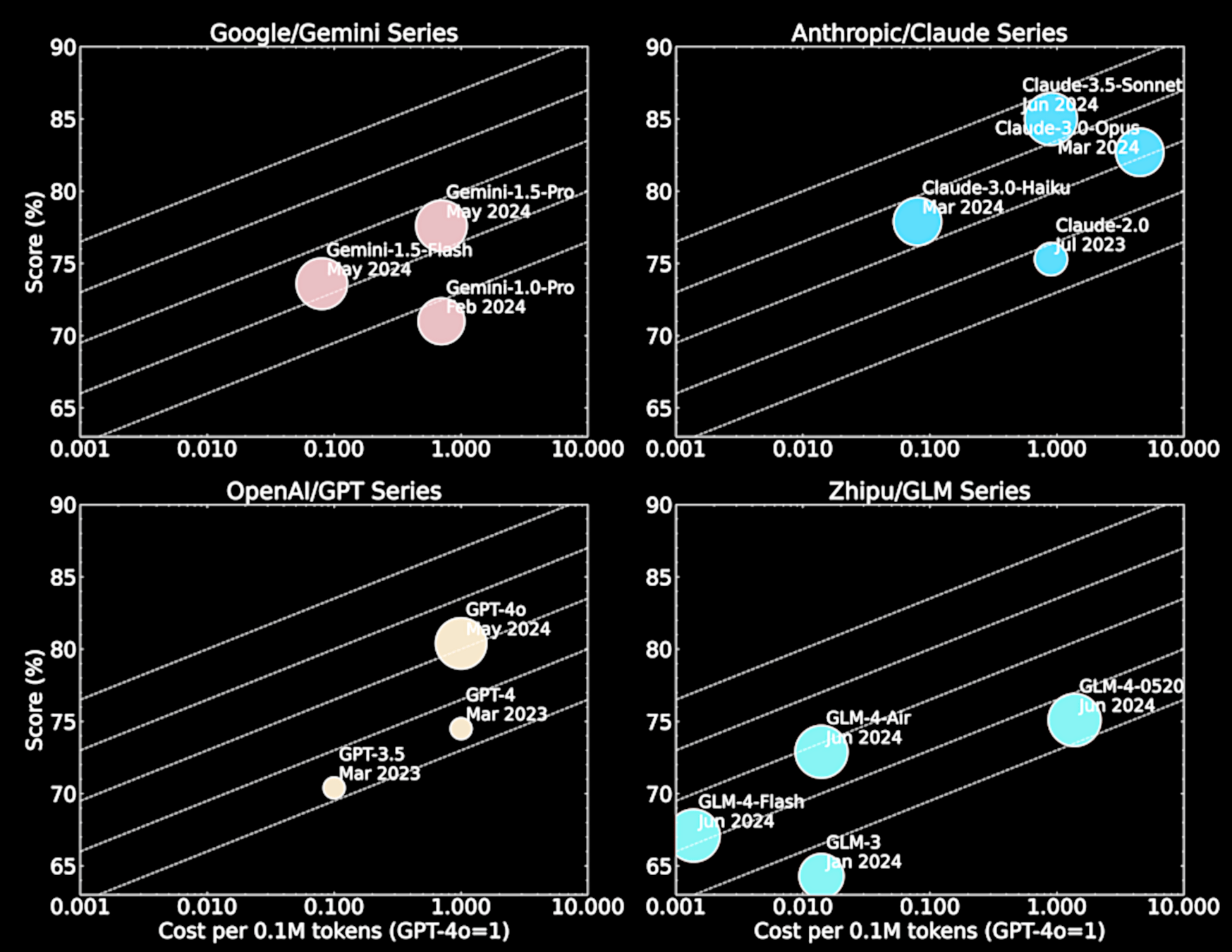

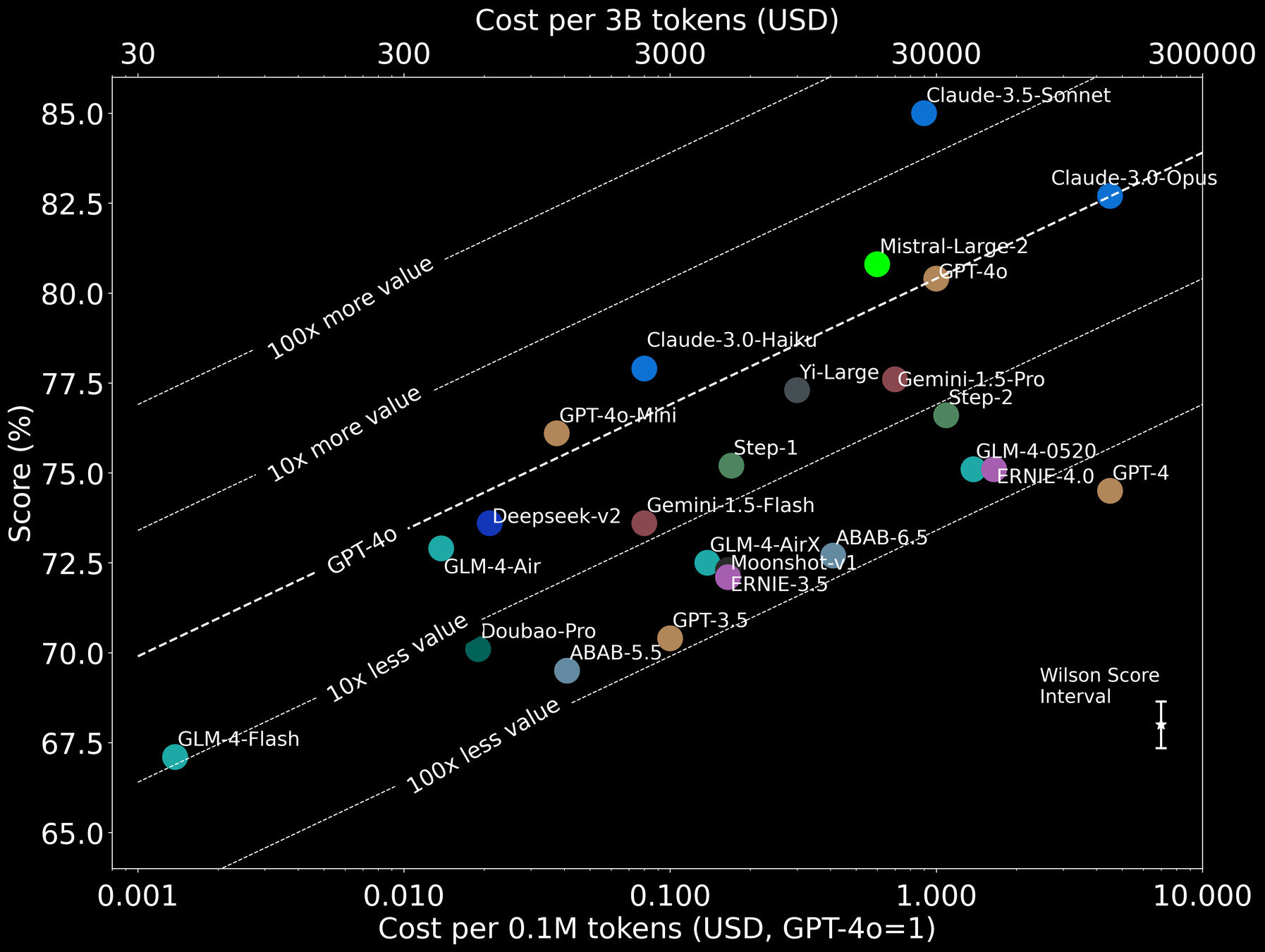

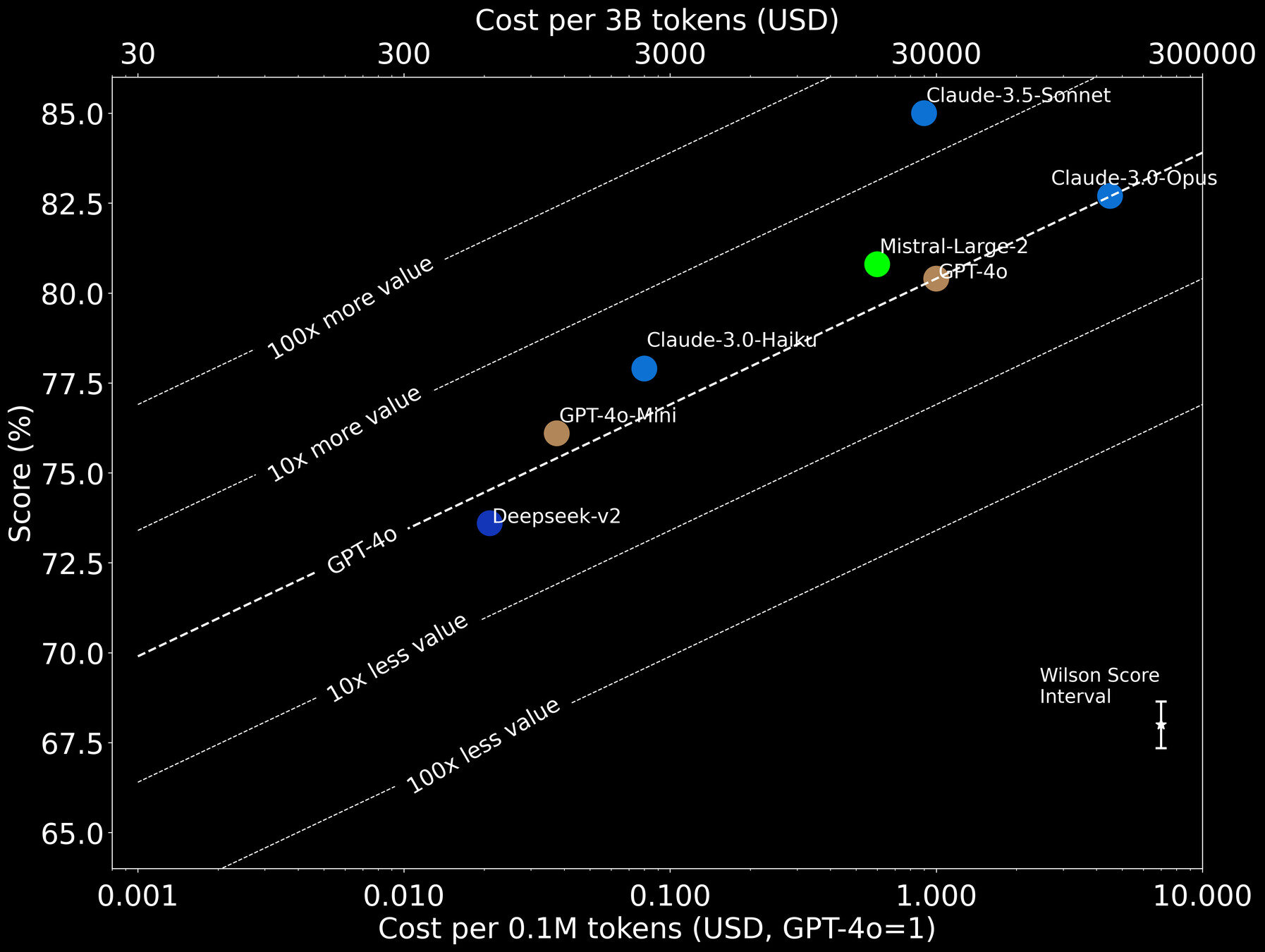

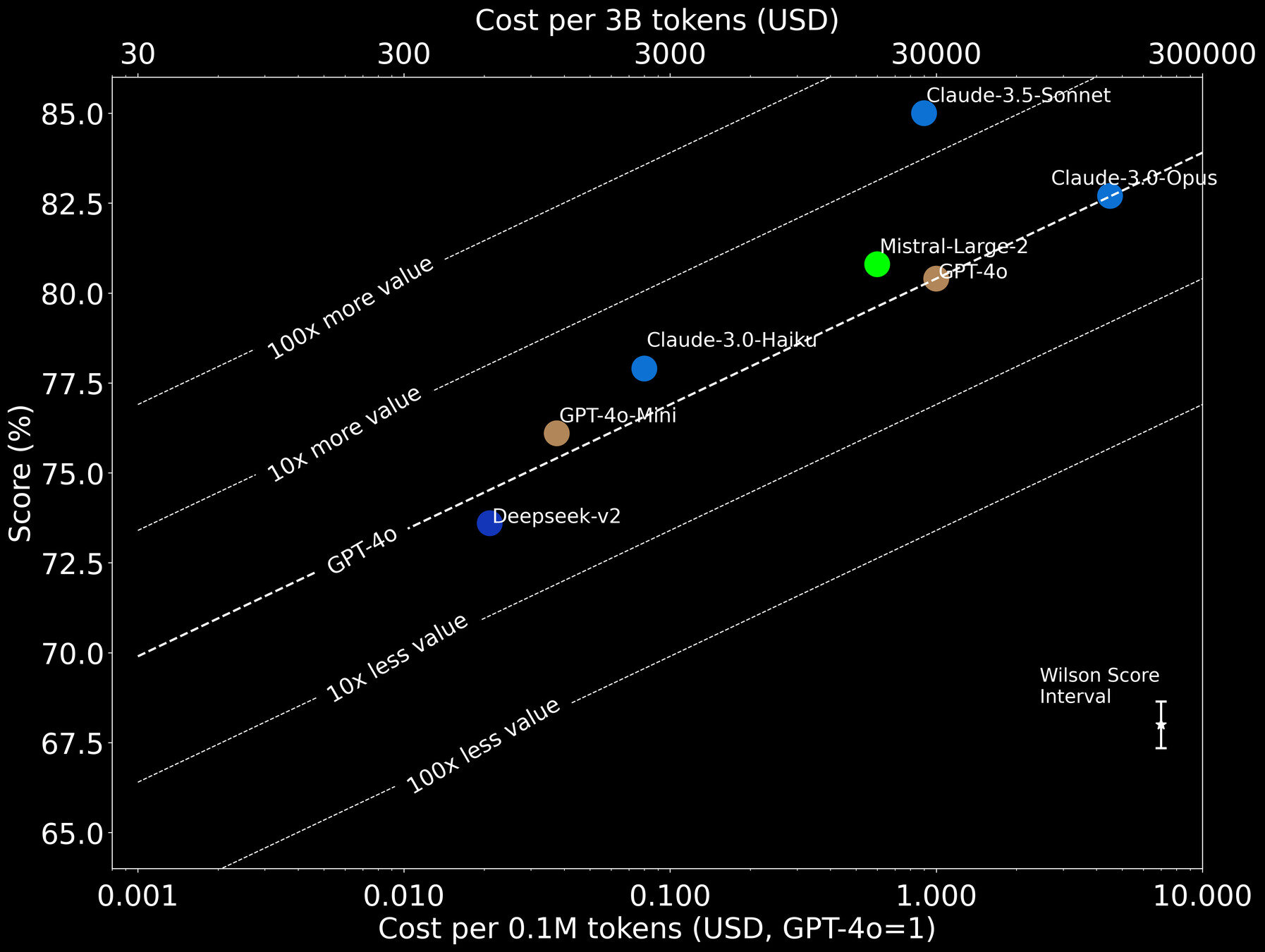

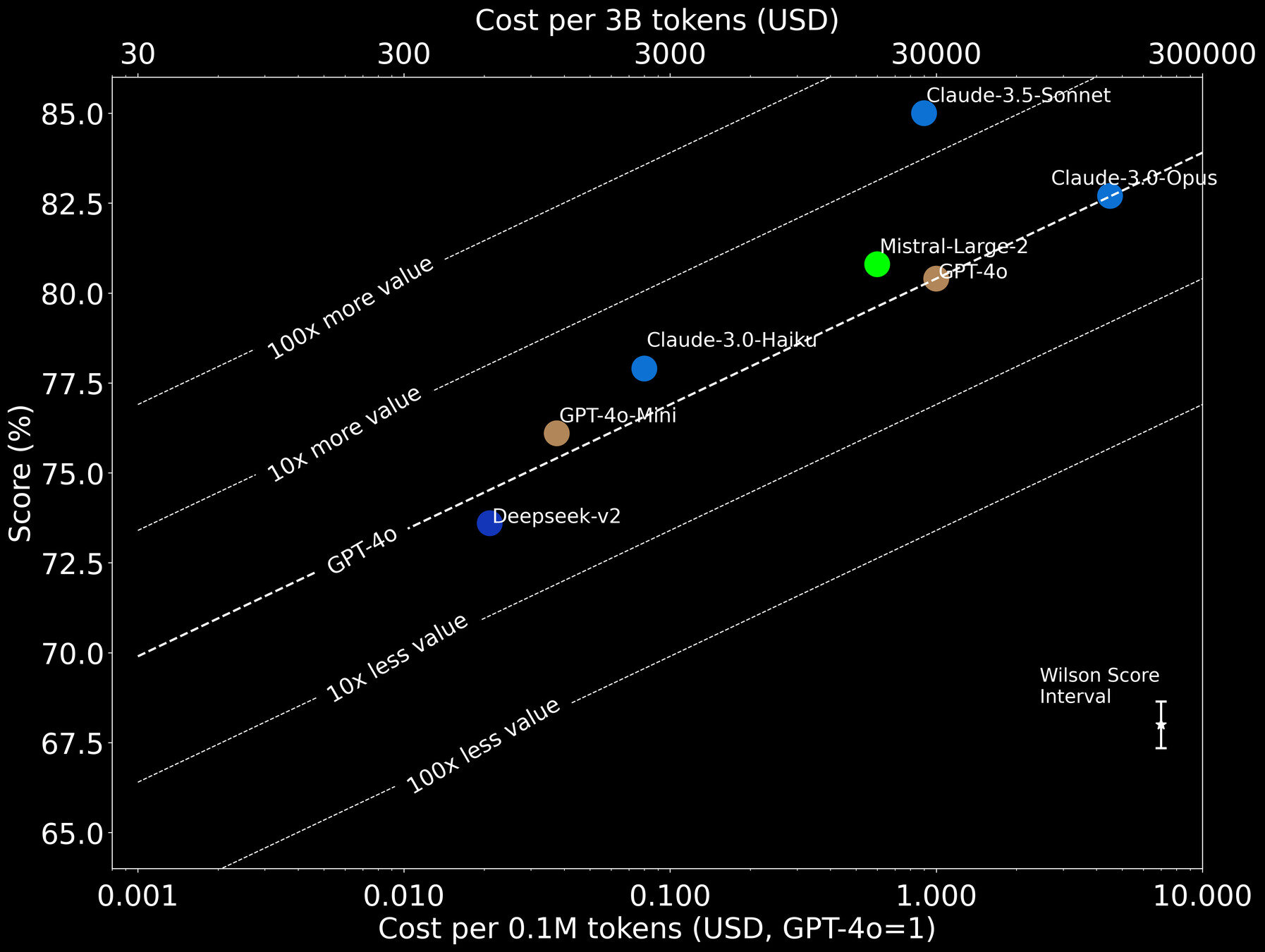

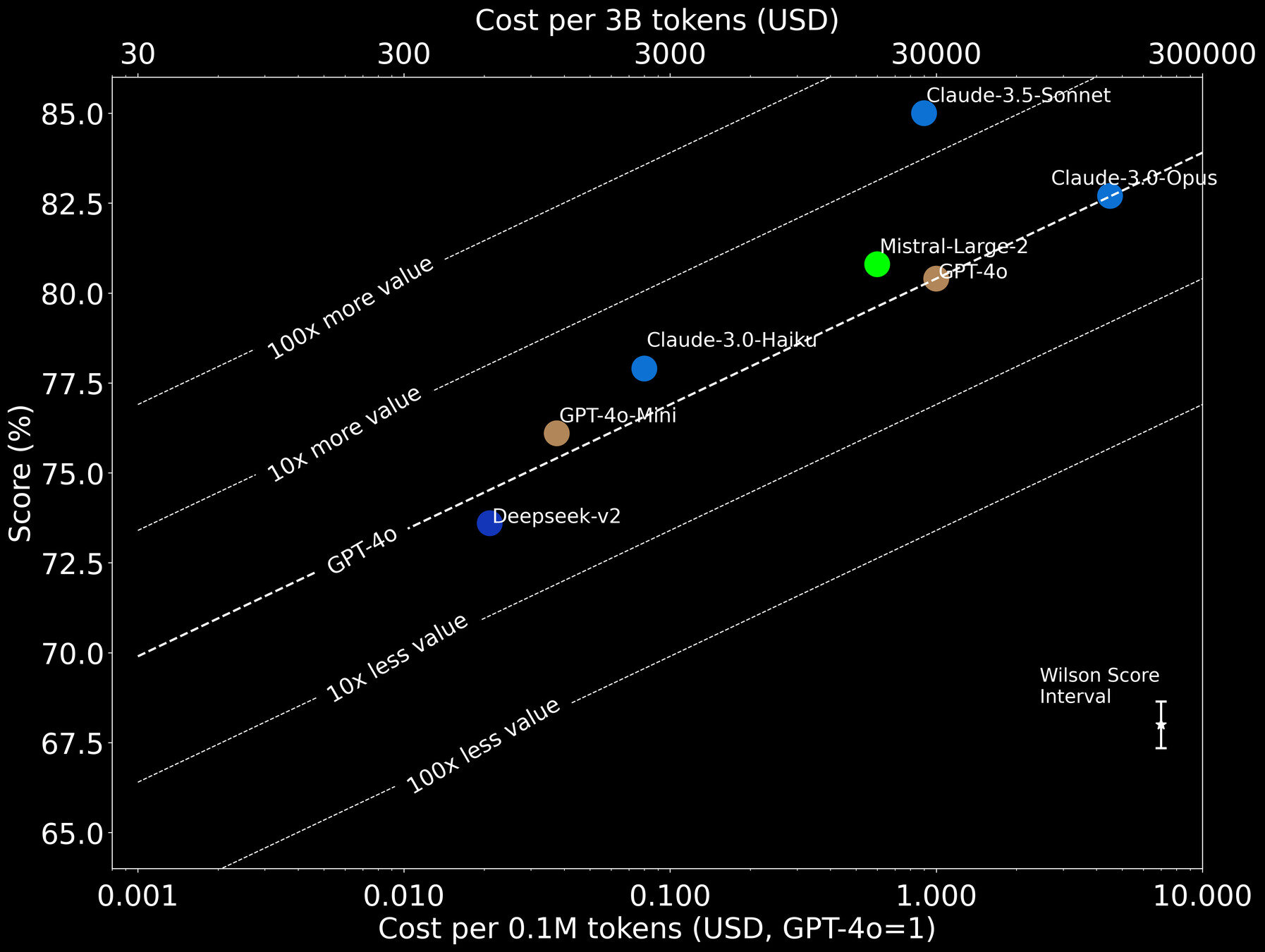

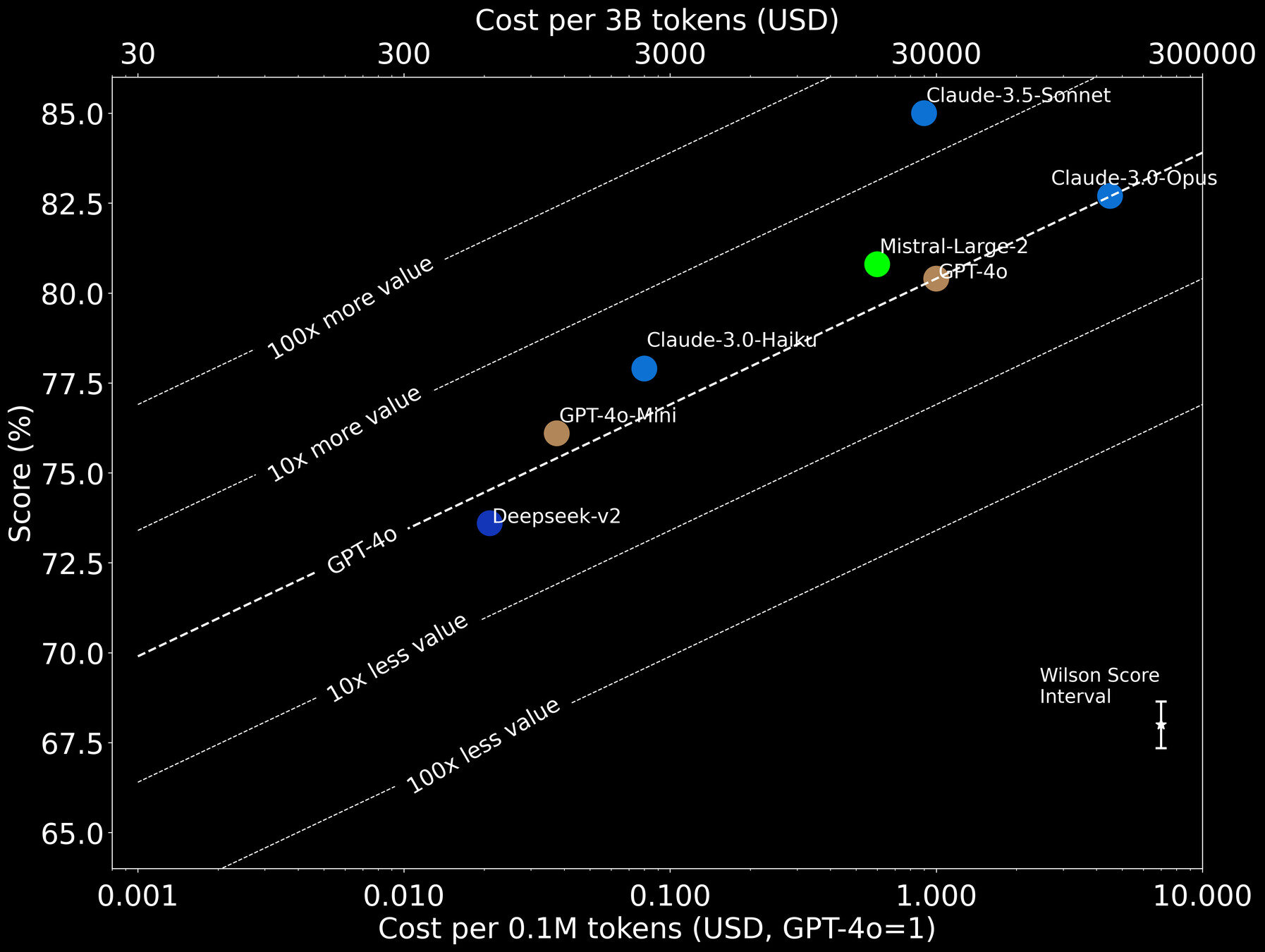

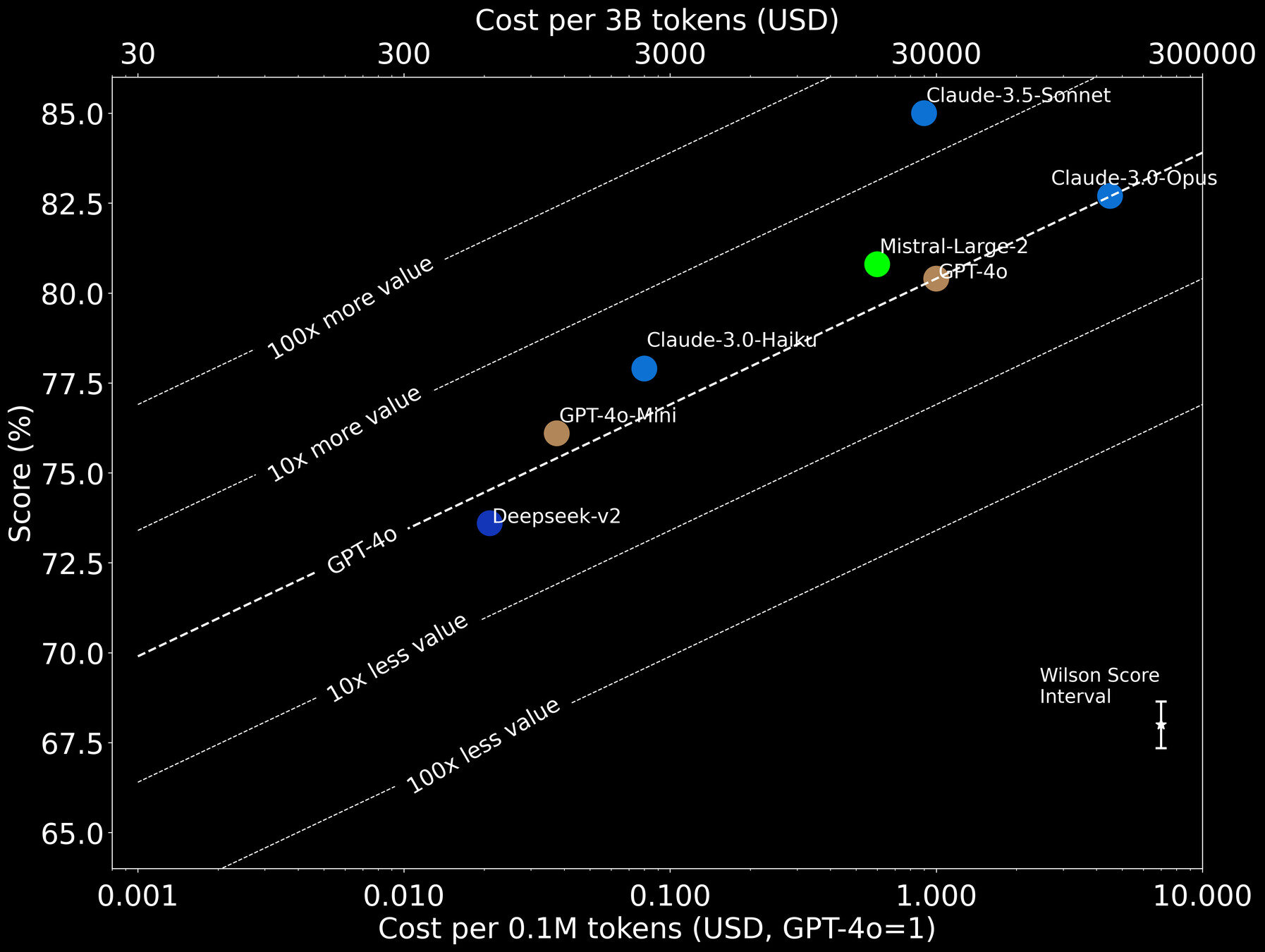

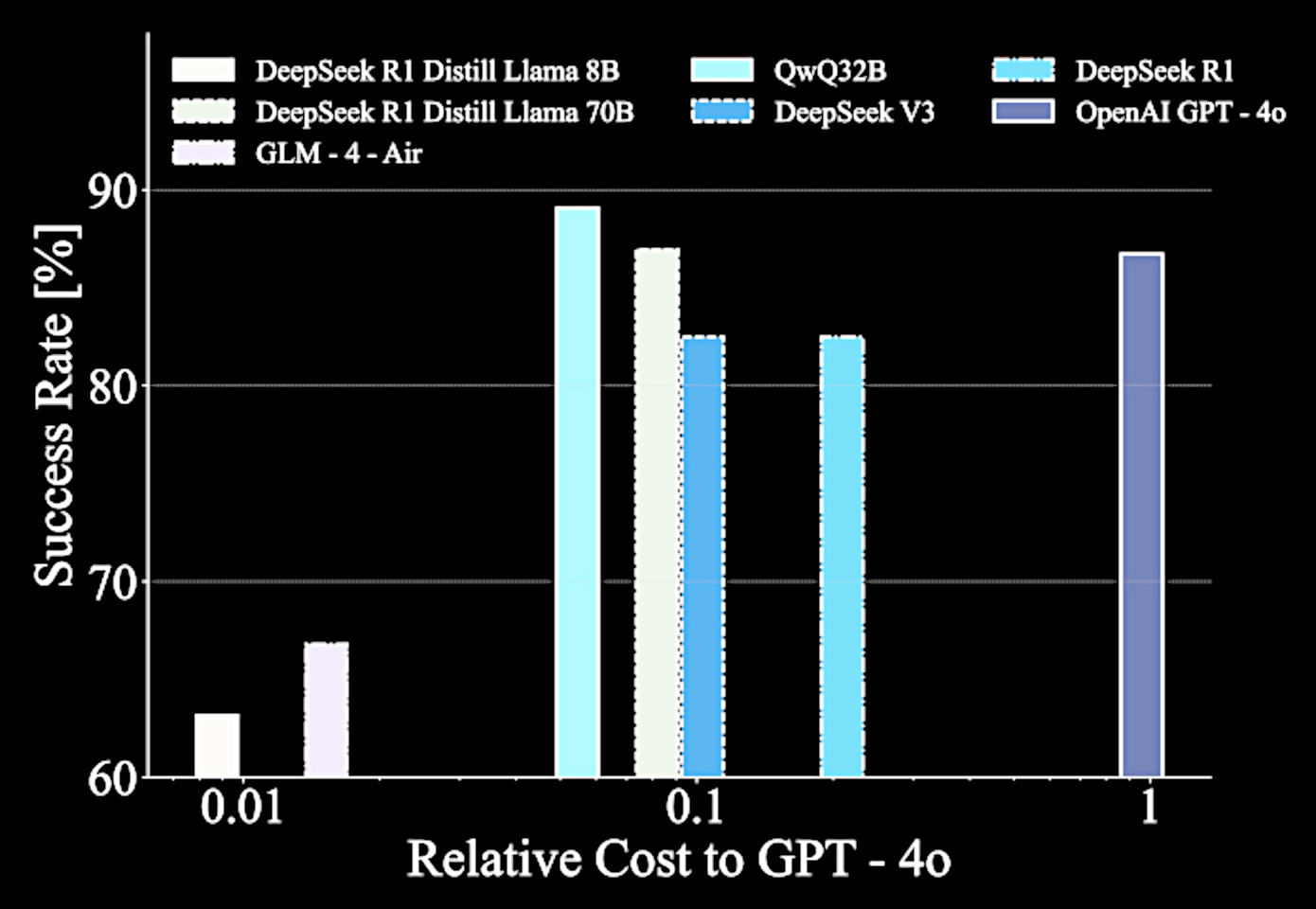

We also need a capable model that can run cost-efficiently...

capable model

vs.

cost efficiency

e.g., GPT-5

In the SED case study, we need ~0.1M tokens per source

= USD 1 per source ...

1B sources = $1 billion

e.g., Roman Space Telescope, Euclid Space Telescope

~ the build cost
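The arithmetic on this slide can be written out as a short sketch. The per-source token count and the USD 1 per source figure come from the slide; the implied price per million tokens is derived from them rather than quoted from any provider, and the function name is hypothetical.

```python
def survey_cost_usd(n_sources, tokens_per_source, usd_per_million_tokens):
    """Total LLM cost of running one SED analysis per source."""
    return n_sources * tokens_per_source / 1e6 * usd_per_million_tokens


tokens_per_source = 1e5   # ~0.1M tokens per source (from the slide)
usd_per_source = 1.0      # USD 1 per source (from the slide)

# Price implied by the two numbers above: USD per million tokens.
implied_price = usd_per_source / (tokens_per_source / 1e6)

# One billion sources, the scale of a large survey.
total_cost = survey_cost_usd(1e9, tokens_per_source, implied_price)
```

Under these numbers the implied price is about USD 10 per million tokens, and a billion sources costs about USD 1 billion.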

Can we improve lightweight open-weights LLMs to perform well on astronomical tasks?

Natural Language Processing experts

Oak Ridge National Lab

Argonne National Lab

AstroMLab (astromlab.org)

Harvard-Smithsonian ADS

U. Illinois Urbana-Champaign

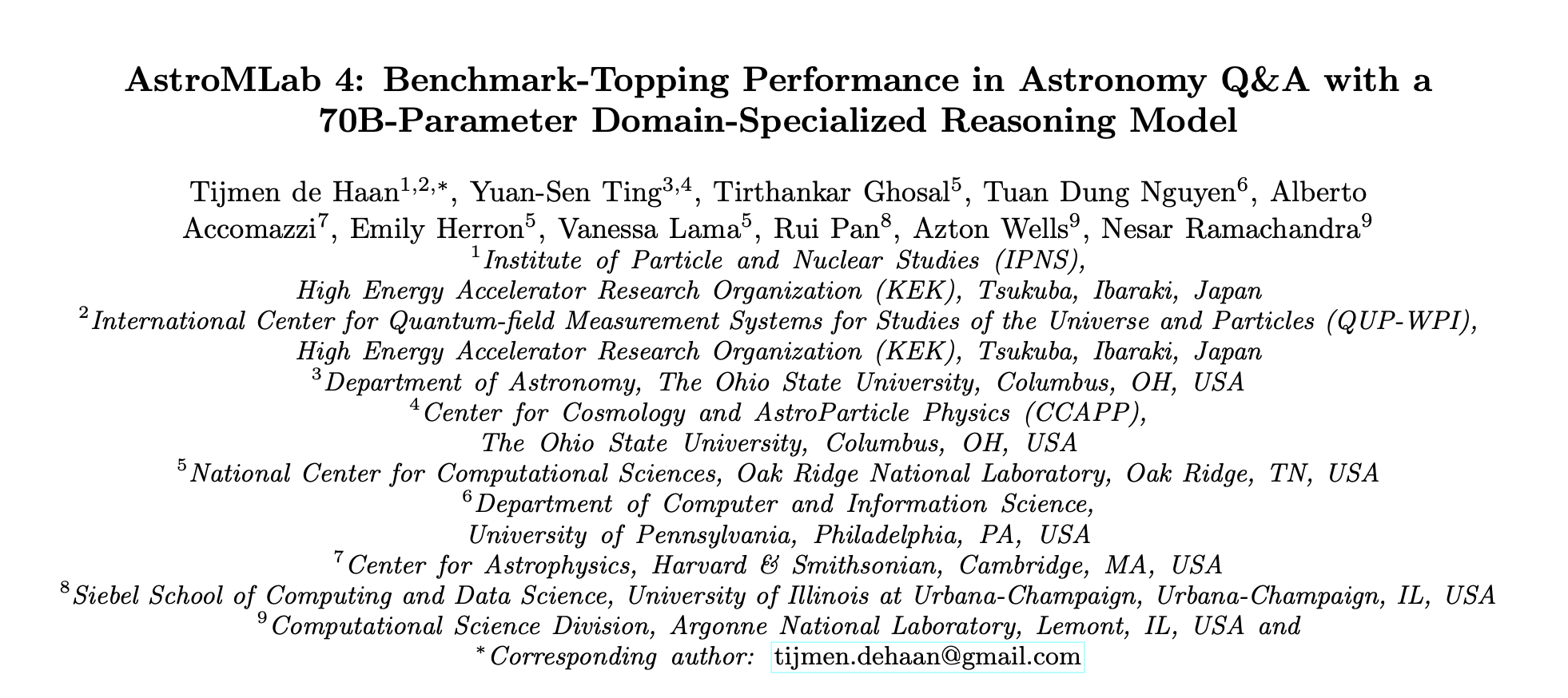

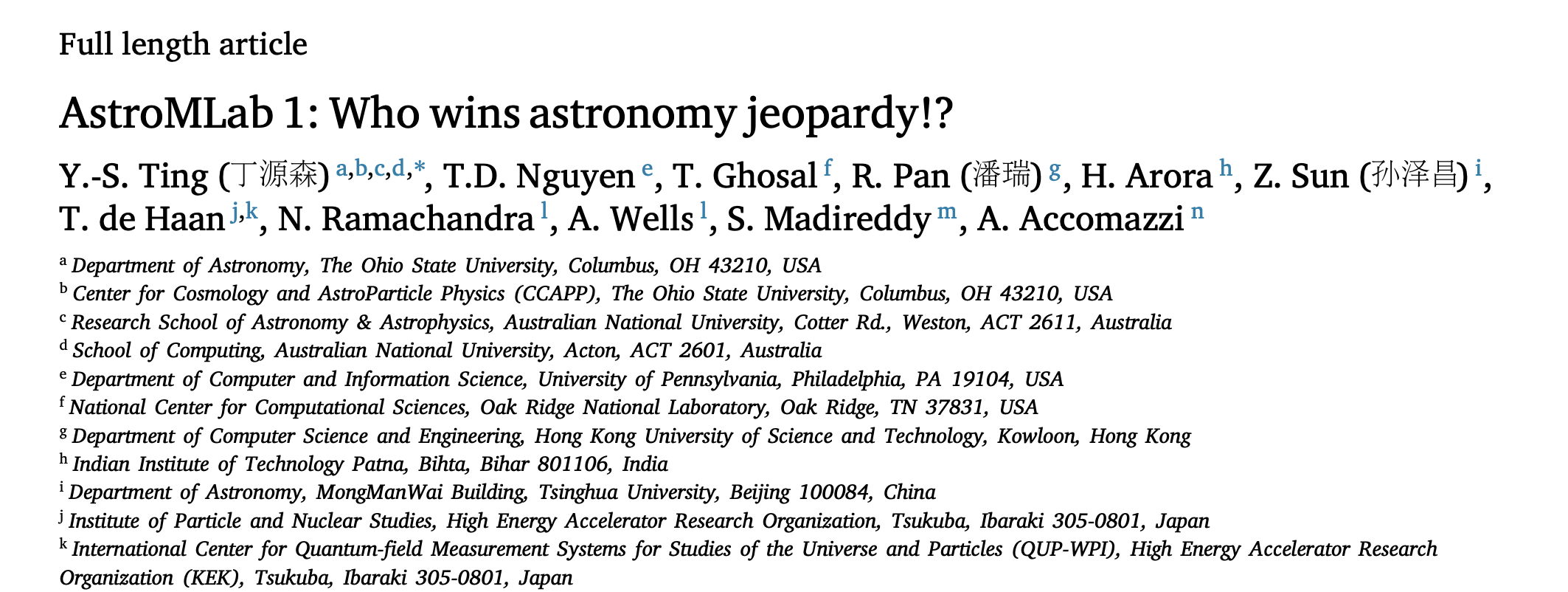

De Haan, YST+ 2025

YST, AstroMLab+, 2025

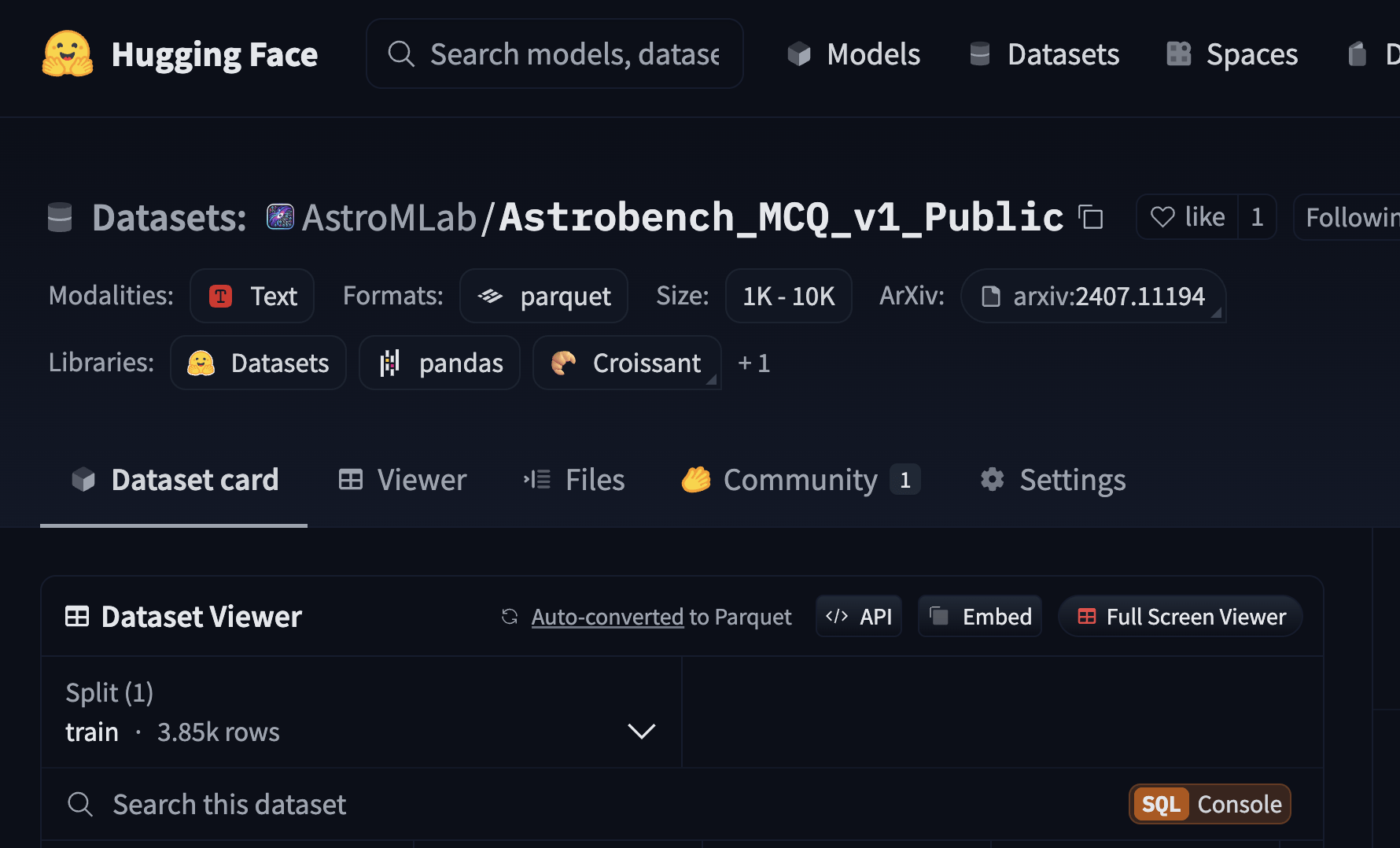

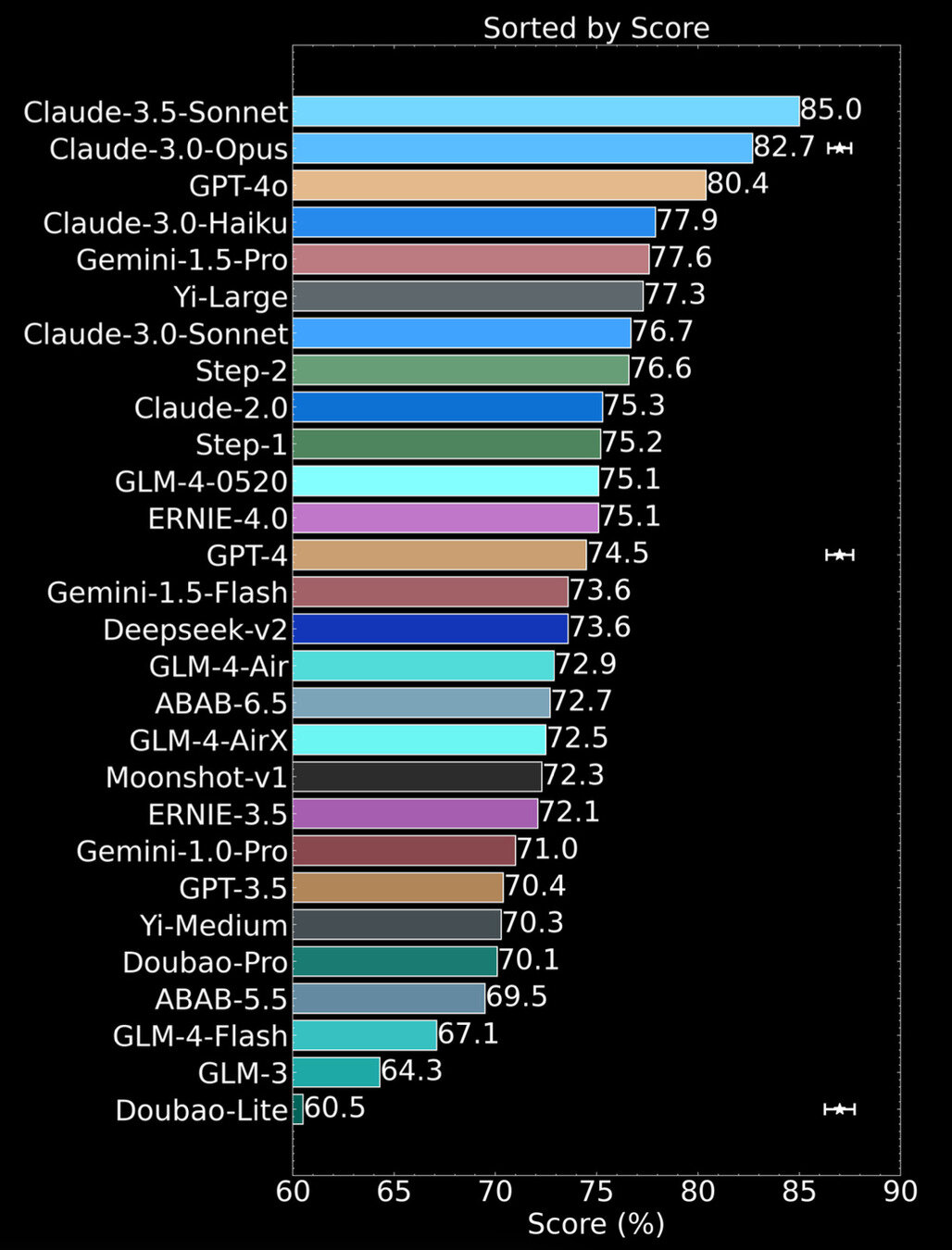

The first extensive benchmarking effort in astronomy


Knowledge Recall

Benchmark multiple choice question - example

What is the primary reason for the decline in the number density of luminous quasars at redshifts greater than 5?

(A) A decrease in the overall star formation rate, leading to fewer potential host galaxies for quasars.

(B) An increase in the neutral hydrogen fraction in the intergalactic medium, which obscures the quasars’ light.

(C) A decrease in the number of massive black hole seeds that can form and grow into supermassive black holes.

(D) An increase in the average metallicity of the Universe, leading to a decrease in the efficiency of black hole accretion.

Score (%)

Cost per 1 SED Source (USD)

Domain experts ~67% (20 points below AI)

AstroSage-8B

(de Haan, YST+ 2025a)

AstroSage-70B

(de Haan, YST+ 2025b)

For astronomy Q&A, AstroSage-70B delivers GPT-5-level performance while costing 20x less

Pan, ..., YST, 2025, to be submitted

Beyond just benchmarking astronomical knowledge recall

What A.I. agent

can solve

Interesting astronomy problems


JWST SED Fitting

Summary :

Modern LLMs' reasoning capabilities make AI agents an exciting new paradigm for astronomical research.

Though limited in reasoning, Mephisto analyzes and navigates SED physical models as effectively as humans.

Nonetheless, expectations should be tempered: Moravec's paradox makes AI capabilities too uneven for full autonomy.

Fine-tuning models, building ecosystems, and proper benchmarking to enable cost-effective, well-rounded AI agents are the path forward.

Extra Slides

Making "plans"

Making "hypotheses"

Annotated

Labelled Data

supervised

tasks

Unlabelled Data

foundational models

Interacting with "physical" world

AI

astronomer

Example of learned "knowledge"

"If there is a gross underestimation in the MWIR bands, consider exploring a wider range of fracAGN values in the agn module to improve the fit in these bands."

LLMs with self-play RL outperform native LLMs

[Plot: Chi-Square of the Fit (axis 5.1 to 6.4) and number of photometry bands fitted within 1σ vs. Number of Learning Iterations (0 to 30), with "Exploration" and "Exploitation" phases marked]

Why this plateau ??

Sun, YST+, 2024b

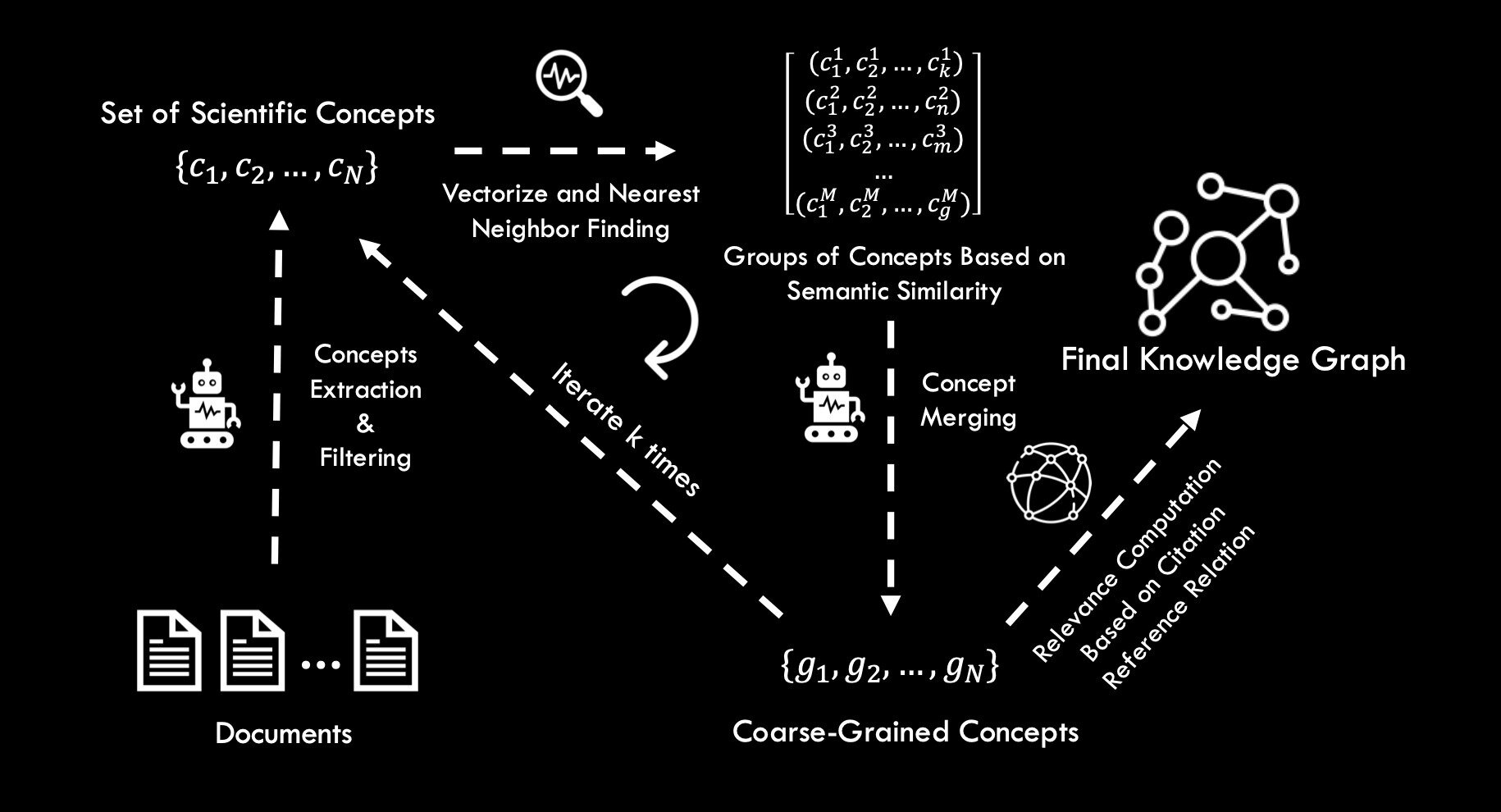

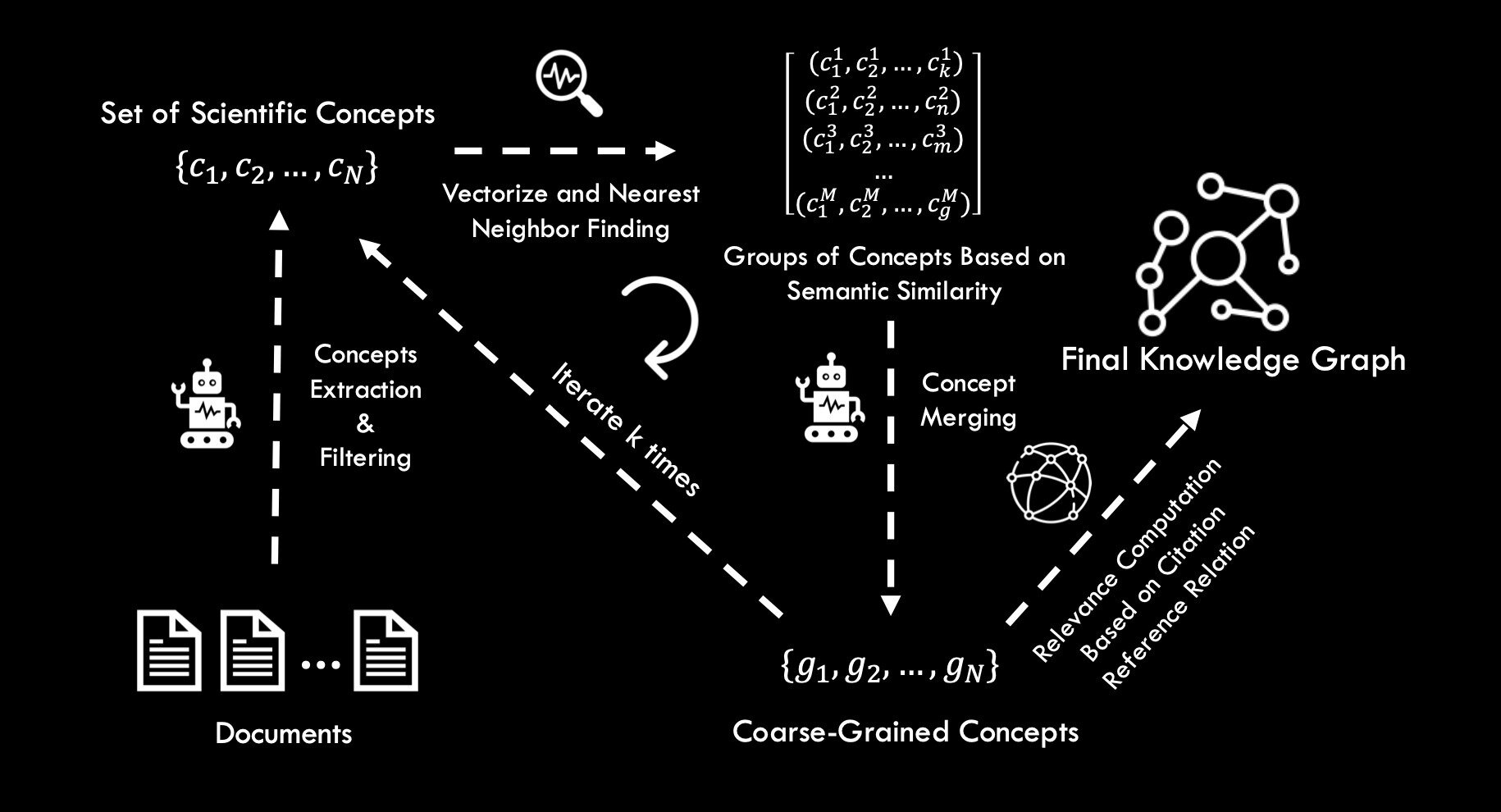

Beyond the thesaurus, extracting concepts from all arXiv papers.

300,000 papers

Mistral 7b

1,000,000 concepts

Control the desired granularity through merging and pruning

Mistral 7b

Concept merging and pruning

spectra = spectroscopy = spectral analysis
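Merging and pruning can be pictured with a minimal sketch: synonymous concepts are grouped with a union-find structure, their counts are summed, and rare concepts are dropped. The synonym pairs are assumed to be given (how they are actually identified is not specified here), and the function name, counts, and threshold are hypothetical.

```python
from collections import Counter


def merge_and_prune(concept_counts, synonym_pairs, min_count):
    """Merge synonymous concepts, then drop those seen fewer than min_count times.

    Every concept named in synonym_pairs must be a key of concept_counts.
    """
    parent = {c: c for c in concept_counts}

    def find(c):
        # Follow parent links to the representative, compressing the path.
        while parent[c] != c:
            parent[c] = parent[parent[c]]
            c = parent[c]
        return c

    for a, b in synonym_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a   # merge b's group into a's group

    merged = Counter()
    for concept, n in concept_counts.items():
        merged[find(concept)] += n

    # Pruning: a higher min_count gives a coarser concept set.
    return {c: n for c, n in merged.items() if n >= min_count}


result = merge_and_prune(
    {"spectra": 5, "spectroscopy": 7, "spectral analysis": 2, "plasmon": 1},
    [("spectra", "spectroscopy"), ("spectroscopy", "spectral analysis")],
    min_count=3,
)
```

Here the three spectroscopy-related labels collapse into one concept and the rare one is pruned, so the threshold acts as a granularity knob.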

Unified Astronomy Thesaurus

Too granular

"Concepts" extracted with large-language models

Quantifying the growth of the field -- by groups of concepts

[Plot: Count [thousands] of scientific concepts (axis 7 to 11) vs. Year (2000 to 2020)]

Sun, YST+, 2024a

Quantifying the growth of the field -- by groups of concepts

[Plot: Count [thousands] of numerical simulation and statistics concepts (axis 0.3 to 1.5) vs. Year (2000 to 2020)]

Sun, YST+, 2024a

The number of ML concepts in astronomy has not grown

[Plot: Count [thousands] of machine learning concepts (axis 0.3 to 1.5) vs. Year (2000 to 2020); example concepts: Linear Regression, Gaussian Process, Random Forest, ...; annotated values: 152, 210, 230]

Sun, YST+, 2024a

Quantifying the cross-domain interaction:

How technical concepts inspire scientific ones

Knowledge graph via the literature-citation metric

[Diagram: papers (e.g., Ting et al., Einstein et al.) contain concepts (Concept A: Dark Matter; Concept B: Plasmon) and are connected to each other by citations]

Distance between concept A and B = [expression shown as a figure in the original slide], averaged over all papers containing concept A

Knowledge graph via the literature-citation metric

Concept

Paper

Technical concept:

Neural Networks

Scientific concept: Large-Scale Structure

Cross-domain linkage shows a two-phase evolution

[Plot: Log Average Linkage (axis -4.6 to -4.0) for numerical simulation x scientific concepts vs. Year (2000 to 2020); highlighted phase: Technology development]

[Diagram: Scientific Concept: Large-Scale Structure and Numerical Simulations. Simulations being developed: linkage decoupled]

Sun, YST+, 2024a

Cross-domain linkage shows a two-phase evolution

[Plot: Log Average Linkage (axis -4.6 to -4.0) for numerical simulation x scientific concepts vs. Year (2000 to 2020); phases: Technology development, then Technology deployment]

[Diagram: Scientific Concept: Large-Scale Structure and Numerical Simulations. Simulations being deployed to sciences: linkage increases]

Sun, YST+, 2024a

Cross-domain linkage shows a two-phase evolution

[Plot: Log Average Linkage (axis -4.6 to -4.0) for numerical simulation x scientific concepts vs. Year (2000 to 2020); labelled: N-body simulation, Hydrodynamical simulation]

Sun, YST+, 2024a

Interest in AI x Astronomy outpaces technological development

[Plot: Log Average Linkage (axis -4.6 to -4.0) for ML x scientific concepts vs. Year (2000 to 2020); labelled: Gaussian process, multi-layer perceptron]

AstroBench: High quality astronomy QA benchmark dataset

Nguyen, YST+ 2023

[Plot: Score (%) vs. Cost per 1 SED Source (USD), as of July 2024, with the domain-expert score marked; annotations: "Worse than GPT-4o" and "Cheaper but not as good"]

Can vary by three orders of magnitude in "value"!

YST, AstroMLab+, 2025a

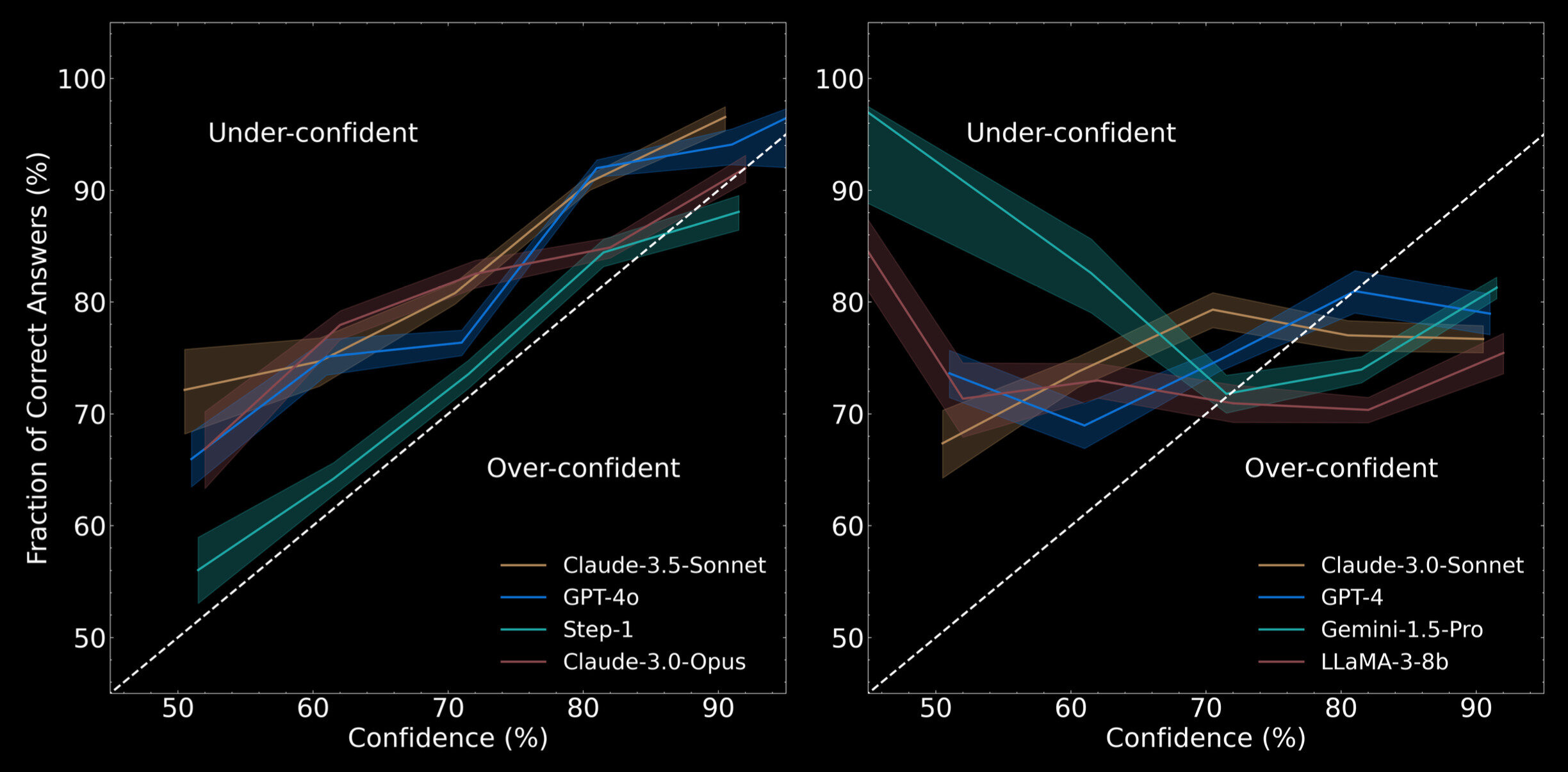

Trustworthiness : Are you sure?

YST, AstroMLab+, 2025a

[Plot: Fraction of Correct Answers (%) vs. Confidence (%), both axes spanning 50 to 100, with under-confident and over-confident regions marked]
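A calibration check of this kind can be sketched by binning answers by stated confidence and comparing each bin's mean confidence with its fraction of correct answers. How confidence was elicited from the models is not shown here, so the inputs below are hypothetical, as is the function name.

```python
def calibration_curve(confidences, correct, bin_edges):
    """Return (mean confidence %, fraction correct %, count) per non-empty bin.

    confidences: stated confidence per answer, in percent.
    correct:     1 if the answer was right, 0 otherwise.
    bin_edges:   increasing edges; each bin is [low, high).
    """
    rows = []
    for low, high in zip(bin_edges[:-1], bin_edges[1:]):
        idx = [i for i, c in enumerate(confidences) if low <= c < high]
        if not idx:
            continue  # skip bins with no answers
        mean_conf = sum(confidences[i] for i in idx) / len(idx)
        frac_correct = 100.0 * sum(correct[i] for i in idx) / len(idx)
        rows.append((mean_conf, frac_correct, len(idx)))
    return rows


# Hypothetical answers: two at 55% confidence, four at 85% confidence.
curve = calibration_curve(
    confidences=[55, 55, 85, 85, 85, 85],
    correct=[1, 0, 1, 1, 1, 0],
    bin_edges=[50, 60, 70, 80, 90, 100],
)
```

A bin whose mean confidence exceeds its fraction correct falls on the over-confident side of the diagonal; the reverse is under-confident.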

[Plot: Score (%) (axis 60 to 90) for models released before summer 2024, models released after summer 2024, and proprietary models; as of July 2024]

YST, AstroMLab+, 2025a

Special thanks to

Warren Buffett:

" The trick is, when there is nothing to do, do nothing "

Still it is not very scalable

LLaMA-3.1 70b throughput on four H100 GPUs

= ~ 100 tokens / second

1 SED source = 15 GPU minutes

1B sources = 10M GPU days

A cluster with 10,000 H100 GPUs

running for 3 years

= 0.03 USD

= 40 USD
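The scaling estimate can be reproduced with a few lines. The 15 GPU minutes per source, the one billion sources, and the 10,000-GPU cluster are the slide's figures; the function name is hypothetical, and whether the per-source figure counts the four-GPU node as one unit is not stated, so it is taken at face value.

```python
def gpu_budget(n_sources, gpu_minutes_per_source, cluster_gpus):
    """Return (total GPU days, years of wall-clock time on the cluster)."""
    gpu_days = n_sources * gpu_minutes_per_source / 60.0 / 24.0
    cluster_years = gpu_days / cluster_gpus / 365.0
    return gpu_days, cluster_years


# ~0.1M tokens at ~100 tokens/second is about 1000 s of generation,
# the same order as the slide's 15 minutes per source.
seconds_per_source = 1e5 / 100.0

gpu_days, cluster_years = gpu_budget(
    n_sources=1e9, gpu_minutes_per_source=15.0, cluster_gpus=10_000
)
```

This gives roughly ten million GPU days, or a little under three years on a 10,000-GPU cluster, in line with the slide's rounded values.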

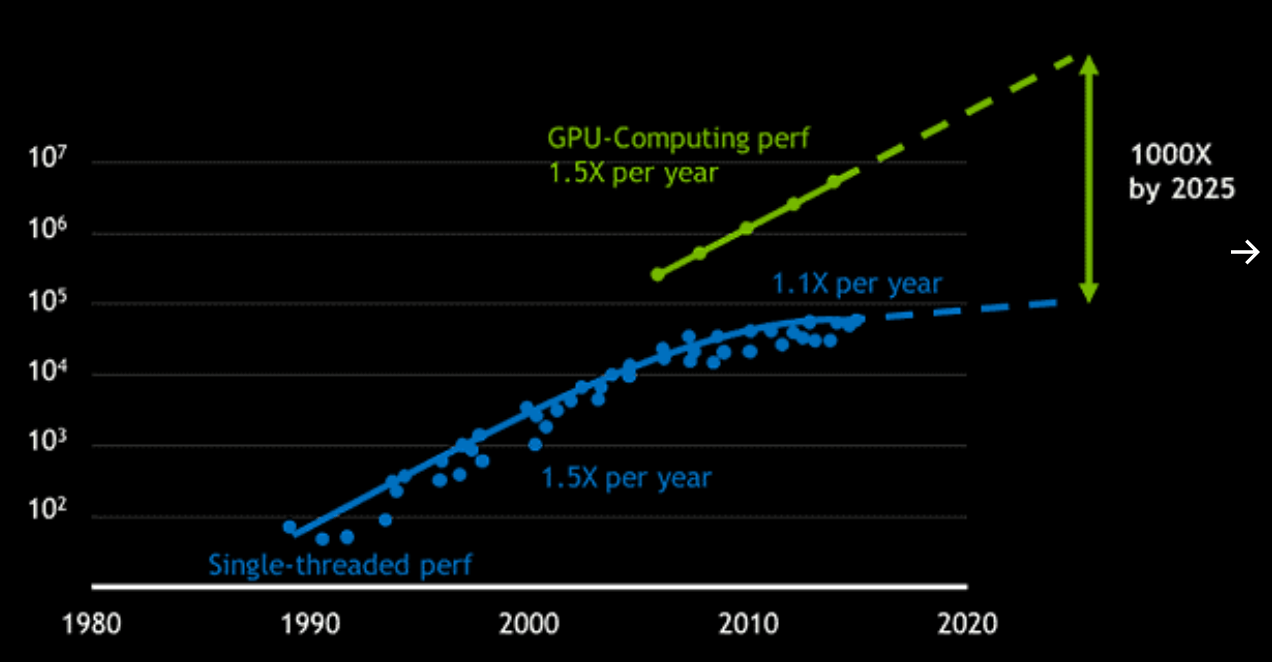

Huang's Law

Compute Power

Year

CPU Moore's Law is plateauing

GPU is

picking up the pace

LLMs are getting very cheap, very quickly

The price drop has an e-folding time of approximately 3 months
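An e-folding time of three months means the price falls by a factor of e every three months. A minimal sketch of that exponential model follows; the pure exponential form is an assumption for illustration, and the function name is hypothetical.

```python
import math


def price_fraction(months_elapsed, efold_months=3.0):
    """Fraction of the original price remaining after months_elapsed."""
    return math.exp(-months_elapsed / efold_months)


after_one_efold = price_fraction(3.0)   # price divided by e
after_one_year = price_fraction(12.0)   # four e-foldings
```

Under this model a year corresponds to four e-foldings, which leaves under 2% of the original price.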

YST, AstroMLab+, 2025

[Animated plot: Score (%) vs. Cost per 1 SED Source (USD), starting before July 2024 and advancing in steps of 3 months. Open-weight and proprietary models are distinguished, with some proprietary entries marked experimental / not released. Models labelled across the frames: Google Gemma-2, Google Gemini-1.5, DeepSeek v2, Alibaba Qwen-2.5, Meta LLaMA 3, Yi 01, X's Grok, Stepfun, Microsoft Phi-3.5, Nvidia's Nemotron, DeepSeek v3 / R1, OpenAI (o3), Google Gemini-2.0, Microsoft Phi-4, MiniMax 01, Gemini-2.5-Pro, Claude-3.7-Sonnet, Meta LLaMA 4]

1B sources = $1 billion

e.g., Roman Space Telescope, Euclid Space Telescope

~ the build cost (July 2024)

3% of the build cost (March 2025)

Mephisto achieves the same success rate with 1/30 of the cost

March 2025

Sun, YST+, 2025

GPT-4o

QwQ32B

Data-poor , Theory-rich

Collecting

more data

???

Data-poor , Theory-rich

Data-rich , Theory-poor

Roman, HSC, Euclid, DESI, SDSS, PFS

Data-poor , Theory-rich

Agentic Astronomy

By Yuan-Sen Ting
