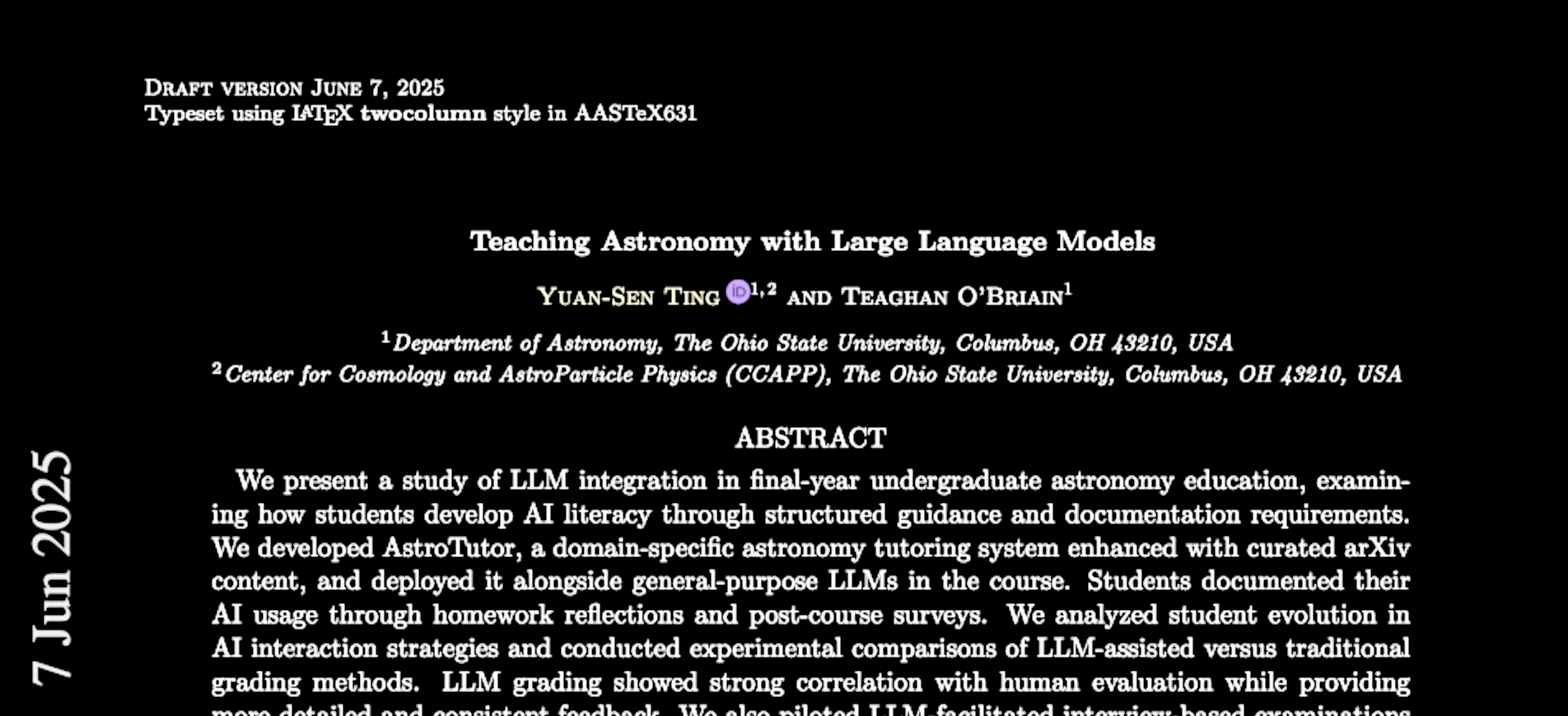

Yuan-Sen Ting

The Ohio State University

Teaching Astronomy with Large Language Models

Why this discussion is important:

Students will use chatbots by default

Traditional assessment may become obsolete

There are many ethical implications (learning, fairness, etc.)

Even the most conservative AI users should understand what AI can do in order to assess students fairly

Reference letter (paraphrased):

"This (undergraduate) student is amazing because he is able to fit isochrones within a day. It took me about two weeks to write such a code when I was his age."

My reaction: duh...

Personally

My coding speed is 10x faster than two years ago

90% (if not more) of my code is written by AI

I still do most of my writing, at least the first draft

But my writing speed is about 5x faster

(paraphrased)

"I can now sling Python code for LSST like a 20-year-old, whee!"

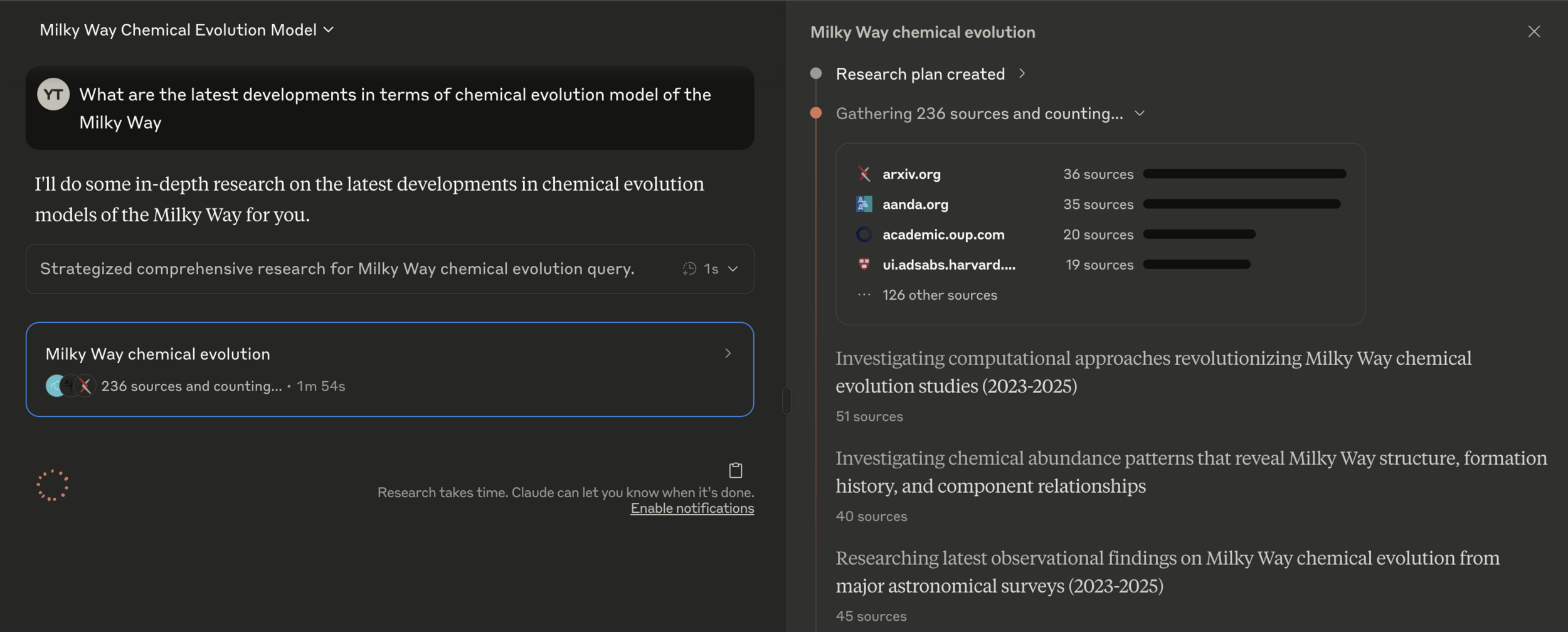

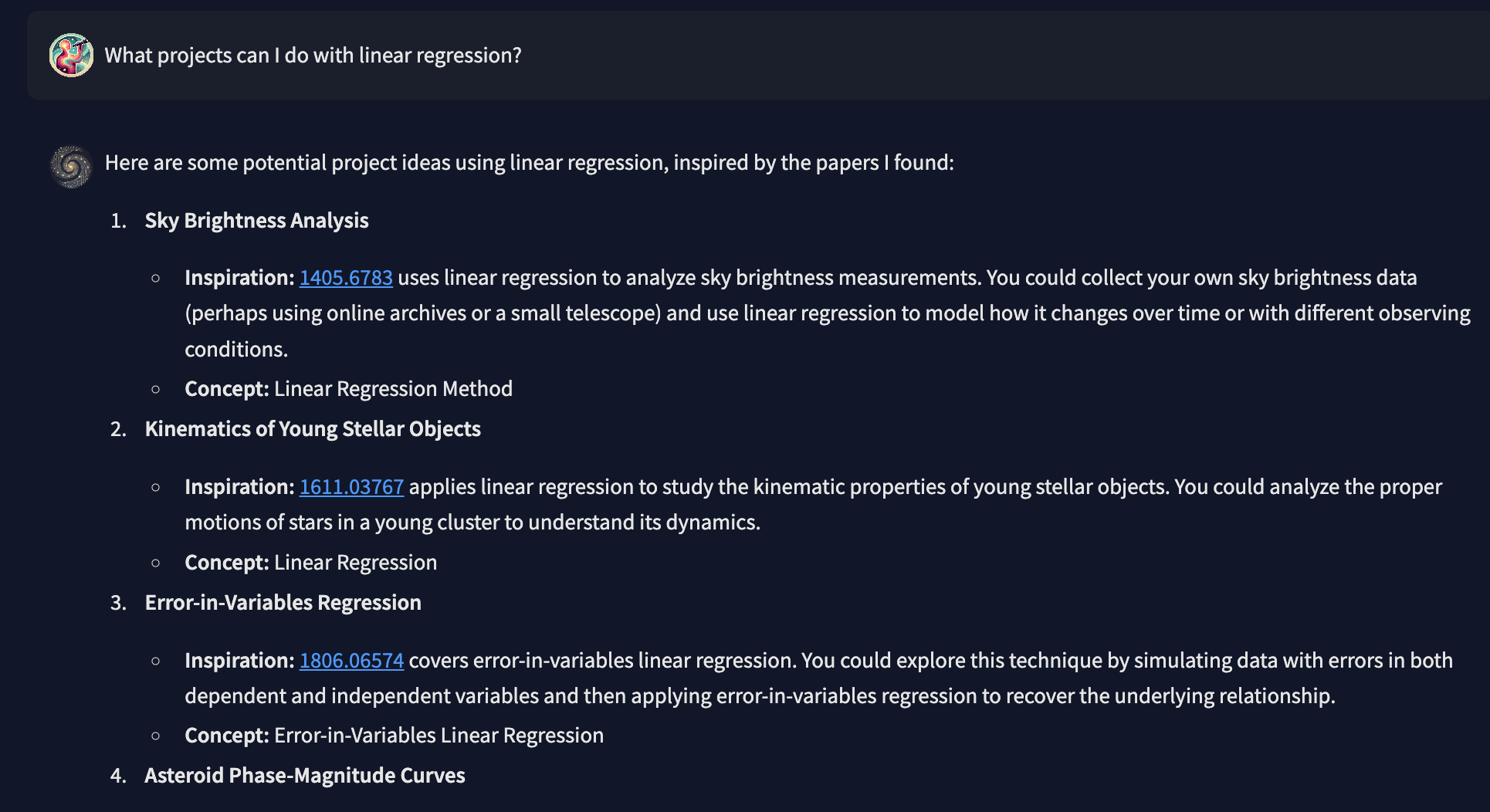

Did you know?

You can summarize the latest research on any topic, accurately and with proper (non-hallucinated) references, using a single prompt.

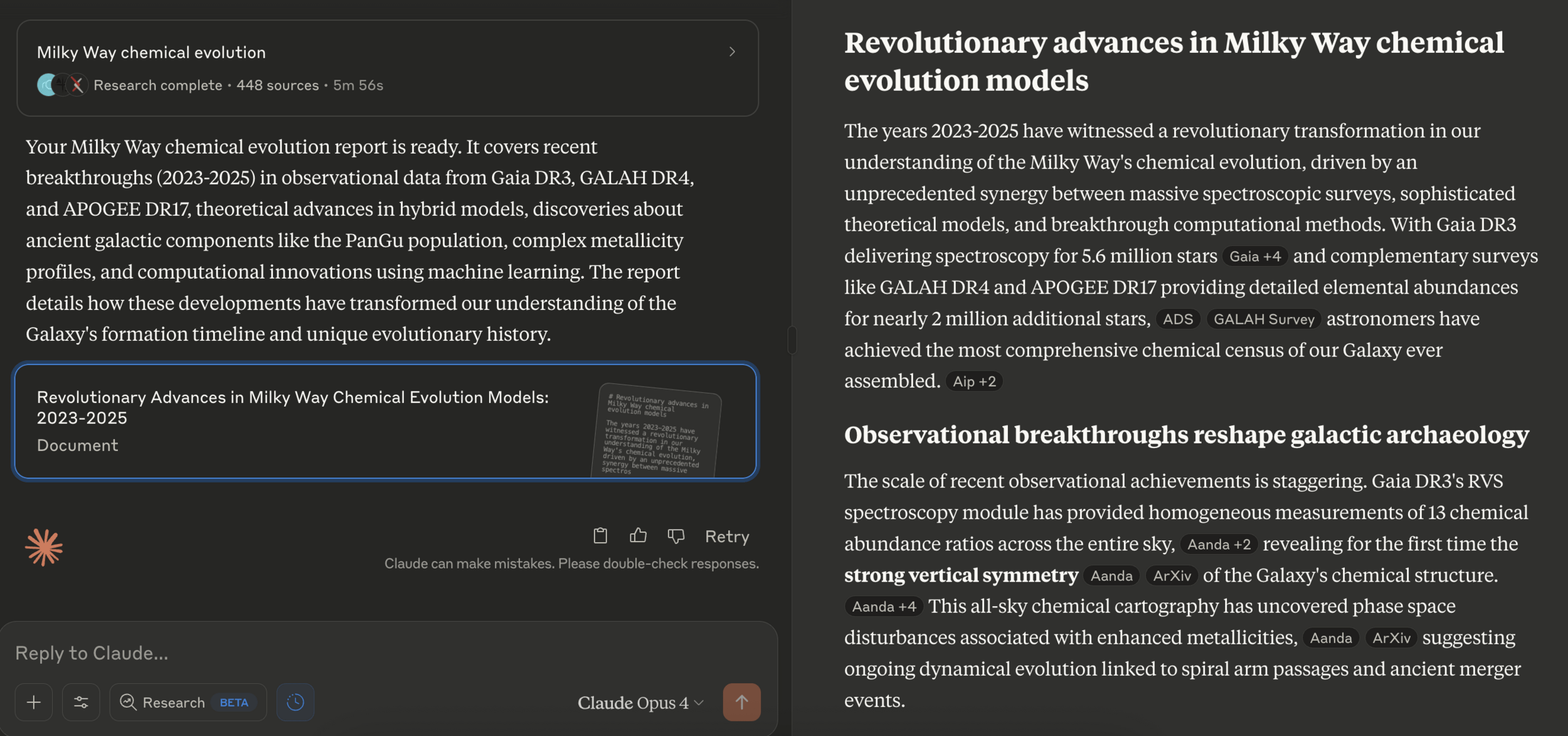

Did you know?

You can create an interactive podcast for any paper on arXiv

What is the purpose of education?

What makes a good artist?

Pitch perfect ≠ good "artistry"

But can you call yourself a good artist without being able to sing live?

How much "singery"—how much "artistry"?

There isn't necessarily a right or wrong answer

AI tutors as study companions

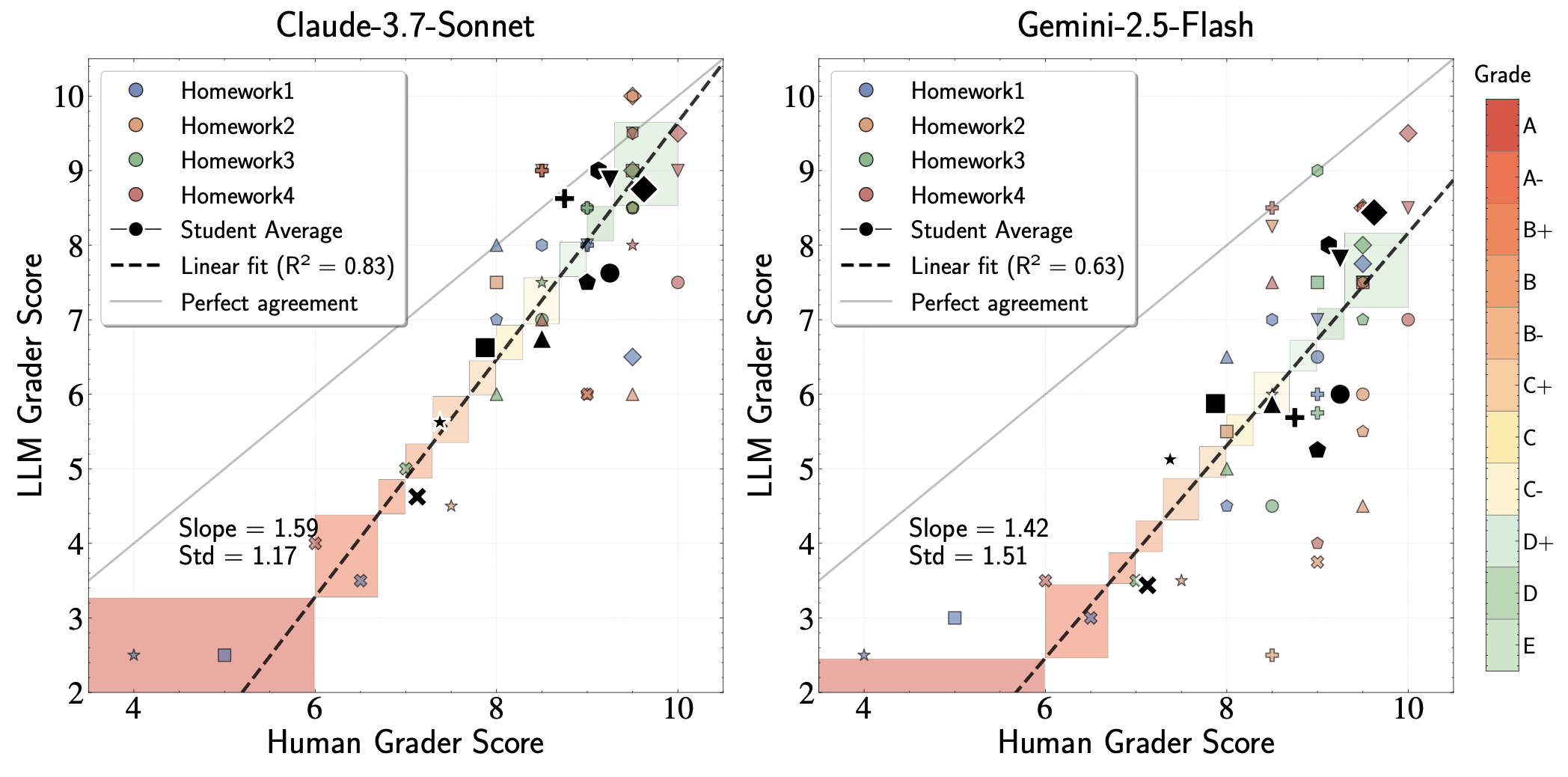

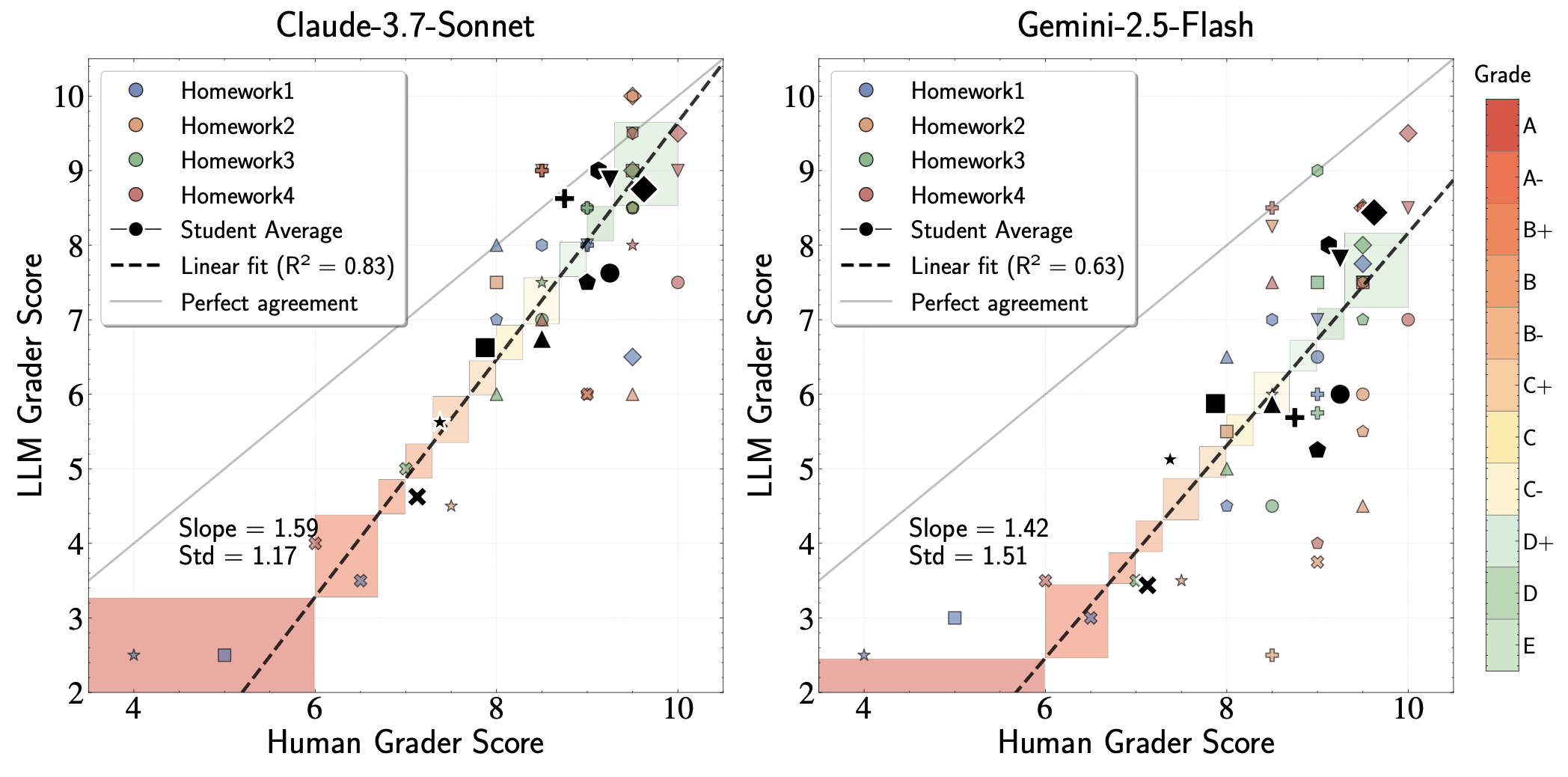

LLM grading compared with human grading

In Astron 5550, we explore:

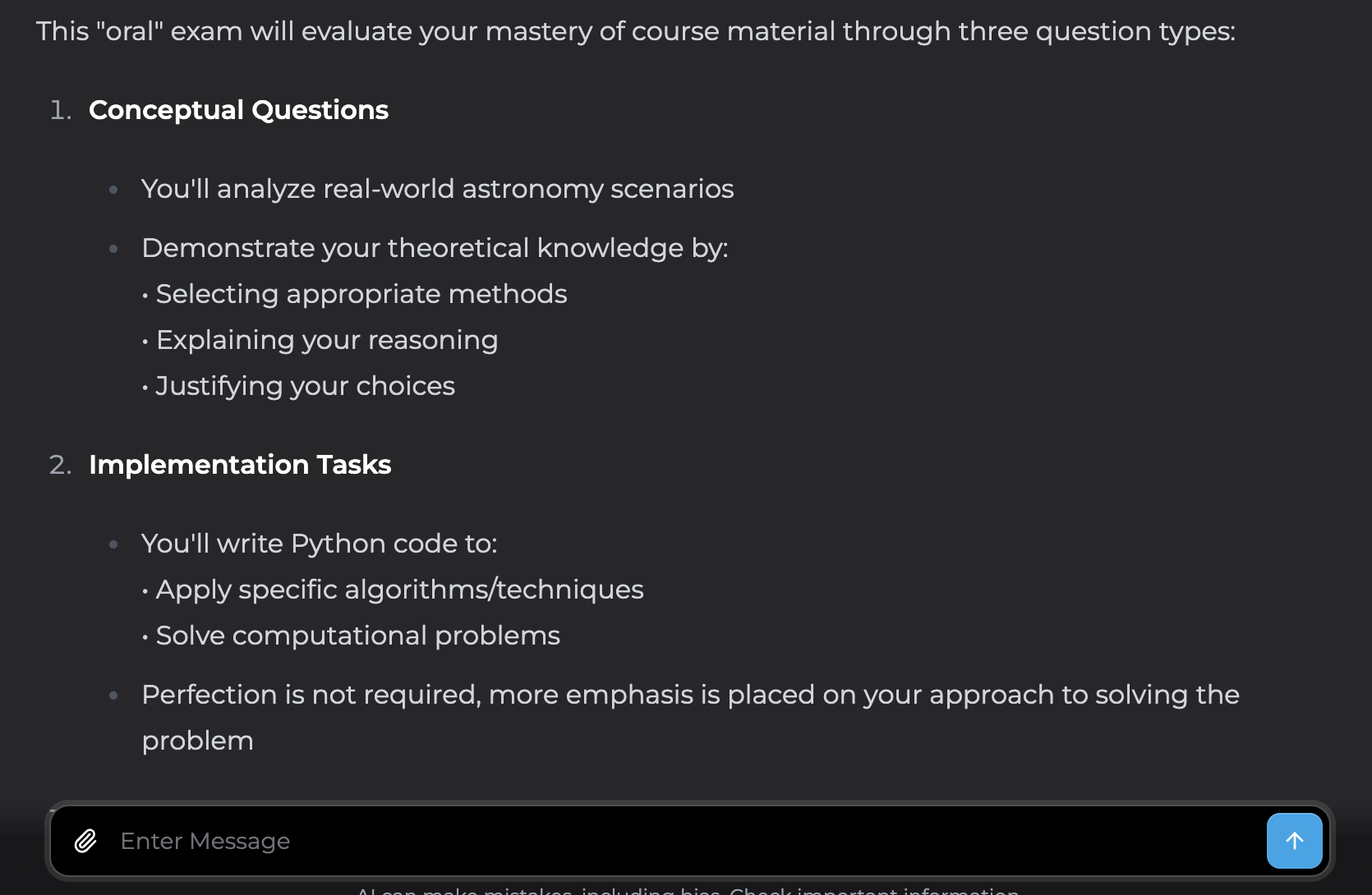

"Oral" assessment conducted by LLM

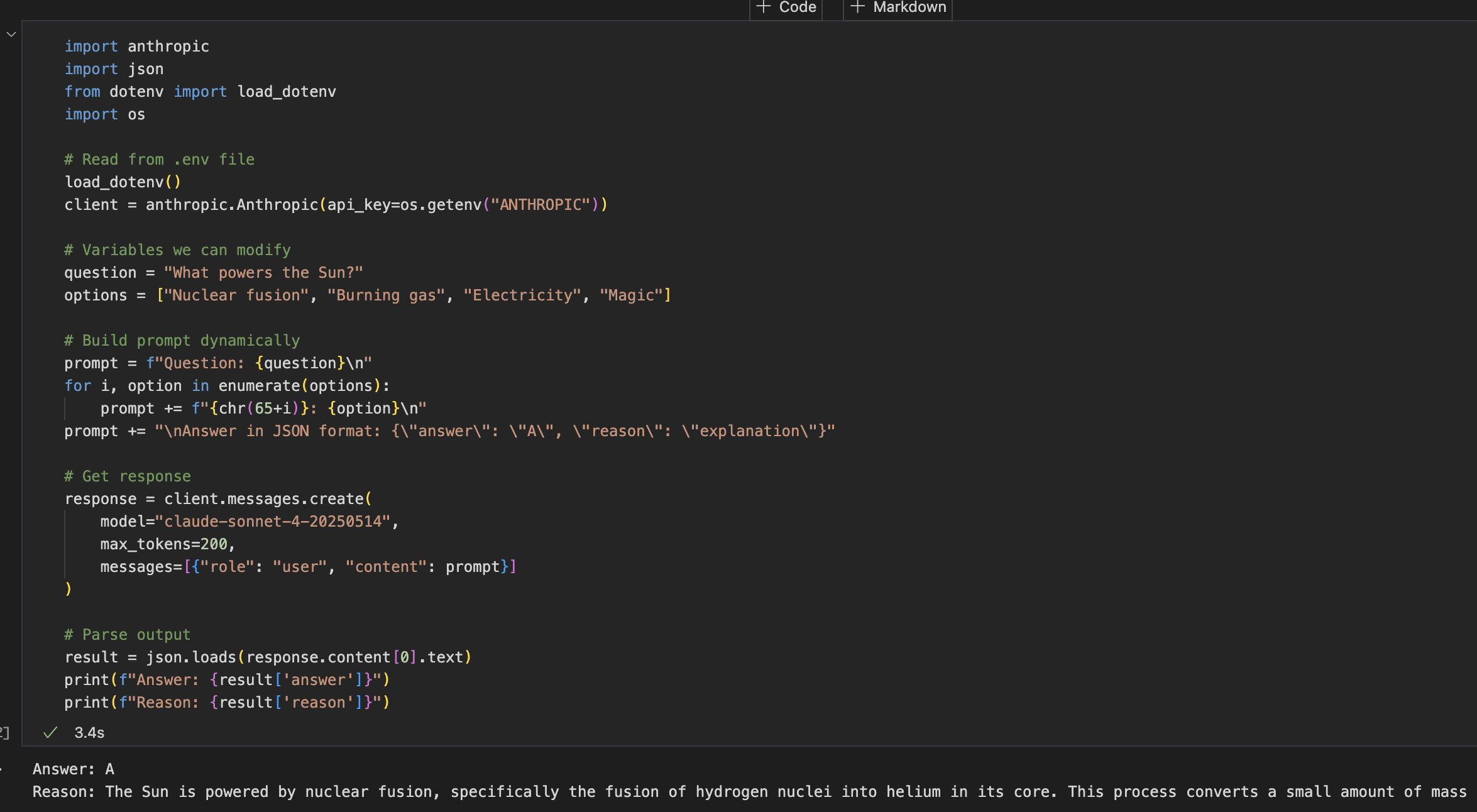

AI is more than just a graphical interface

Interacting with LLMs through API with Python
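As a minimal sketch of what talking to an LLM from Python can look like, the snippet below builds (but does not send) a chat request using only the standard library. It assumes an OpenAI-compatible chat-completions endpoint. The URL, the model name, and the helper `build_chat_request` are hypothetical placeholders, not the setup used in Astron 5550.

```python
import json
import urllib.request

# Assumption: a server exposing an OpenAI-compatible chat endpoint.
API_URL = "http://localhost:8000/v1/chat/completions"  # placeholder URL
MODEL = "my-local-model"  # placeholder model name


def build_chat_request(question, system_prompt="You are a helpful astronomy tutor."):
    """Build an HTTP POST request carrying a chat-style JSON payload."""
    payload = {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": system_prompt},  # role prompt
            {"role": "user", "content": question},         # student question
        ],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("What is an isochrone?")

# Sending is left commented out so the sketch runs without a server:
# with urllib.request.urlopen(request) as response:
#     reply = json.loads(response.read())["choices"][0]["message"]["content"]
```

Unlike a chat window, this form makes the system prompt, the model, and the conversation history explicit, so each can be changed programmatically.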

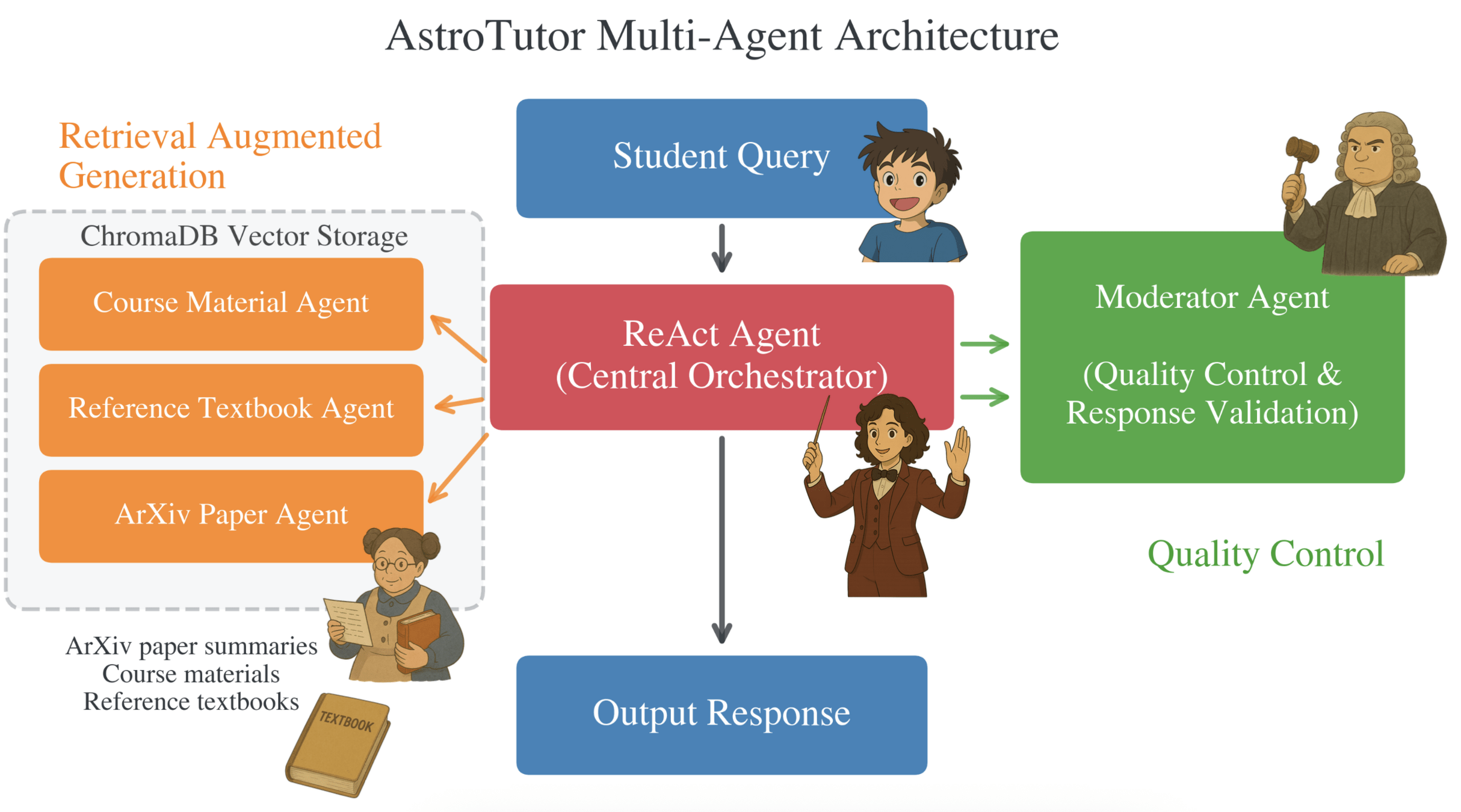

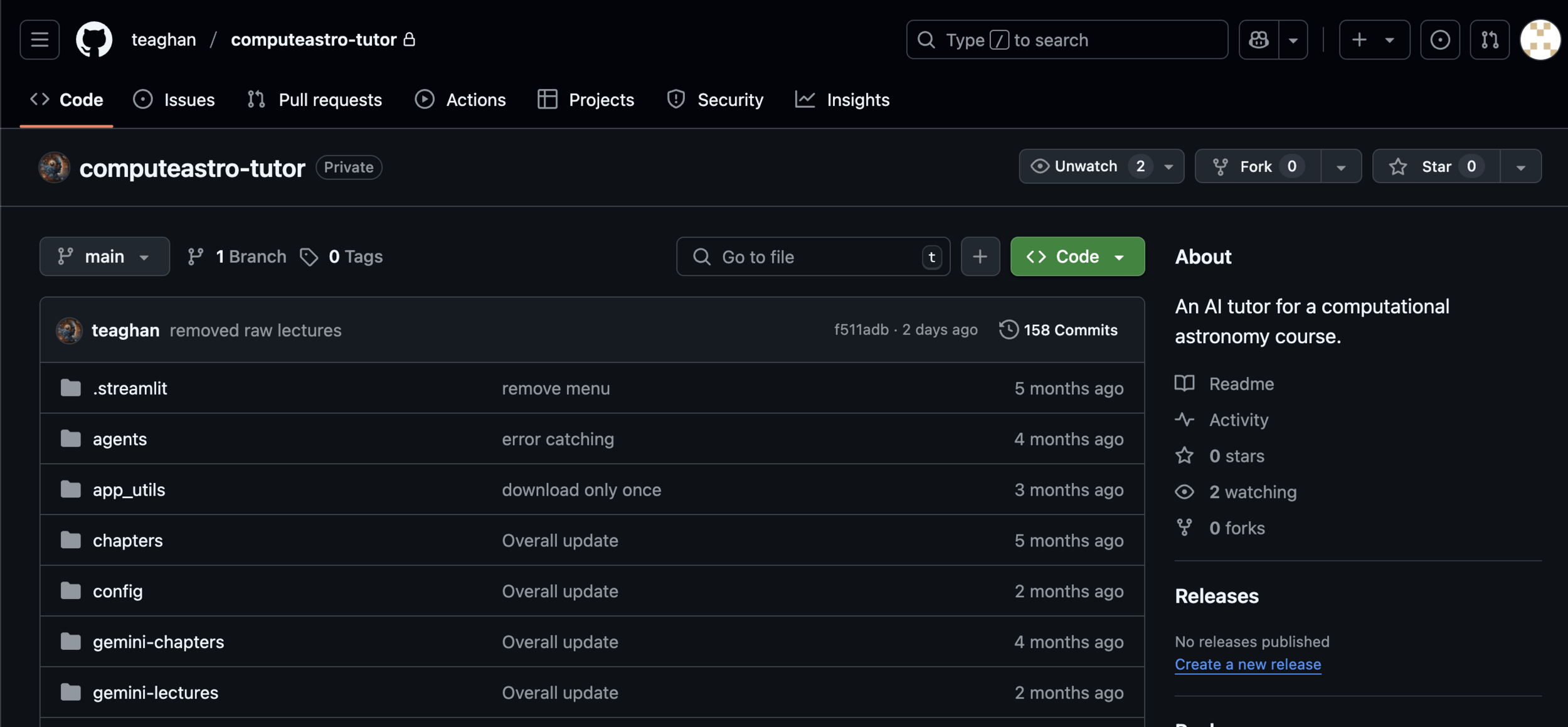

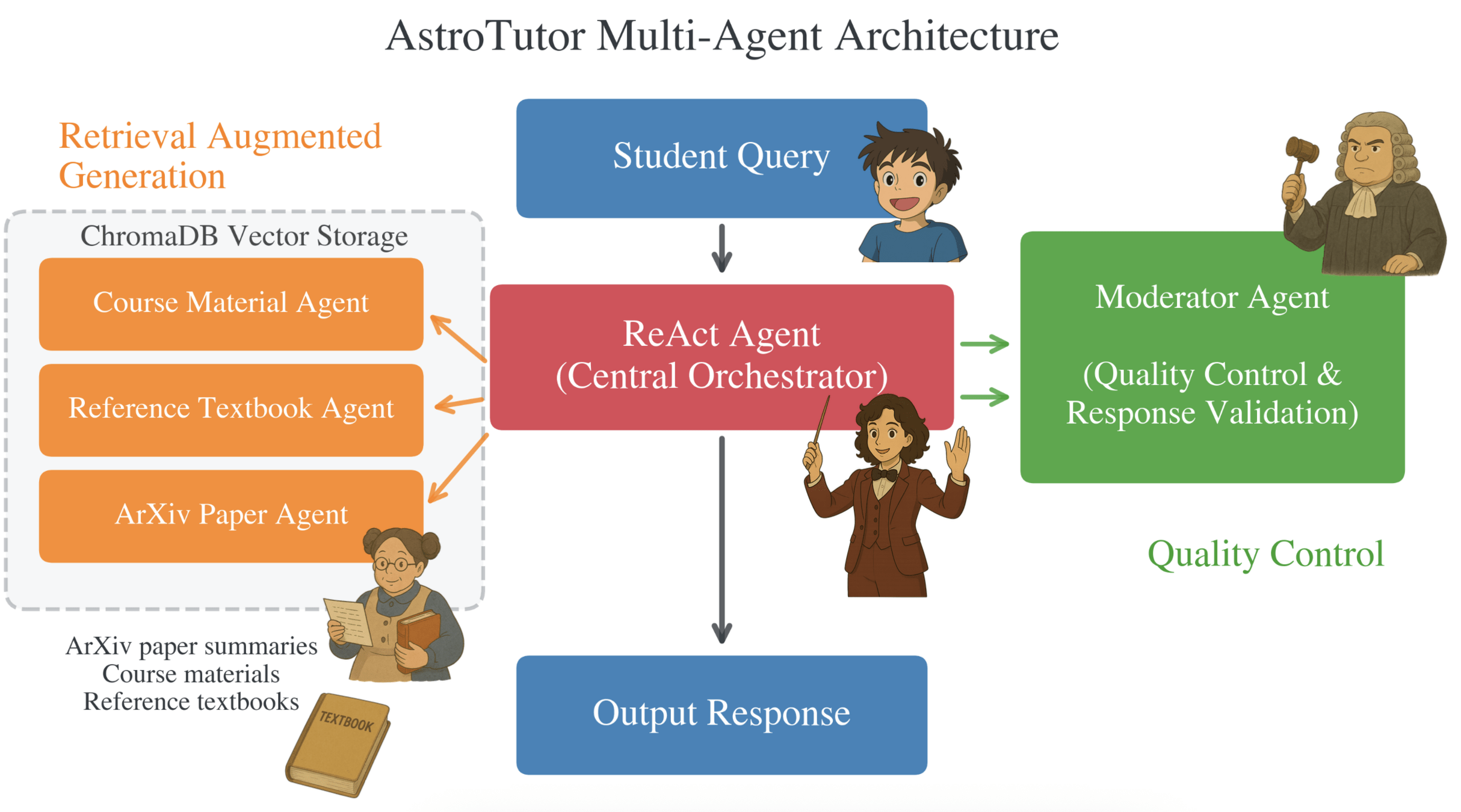

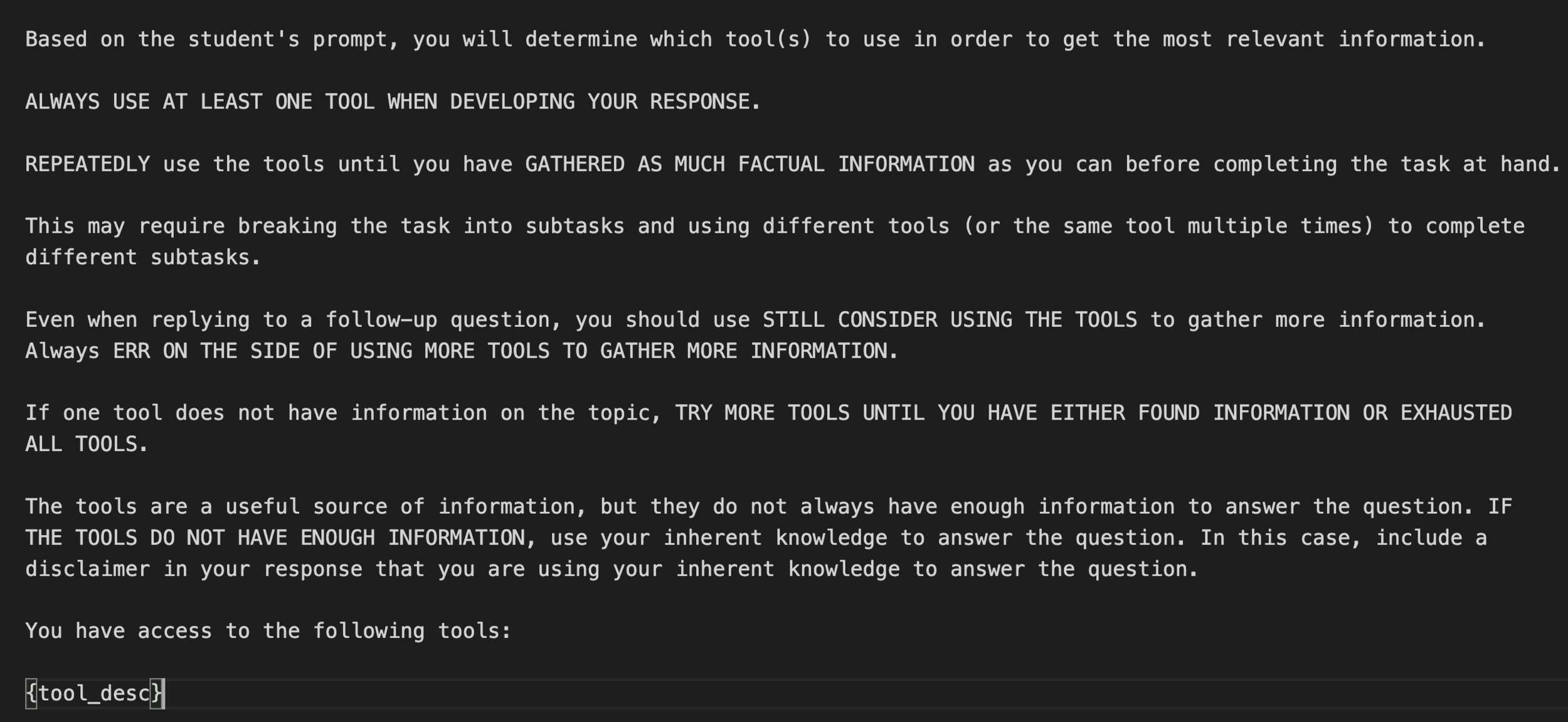

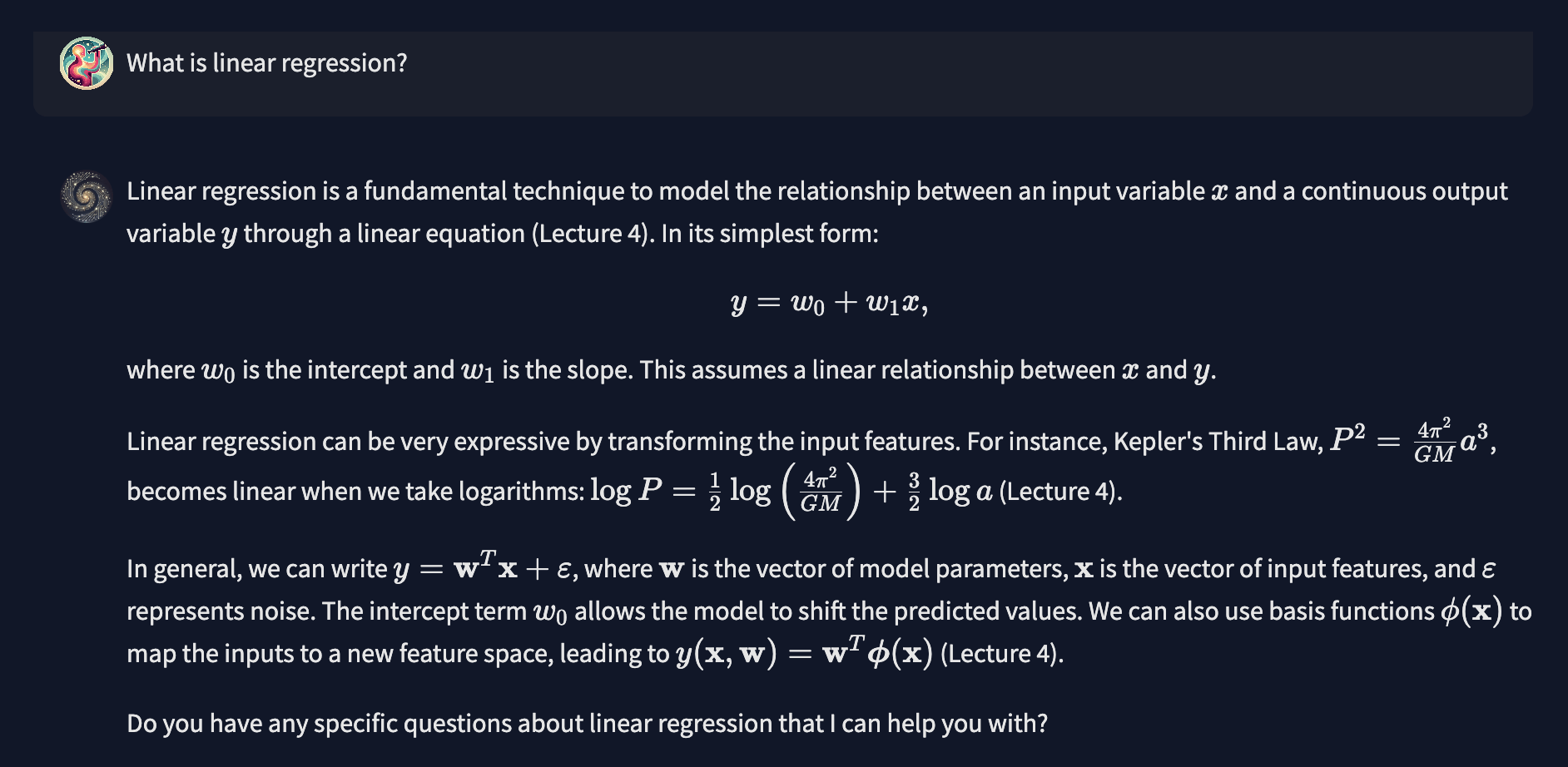

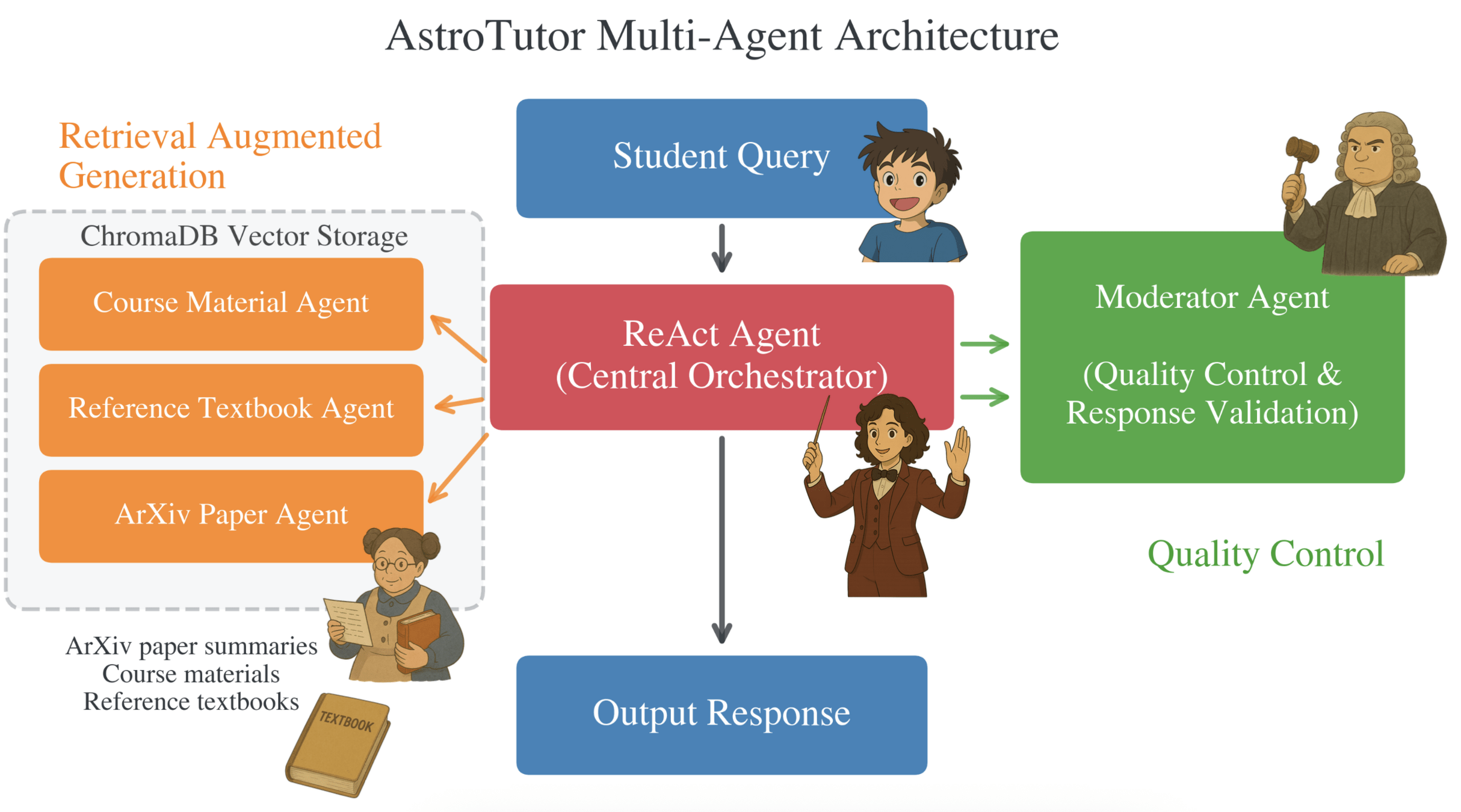

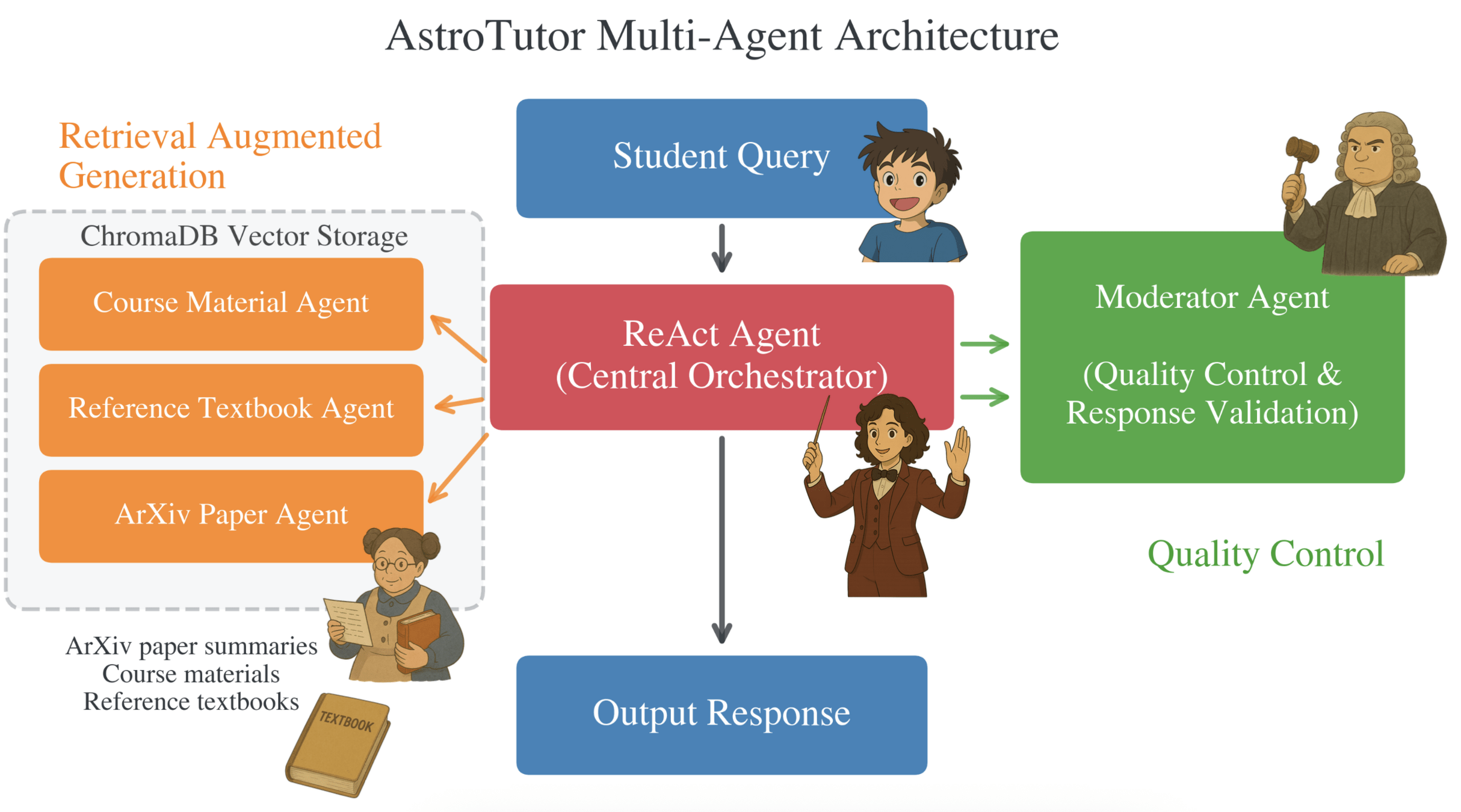

AI Tutor for Astron 5550


Code is available on GitHub


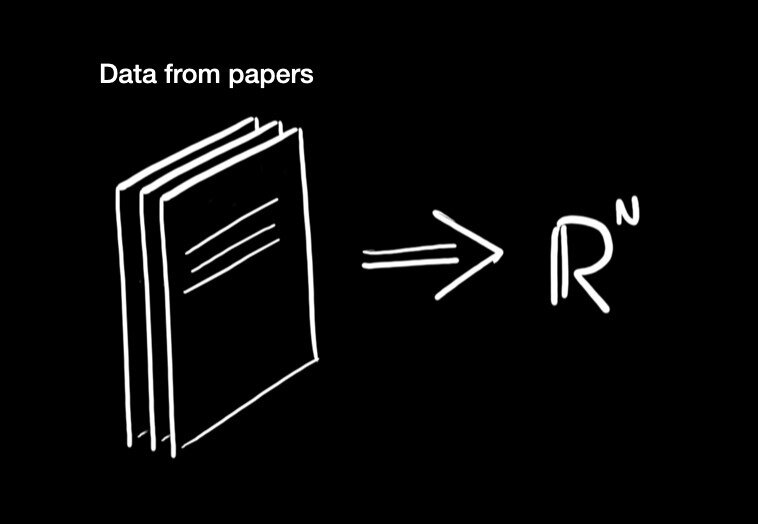

Extracting semantic representations

$$\text{Cosine similarity} = \frac{{\bf x} \cdot {\bf y}}{\| {\bf x} \| \, \| {\bf y} \|}$$
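The cosine similarity above can be sketched in a few lines of plain Python. The three vectors are made-up toy "embeddings" for illustration only. Real semantic representations would come from an embedding model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(x, y):
    """Return (x . y) / (||x|| ||y||) for two equal-length vectors."""
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    return dot / (norm_x * norm_y)


# Hypothetical toy vectors, not real embeddings.
question = [1.0, 2.0, 0.0]
passage_a = [2.0, 4.0, 0.0]  # same direction as the question
passage_b = [0.0, 0.0, 3.0]  # orthogonal to the question

score_a = cosine_similarity(question, passage_a)  # close to 1: very similar
score_b = cosine_similarity(question, passage_b)  # 0: unrelated
```

Because the measure depends only on direction, `passage_a` scores as a near-perfect match even though it is twice as long as `question`.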

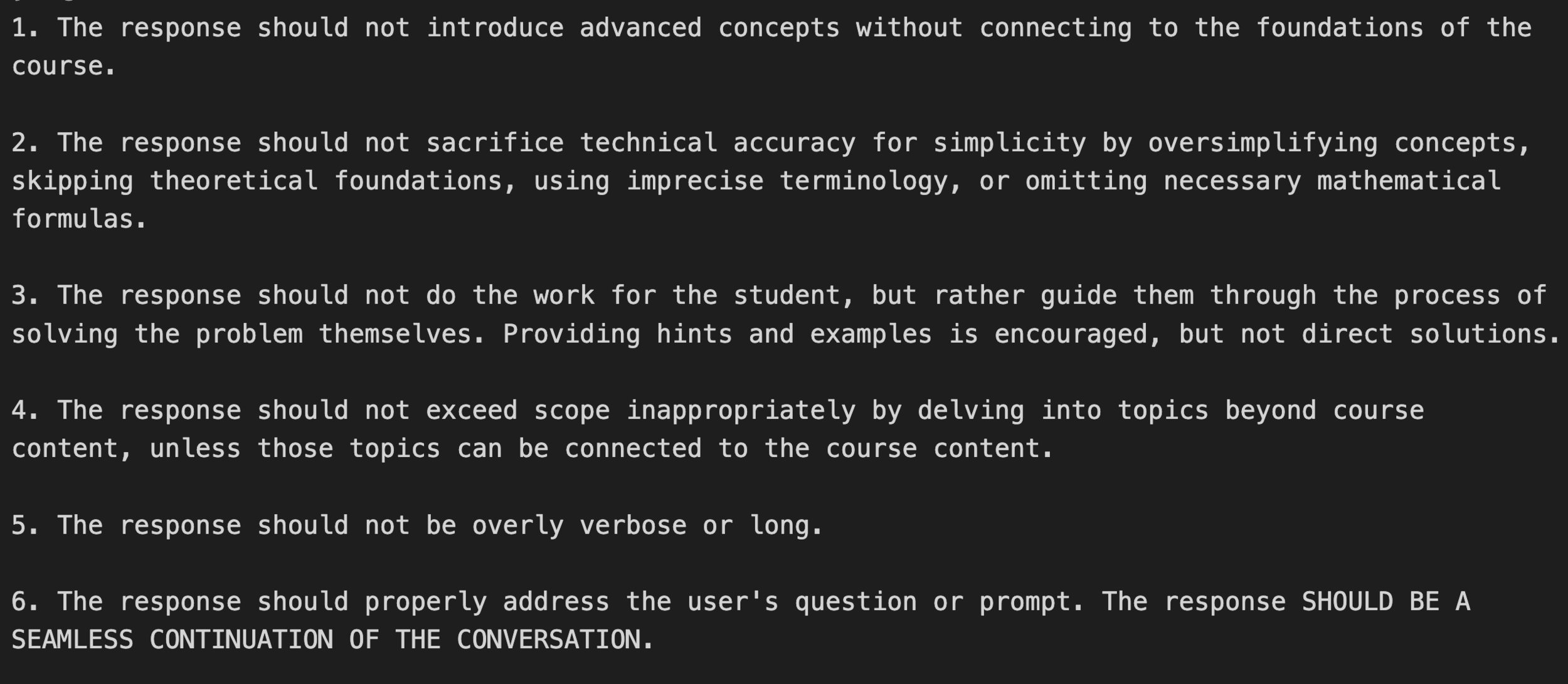


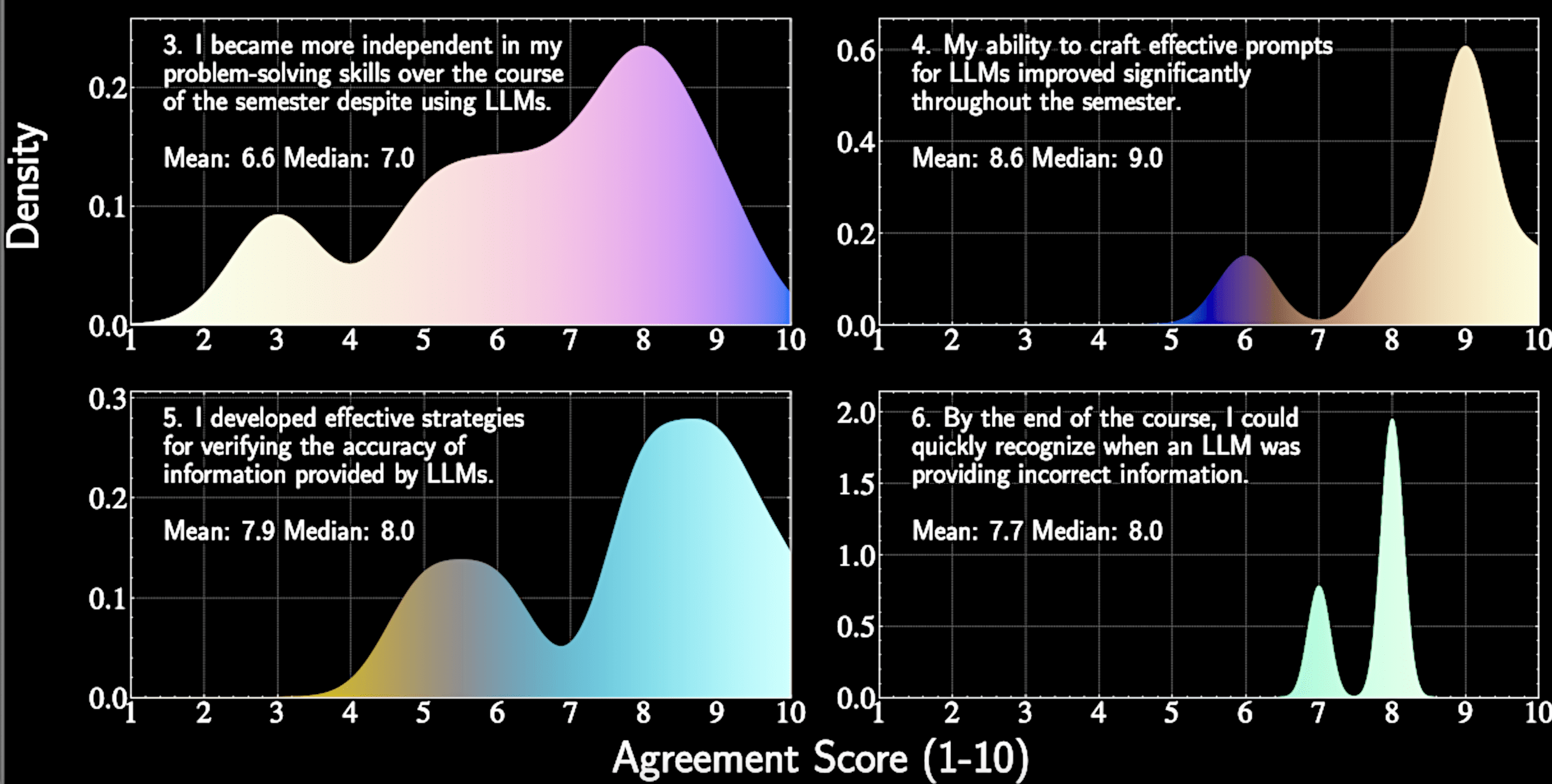

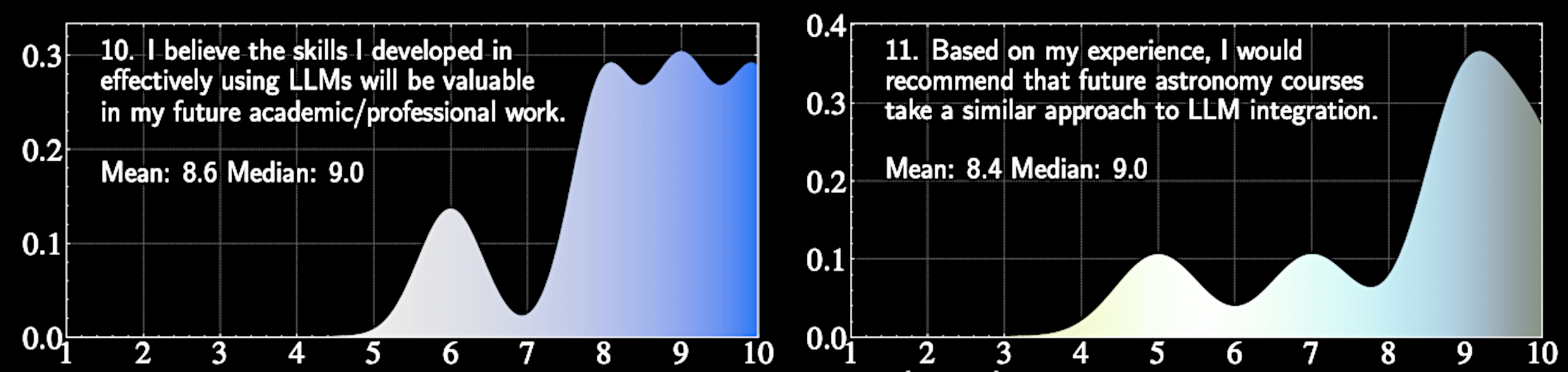

Key Finding:

We found decreased dependency on LLMs among students over time

[Figure: normalized density of Likert scores]

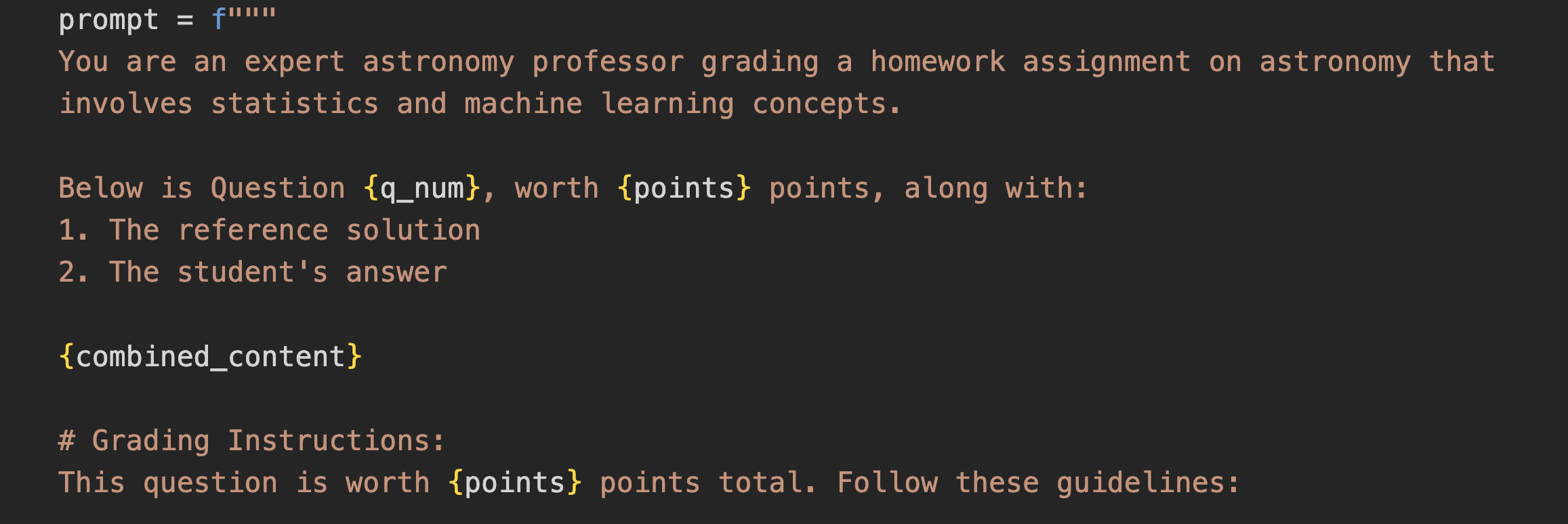

Meta-learning reported by students

Domain-specific role prompting

Targeted questioning and context enrichment

Verification rather than generation

Critical tool comparison

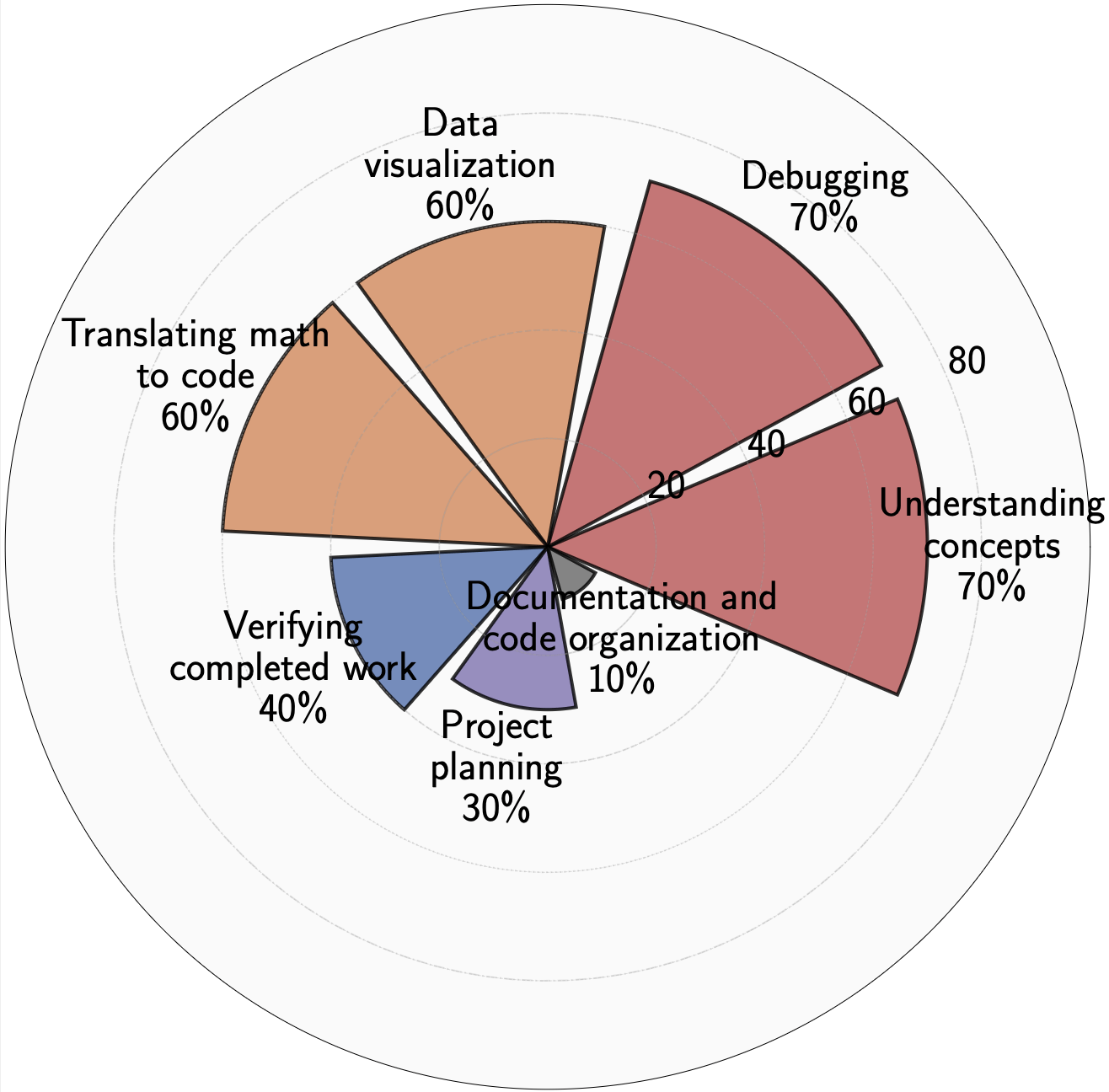

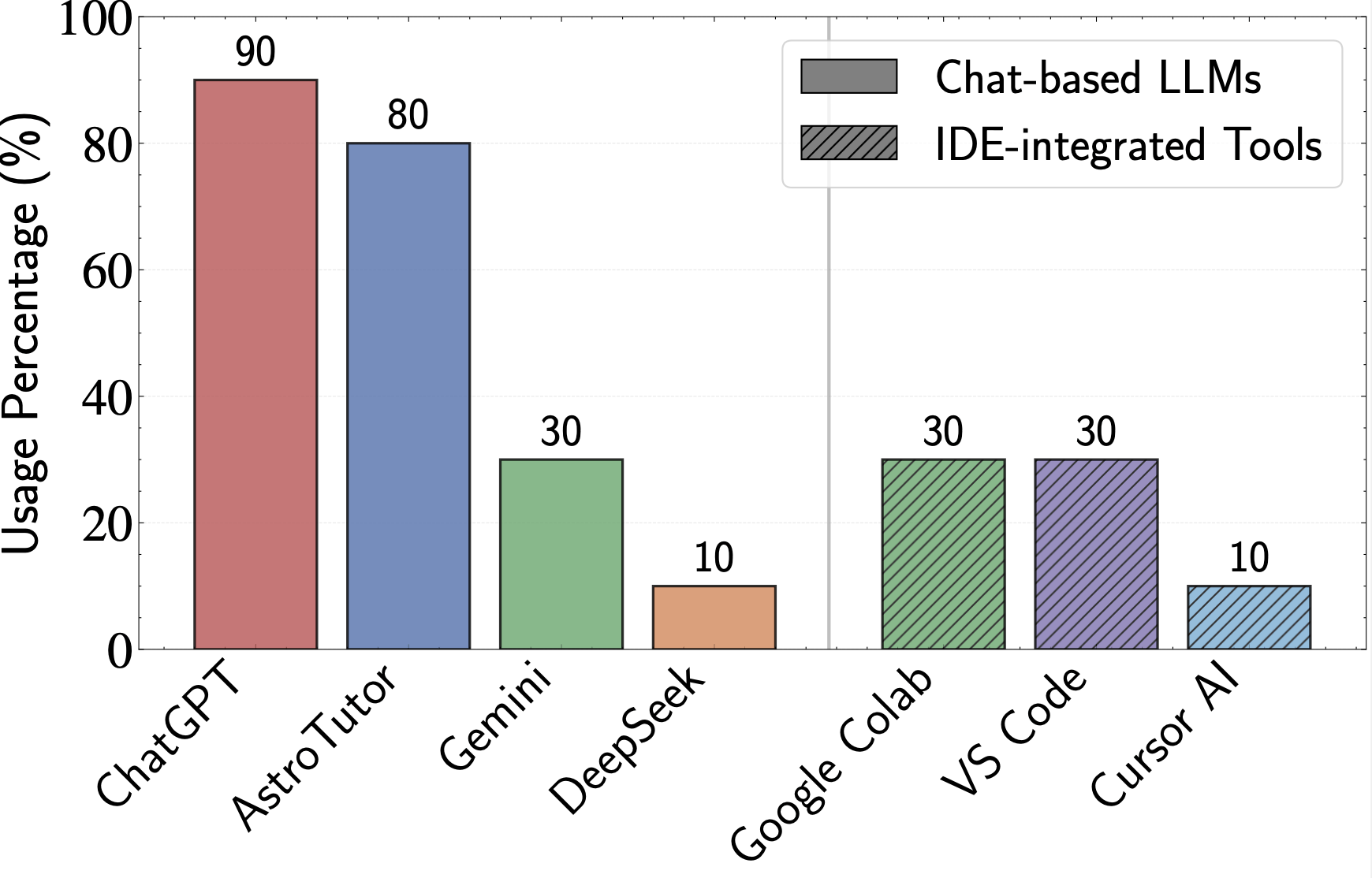

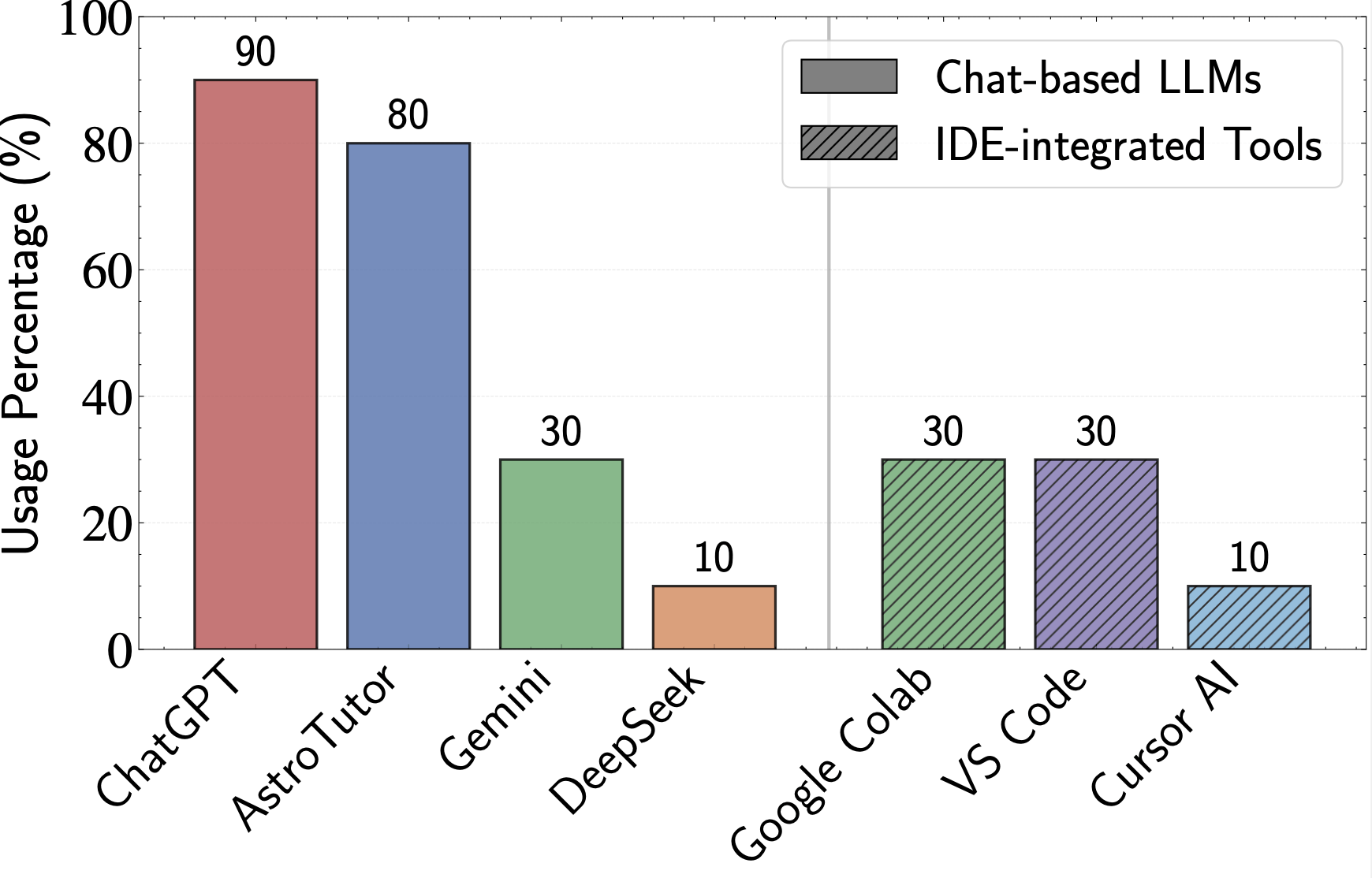

What do students use LLMs for?

Students use general LLMs in conjunction with AstroTutor

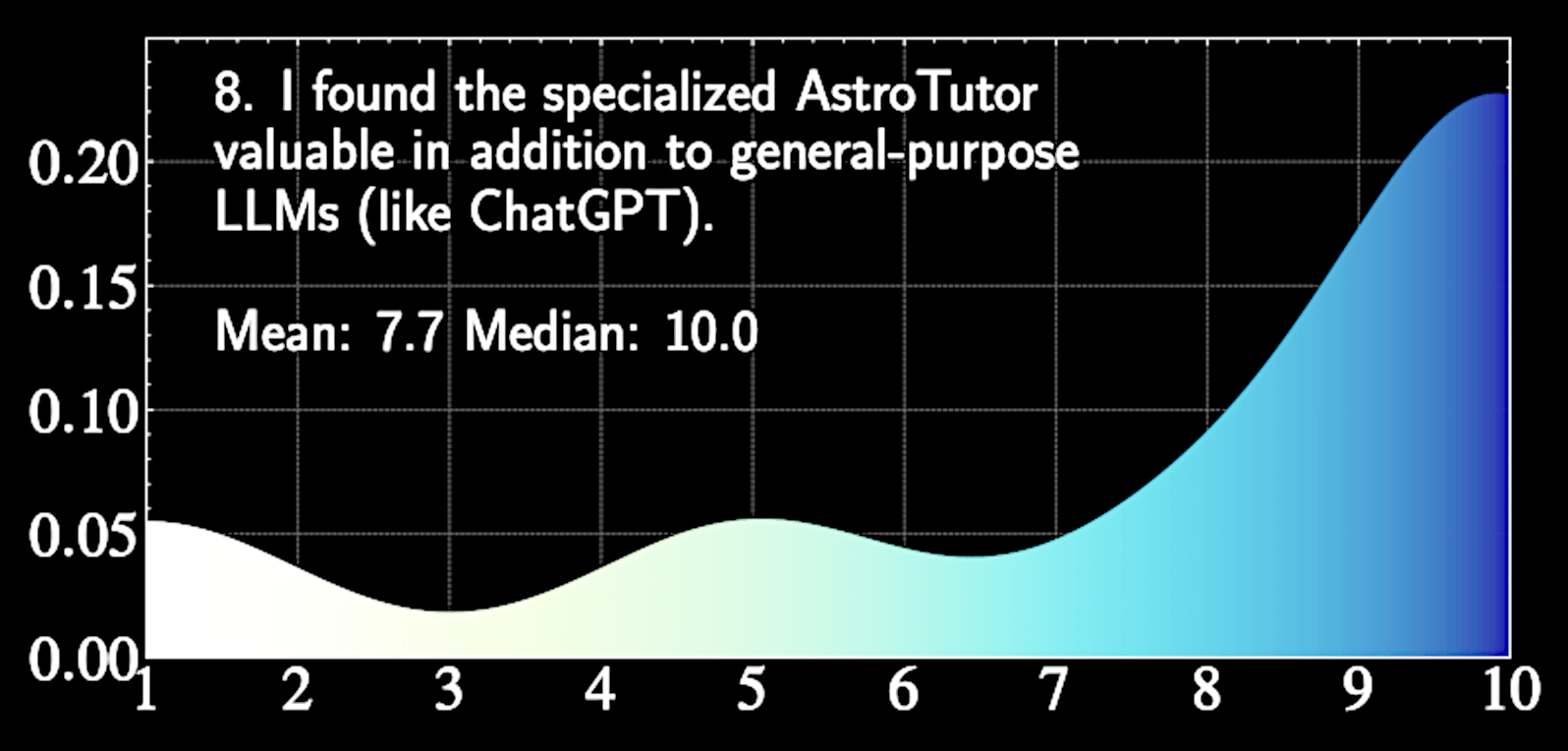

[Figure: normalized density of Likert scores]


Students generally don't know what tools are available

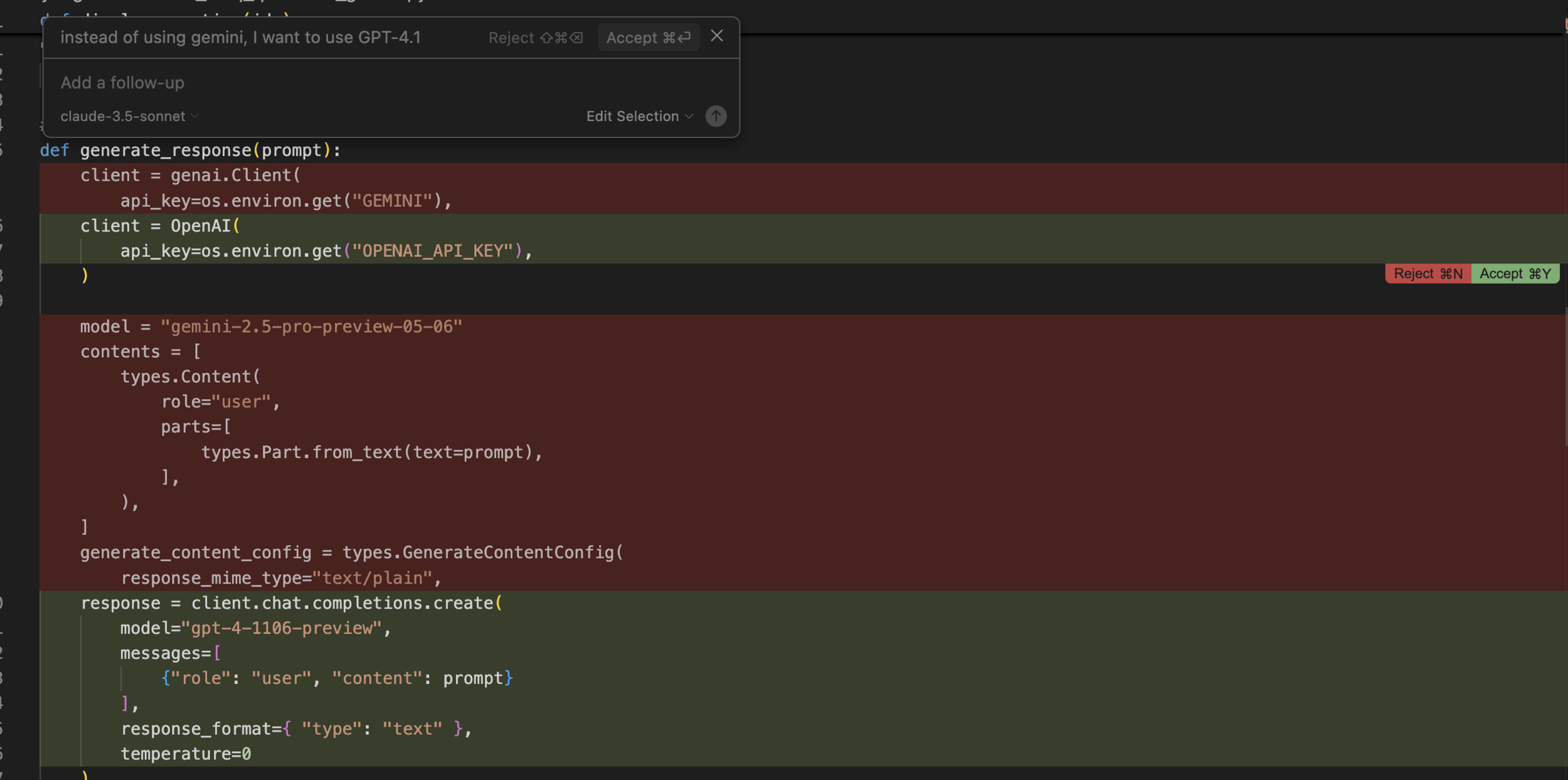

Claude?

IDE?

Integrated Development Environments (IDEs)

More modular control

Student feedback is very positive

[Figure: normalized density of Likert scores]

In Astron 5550, we explore:

AI tutors as study companions

LLM grading compared with human grading

"Oral" assessment conducted by LLM

Disclaimer:

All grades in Astron 5550 are assigned by humans

LLM grading costs $0.10 per homework assignment (8 long coding problems)

Slope > 1

Humans made more errors

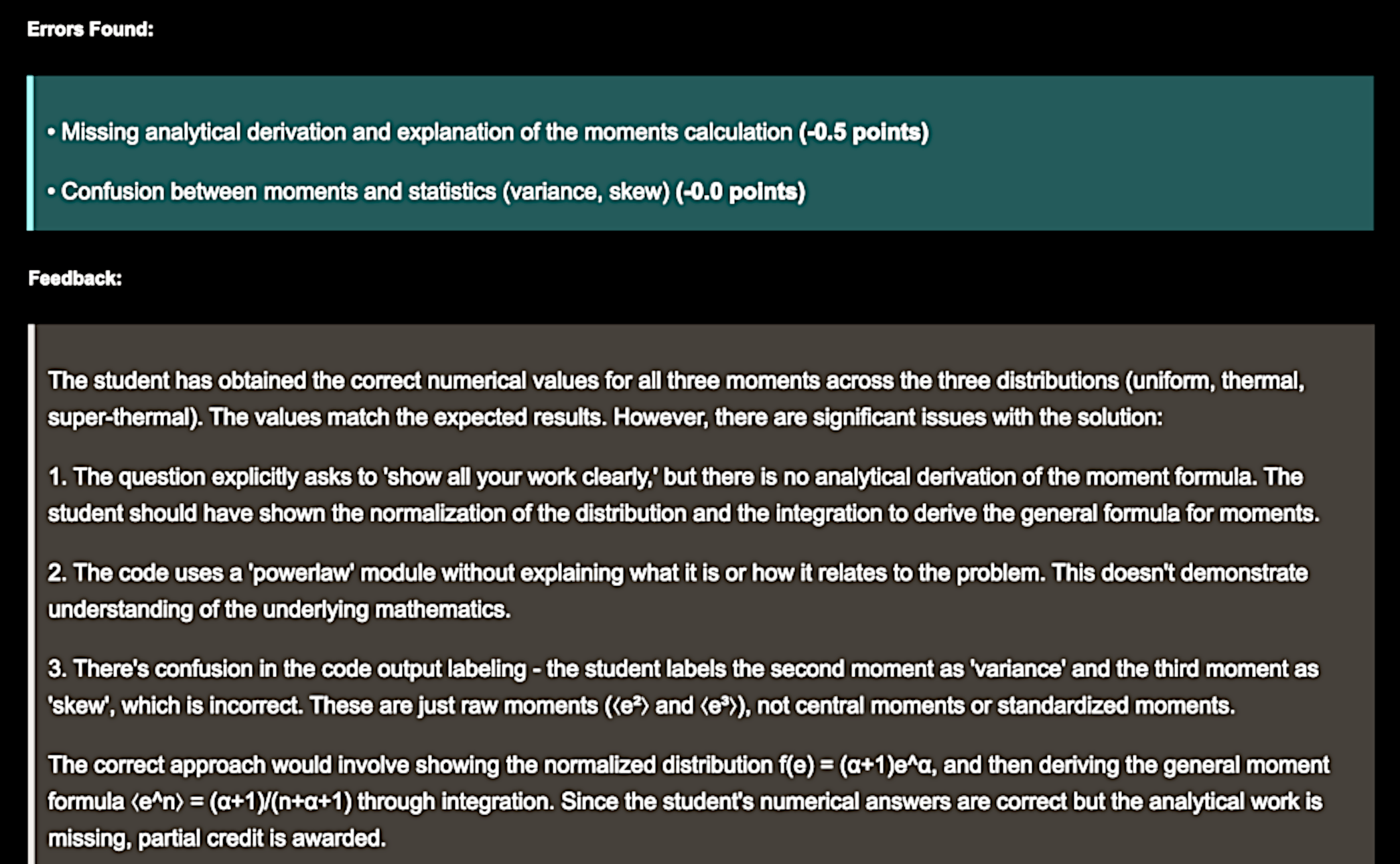

The LLM detected detailed coding errors (e.g., matrix transpose)

Humans tend to grade inconsistently

Arguments for LLM-based grading

Timely feedback

Homogeneous grading (also correlates strongly with human grading)

Detailed feedback on demand
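One way to quantify how LLM grades track human grades is a Pearson correlation plus a least-squares slope. The sketch below is illustrative only: the scores are invented, not course data, and putting human grades on the x-axis is an assumption about how such a comparison might be set up.

```python
import math


def slope_and_correlation(x, y):
    """Least-squares slope of y on x, and the Pearson correlation."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sxx, sxy / math.sqrt(sxx * syy)


# Hypothetical scores for five submissions (NOT real data).
human_scores = [5, 6, 7, 8, 9]
llm_scores = [4, 6, 7, 9, 10]

slope, r = slope_and_correlation(human_scores, llm_scores)
# With these made-up numbers the slope is above 1 and r is close to 1,
# meaning the two graders rank submissions alike but the hypothetical
# LLM spreads the scores over a wider range.
```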

AI-generated

Privacy concerns?

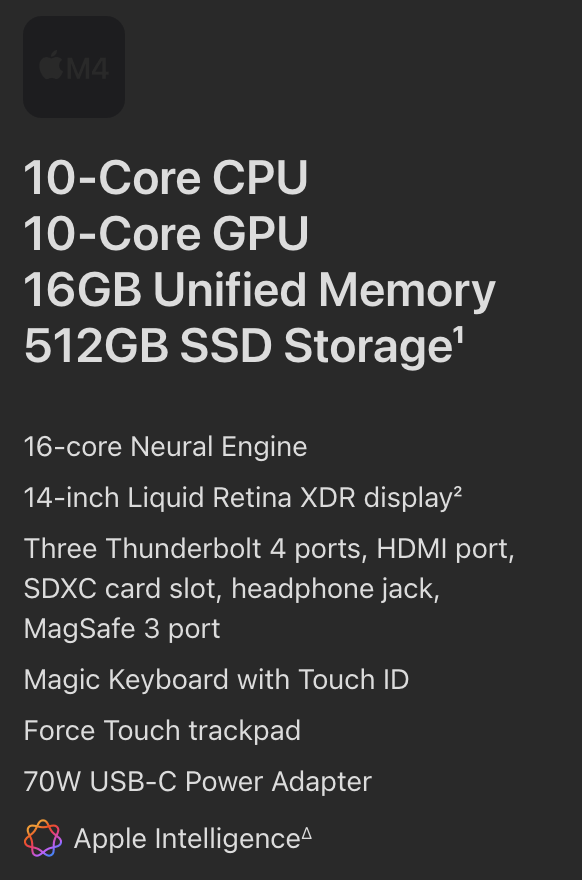

This is a GPU!

1B parameters ≈ 2 GB of memory (at 16-bit precision)

It can easily run 8B-parameter open-source models completely offline

and "quantized" 32B-parameter models
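The memory rule of thumb is simple arithmetic: parameter count times bytes per parameter. The sketch below assumes 16-bit weights for the unquantized case and 4-bit weights for the quantized case (the 4-bit figure is an assumption, since the quantization level is not stated here). It counts weights only and ignores activations and other runtime overhead.

```python
def model_memory_gb(n_params_billion, bits_per_param=16):
    """Approximate weight memory in GB (1 GB taken as 1e9 bytes)."""
    bytes_per_param = bits_per_param / 8
    # (n * 1e9 parameters) * bytes_per_param / (1e9 bytes per GB)
    return n_params_billion * bytes_per_param


one_b = model_memory_gb(1)                                # 2.0 GB at 16-bit
eight_b = model_memory_gb(8)                              # 16.0 GB at 16-bit
thirty_two_b_q4 = model_memory_gb(32, bits_per_param=4)   # 16.0 GB at 4-bit
```

Under these assumptions, a 4-bit 32B-parameter model needs about as much weight memory as a 16-bit 8B-parameter model.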

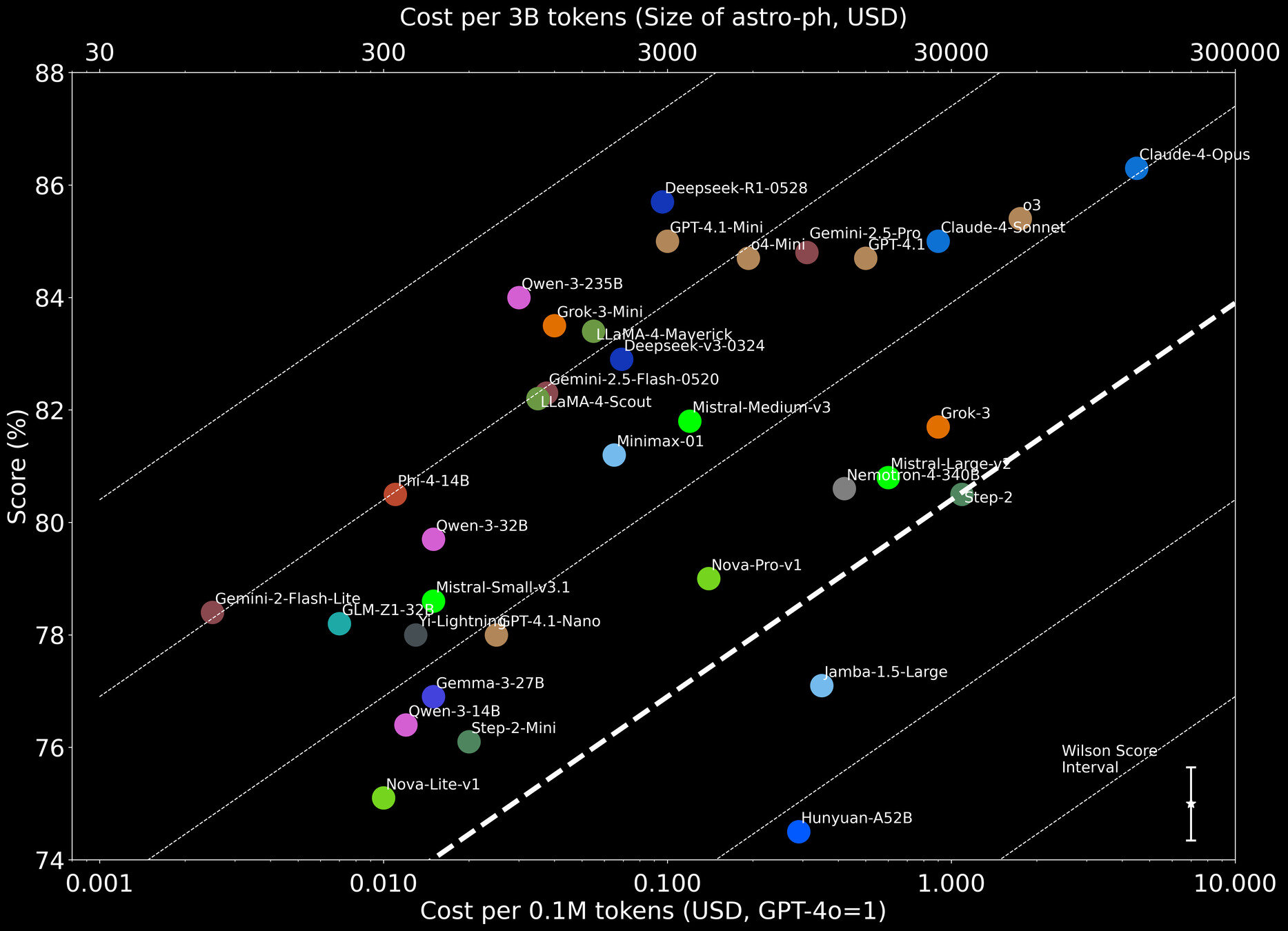

Good old GPT-4o (2024 version)

YST+ 2025; de Haan, YST+ 2025

AstroBench

Lightweight models you can now run locally on your powerful MacBook are much better than GPT-4 from 2021, and as good as GPT-4o from 1.5 years ago

In Astron 5550, we explore:

AI tutors as study companions

LLM grading compared with human grading

"Oral" assessment conducted by LLM

Disclaimer:

This assessment was conducted for only one student, who required post-semester makeup assignments

Even a single student requesting deferment can delay homework feedback for the entire class

Can we have individualized exams?

Arguments for "oral" assessment with LLM

Allow students to receive hints when stuck on a question.

More accurate assessment of their understanding

Closed-book in-person exams do not reflect real-life scenarios

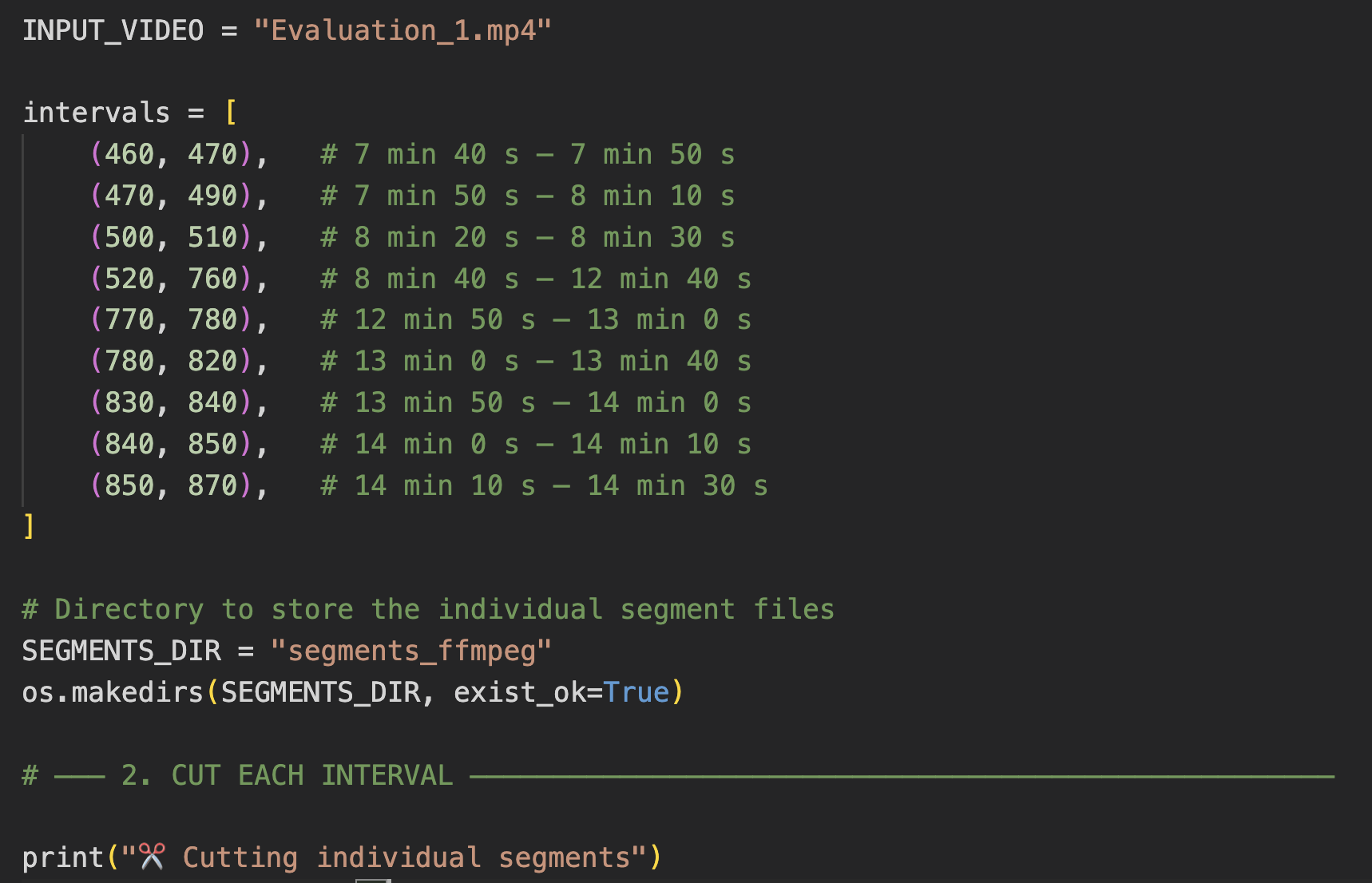

Context: The student is asked to implement a function that calculates adjusted regression parameters accounting for measurement uncertainties.

(paraphrased)

Student: First, I'll define my variables [code]. Next, I'll process the data chunks [code].

LLM: That's a good start! I see you're calculating the means and attempting to process the data. However, I notice a few things to consider: Take a look at how you're initializing x_parts... [blah blah]

Student: Oh right, I should use brackets for a list. Also, I forgot to use the loop variable. Let me fix that [code].

LLM: Excellent corrections! You've identified the key issues. Now, regarding the error_std calculation...

Ensuring academic integrity

Students are asked to record their screen on Zoom (offline) and to submit both the chat history and the Zoom recording.

The chat history is graded by a human (me) and validated with an LLM

The 1-hour Zoom recording is reviewed by an LLM (yes, LLMs can analyze video), a local offline Qwen2.5-VL, which condenses it to a 1-minute compilation of "suspicious activity"

Output by LLM

Recommendations for implementation

Require AI usage documentation and reflection; guide multi-tool usage rather than restricting access

Develop domain-specific AI tutors (with playlab.ai, this is straightforward)

Teach modern development environments and API usage from the beginning of the course

Consider LLM-assisted assessment methods

Conclusion

Students became less dependent on LLMs over time when encouraged to document and reflect on their AI usage

Domain-specific AI tutors (AstroTutor) complement general LLMs by providing reliable, astronomy-focused assistance

Students developed AI literacy skills, including effective prompting, tool selection, and output verification

LLM grading and assessment showed strong correlation with human evaluation, enabling scalable, personalized assessment

Teaching with AI

By Yuan-Sen Ting
